Scoped custom element registries can silently break crawlability

Summary

Chrome and Edge 146 now ship scoped custom element registries by default, letting developers isolate element definitions to specific DOM subtrees instead of using the global registry.

Scoped registries risk breaking crawlability because Googlebot may not execute the JavaScript needed to initialize them before crawling ends, leaving critical content unrendered in search results.

Search your codebase for `new CustomElementRegistry` and `shadowrootcustomelementregistry` to find scoped registries, then test rendering in Google's Rich Results Test to confirm above-the-fold content appears in the rendered HTML.

What happened

Chrome and Edge 146 now ship scoped custom element registries by default. The feature, developed by the Microsoft Edge team, lets developers create independent `CustomElementRegistry` instances instead of relying on the single global `window.customElements` registry. Each scoped registry maintains its own set of custom element definitions, isolated from the global registry and from other scoped registries.

The feature solves a real problem. Large applications that compose UIs from multiple teams or micro-frontend libraries frequently hit naming collisions. If two libraries both define `<my-button>` in the global registry, the second `customElements.define()` call throws an error. Scoped registries eliminate that by letting each shadow root, document, or individual element use its own registry.

Registries can be scoped three ways:

- Shadow root scoping: Pass a `customElementRegistry` option when calling `attachShadow()`. All custom elements inside that shadow root resolve against the scoped registry.
- Declarative shadow DOM: Add the `shadowrootcustomelementregistry` attribute to a `<template>` element. The browser reserves space for the registry, and JavaScript defines its elements later via the scoped registry’s `define()` method.
- Disconnected documents: Scope a registry to an off-screen document created by `document.implementation.createHTMLDocument()`, useful for template cloning and off-screen manipulation.
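As a rough illustration of the first option, the sketch below creates a registry, defines one element in it, and passes it to `attachShadow()`. The helper name `mountScopedButton`, the `MyButton` class, and the `env` parameter (used only so the function can bail out where the API is missing) are hypothetical and not taken from any library.

```javascript
// Sketch only: assumes a browser that ships scoped custom element registries.
// `mountScopedButton`, `MyButton` and `env` are hypothetical names.
function mountScopedButton(host, env = globalThis) {
  const { CustomElementRegistry, HTMLElement } = env;

  // Bail out where the API is missing (older browsers, Node, some renderers).
  if (typeof CustomElementRegistry !== "function" || typeof HTMLElement !== "function") {
    return null;
  }

  let registry;
  try {
    // An independent registry; nothing here touches window.customElements.
    registry = new CustomElementRegistry();
  } catch {
    // The interface exists but cannot be constructed, so scoping is unsupported.
    return null;
  }

  class MyButton extends HTMLElement {
    connectedCallback() {
      this.textContent = "Scoped button";
    }
  }
  registry.define("my-button", MyButton);

  // The customElementRegistry option scopes element lookups inside this root.
  const root = host.attachShadow({ mode: "open", customElementRegistry: registry });
  root.innerHTML = "<my-button></my-button>";
  return root;
}
```

A second library could call the same kind of helper with its own `<my-button>` class and a different host, and the two definitions should not collide because each lives in its own registry.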

MDN’s documentation on custom elements confirms the feature and notes that scoped registries can “limit definitions to a particular DOM subtree.”

Why it matters

Googlebot renders pages using a Chromium-based renderer, but it does not execute JavaScript in real time the way a user’s browser does: rendering happens in a separate, deferred pass after the initial crawl. Content inside shadow DOM has always been harder for crawlers to access. Scoped registries add another layer of indirection.

When a custom element’s definition lives in a scoped registry attached to a shadow root, the element only renders after JavaScript creates the registry, defines the element class, and attaches it. If any step in that chain fails or runs after the crawler’s rendering window closes, the element stays undefined. The browser shows nothing, or shows fallback content.

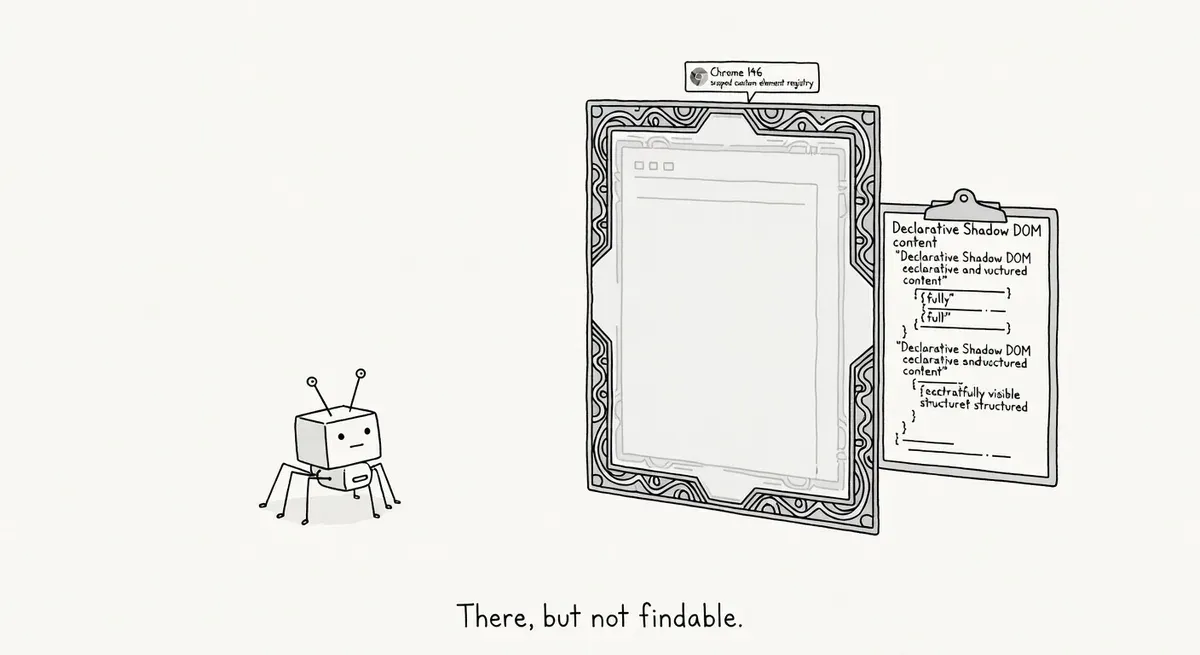

The declarative shadow DOM path is particularly tricky. The `shadowrootcustomelementregistry` attribute tells the browser that the shadow root uses a scoped registry rather than the global one. Until JavaScript defines elements in the scoped registry and attaches it to that shadow root, the custom elements inside it remain unresolved. Web.dev’s declarative shadow DOM article explains that declarative shadow DOM itself is now baseline across browsers, but the scoped registry extension requires explicit JavaScript initialization.

Micro-frontend architectures are the primary adopters. Sites built with frameworks like single-spa, Module Federation, or custom shell apps often compose pages from independently deployed bundles. Each bundle can now register its own element names without coordination. The tradeoff is that critical content rendered by these scoped elements depends entirely on JavaScript execution order.

For sites where scoped elements render above-the-fold content, product information, or navigational links, the crawlability risk is real. Googlebot may see an empty `<my-card></my-card>` instead of the rendered output.

What to do

Check whether your site uses scoped custom element registries by searching your codebase for `new CustomElementRegistry()` and `shadowrootcustomelementregistry`. If neither appears, no action is needed.
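The search can be scripted. The following is a minimal Node.js sketch, not a finished tool: the function names, the default extension list, and the skipped directories are assumptions chosen for illustration.

```javascript
// The two markers named above; the first regex tolerates extra whitespace.
const SCOPED_REGISTRY_PATTERNS = [
  { label: "new CustomElementRegistry", regex: /new\s+CustomElementRegistry\b/ },
  { label: "shadowrootcustomelementregistry", regex: /shadowrootcustomelementregistry/i },
];

// Scans one file's text and returns a hit per matching line.
function findScopedRegistryUsage(file, source) {
  const hits = [];
  source.split(/\r?\n/).forEach((text, index) => {
    for (const { label, regex } of SCOPED_REGISTRY_PATTERNS) {
      if (regex.test(text)) {
        hits.push({ file, line: index + 1, label, text: text.trim() });
      }
    }
  });
  return hits;
}

// Walks a directory tree and scans files with the given extensions.
// Dynamic import() is used so the sketch loads as either CommonJS or ESM.
async function scanDirectory(dir, extensions = [".js", ".ts", ".html"]) {
  const { readdir, readFile } = await import("node:fs/promises");
  const { join, extname } = await import("node:path");
  const hits = [];
  for (const entry of await readdir(dir, { withFileTypes: true })) {
    const fullPath = join(dir, entry.name);
    if (entry.isDirectory()) {
      // Assumption: dependencies and VCS metadata are skipped by default.
      if (entry.name === "node_modules" || entry.name === ".git") continue;
      hits.push(...(await scanDirectory(fullPath, extensions)));
    } else if (extensions.includes(extname(entry.name))) {
      hits.push(...findScopedRegistryUsage(fullPath, await readFile(fullPath, "utf8")));
    }
  }
  return hits;
}
```

Calling `scanDirectory("src").then(console.table)` would list each hit with its file and line. Because third-party bundles can also create scoped registries, it may be worth running the same scan over built output or selected dependencies.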

If your site does use scoped registries, test how Googlebot sees the rendered output. Use Google’s Rich Results Test or the URL Inspection tool’s “View Tested Page” to confirm that content inside scoped shadow roots actually renders. Compare the rendered HTML against what you expect.
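One way to make that comparison less manual is to paste the rendered HTML into a small check that flags custom element tags with nothing between their opening and closing tags. This is a regex-based sketch under simple assumptions (well-formed tags, no `>` inside attribute values), and `findEmptyCustomElements` is a hypothetical helper name. An empty tag is only a hint that a definition never ran, not proof.

```javascript
// Finds custom element tags (names containing a hyphen) that have no content
// between their opening and closing tags, e.g. <my-card></my-card>.
function findEmptyCustomElements(renderedHtml) {
  const emptyTag = /<([a-z][a-z0-9._]*-[a-z0-9._-]*)(\s[^>]*)?>\s*<\/\1>/gi;
  const names = new Set();
  for (const match of renderedHtml.matchAll(emptyTag)) {
    names.add(match[1].toLowerCase());
  }
  // Sorted, de-duplicated tag names that appeared empty at least once.
  return [...names].sort();
}
```

Any tag name this returns for a page that should show product text or navigation is a candidate for closer inspection in the URL Inspection tool.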

For content that must be crawlable, avoid placing it exclusively inside scoped-registry shadow roots. Server-side render the critical text content into the light DOM or into declarative shadow DOM that does not depend on scoped registry initialization. The `<slot>` element can project light DOM children into shadow roots, keeping the text accessible to crawlers even if the component’s JavaScript hasn’t executed.
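A server-side render function following this pattern might look like the sketch below. The `<product-card>` element, its fields, and both function names are hypothetical. The shadow template holds only styling and a `<slot>`, and it deliberately omits the scoped registry attribute, so the text sits in the light DOM whether or not any script runs.

```javascript
// Escapes text for safe inclusion in HTML.
function escapeHtml(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Renders a hypothetical <product-card>. The crawlable text is emitted as
// light DOM children; the declarative shadow root only projects it via <slot>.
function renderProductCard({ title, description }) {
  return [
    "<product-card>",
    '  <template shadowrootmode="open">',
    "    <style>:host { display: block; }</style>",
    "    <slot></slot>",
    "  </template>",
    `  <h2>${escapeHtml(title)}</h2>`,
    `  <p>${escapeHtml(description)}</p>`,
    "</product-card>",
  ].join("\n");
}
```

Interactive behavior can still be layered on later by a component class; the point of the design is that the heading and description do not depend on it.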

If you’re using declarative shadow DOM with the `shadowrootcustomelementregistry` attribute, make sure your SSR pipeline defines the scoped registry’s elements synchronously during hydration. Any delay or conditional loading risks leaving the elements undefined during Googlebot’s render pass.
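A synchronous hydration step could be sketched roughly as follows. It assumes the registry exposes an `initialize(root)` method for attaching it to an existing shadow root, as described in the HTML Standard; that method is not mentioned in this article, so verify it against the browsers you target. `hydrateScopedRoots`, `definitions`, and `env` are hypothetical names.

```javascript
// Sketch only. `definitions` maps tag names to element classes,
// e.g. { "my-card": MyCard }. `env` defaults to the page's global object.
function hydrateScopedRoots(hosts, definitions, env = globalThis) {
  const registry = new env.CustomElementRegistry();

  // Define everything up front, with no awaits or lazy imports in between.
  for (const [name, elementClass] of Object.entries(definitions)) {
    registry.define(name, elementClass);
  }

  // Assumption: initialize() associates the registry with a shadow root that
  // was created declaratively and is still waiting for one.
  let initialized = 0;
  for (const host of hosts) {
    if (host.shadowRoot) {
      registry.initialize(host.shadowRoot);
      initialized += 1;
    }
  }
  return initialized;
}
```

Calling this from a script in the initial bundle, rather than after a dynamic `import()` or an idle callback, keeps the whole chain inside the first render pass. Note that `host.shadowRoot` is only readable for open shadow roots.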

Monitor Google Search Console’s Page indexing report (formerly Coverage) for pages that use micro-frontend composition. Watch for increases in “Not indexed” (formerly “Excluded”) pages or drops in indexed page counts that correlate with deploying scoped registries.

Watch out for

Fallback content is not automatic. A scoped-registry element whose definition never loads stays undefined: it shows only the children already present in the markup and nothing its class would have rendered, so an element authored without children renders empty. There is no built-in fallback mechanism.

Mixed registry conflicts during hydration. If your SSR output uses declarative shadow DOM with the `shadowrootcustomelementregistry` attribute but your client-side JavaScript defines the same element in the global registry instead, the shadow root will not pick up the global definition. The element stays undefined inside the shadow root even though it works everywhere else on the page.