Next.js streaming metadata fails Google indexing

Summary

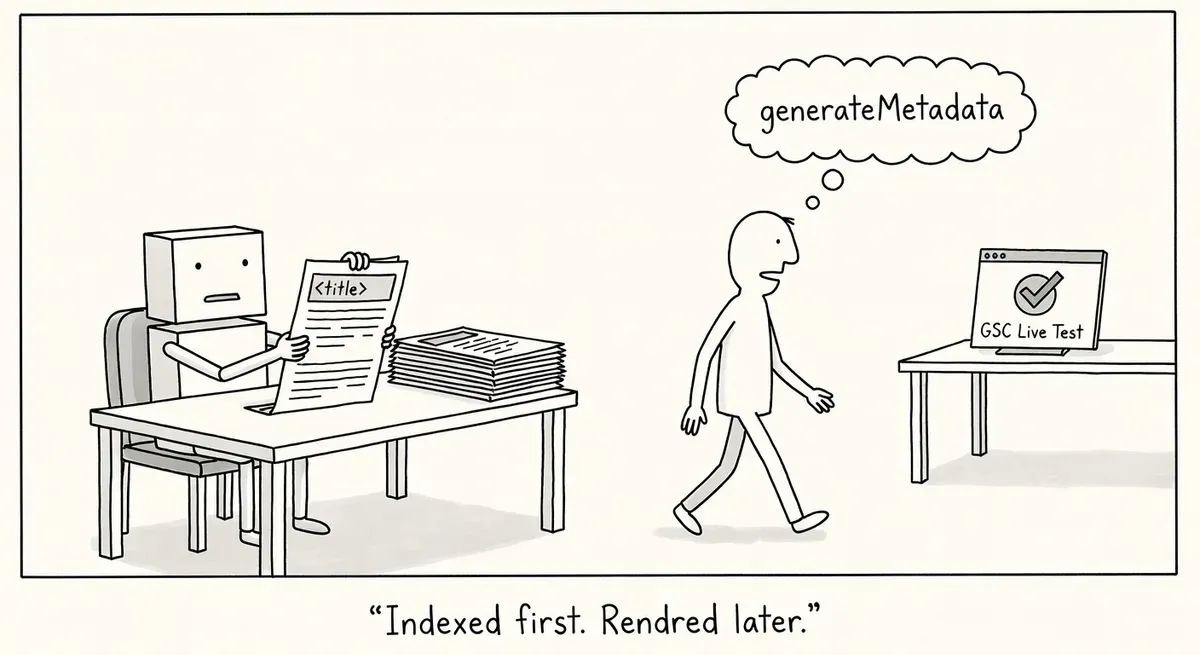

A practitioner found that Next.js streaming metadata left two of three test pages indexed with empty titles, missing canonicals, and no hreflang. Adding Googlebot to htmlLimitedBots didn't fully fix it.

The trap: the Live Test in Google Search Console (GSC) shows everything fine because it runs JS to completion. View Crawled Page shows what Google actually stored.

For critical pages, move metadata to build time. And remember: setting a custom htmlLimitedBots regex replaces the default list, so include AdsBot-Google and Mediapartners-Google in your pattern.

What happened

A practitioner building a site on Next.js reported in r/TechSEO that pages using streaming metadata were indexed by Google with empty <title> tags, missing meta descriptions, missing canonicals, and missing hreflang tags.

Next.js’s generateMetadata function can stream metadata after the initial HTML shell is sent. When the metadata depends on dynamic information, the resolved tags get appended to the <body> rather than appearing in the <head> of the initial server-rendered HTML. The framework includes a config option called htmlLimitedBots that forces blocking (non-streamed) metadata for specific user agents.

The default htmlLimitedBots list includes several Google crawlers like Mediapartners-Google, AdsBot-Google, and Google-PageRenderer. It does not include Googlebot itself. The presumed reasoning is that Googlebot executes JavaScript and can interpret the full DOM, though the documentation does not explicitly state this rationale.

The practitioner added Googlebot to the htmlLimitedBots config to force blocking metadata. Google Search Console’s live test showed the metadata present and correct in rendered HTML. But after submitting three test pages for indexing, the “View Crawled Page” results told a different story. One page had correct metadata in the <head>. The other two had an empty <title> tag in the <head>, with no meta description, no canonical, and no hreflang tags at all.

Why it matters

Google's handling of JavaScript pages is commonly described as two waves. The first wave processes the raw HTML response. The second wave runs JavaScript and inspects the rendered DOM. A page that has not yet gone through the second wave can be indexed based on the initial HTML alone.

If critical metadata only arrives via streaming (appended to <body> after the initial shell), it depends entirely on the second rendering wave to be picked up. Any delay or timeout in that second wave means Google indexes the page without titles, canonicals, or hreflang. Google has also stated that certain tags are ignored if they appear outside <head>, which adds another layer of risk when streamed metadata lands in <body>.

The practitioner’s finding suggests that even after adding Googlebot to htmlLimitedBots, the config may not work reliably in all cases. One of three test pages worked correctly while two did not. Possible causes include generateMetadata timing out before the response was sent, or the htmlLimitedBots config not matching the exact user-agent string Googlebot sends.

Sites using Next.js App Router with dynamic metadata are the most exposed. Static metadata defined via the metadata object in layout.js or page.js is not affected because it gets included in the prerendered HTML without streaming.
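As a rough sketch of the static alternative, a page can export a plain metadata object instead of relying on generateMetadata. The title, description, and example.com URLs below are placeholders, and in a real project the object would normally be annotated with the Metadata type from next; the annotation is left out here so the snippet stands alone.

```typescript
// app/products/page.tsx (hypothetical path; only the metadata export is shown)

// Static metadata: every value is known at build time, so nothing has to be streamed.
export const metadata = {
  title: "Example product page",
  description: "Placeholder description for an example product page.",
  alternates: {
    // Rendered as <link rel="canonical" ...>
    canonical: "https://example.com/products",
    // Rendered as <link rel="alternate" hreflang="..." ...>
    languages: {
      "en-US": "https://example.com/en-US/products",
      "de-DE": "https://example.com/de-DE/products",
    },
  },
};
```

This only works when the values do not depend on request-time data. Pages that need per-request titles or canonicals still go through generateMetadata.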

What to do

Check whether your Next.js site uses generateMetadata with dynamic data. If it does, your metadata may be streamed rather than included in the initial HTML.

Test your pages by viewing the raw HTML response (not the rendered DOM) with curl or wget. If <title>, canonical, and other critical tags are missing or empty in that response, they’re being streamed.
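The check described above can be partly automated. The sketch below takes a raw HTML string (for example, the body returned by curl -A with a Googlebot user-agent string) and reports which critical tags sit inside <head>. The auditHead name and the HeadAudit shape are made up for illustration, and the regex-based parsing is a heuristic, not a full HTML parser.

```typescript
// Result of inspecting the <head> of a raw HTML response.
interface HeadAudit {
  title: string | null; // text of <title>, or null when missing or empty
  hasDescription: boolean;
  hasCanonical: boolean;
  hreflangCount: number;
}

// Heuristic audit: only tags between <head> and </head> count.
// Tags streamed into <body> are deliberately ignored.
function auditHead(html: string): HeadAudit {
  const headMatch = html.match(/<head(?:\s[^>]*)?>([\s\S]*?)<\/head>/i);
  const head = headMatch ? headMatch[1] : "";

  const titleMatch = head.match(/<title(?:\s[^>]*)?>([\s\S]*?)<\/title>/i);
  const titleText = titleMatch ? titleMatch[1].trim() : "";

  return {
    title: titleText.length > 0 ? titleText : null,
    hasDescription: /<meta[^>]+name=["']description["']/i.test(head),
    hasCanonical: /<link[^>]+rel=["']canonical["']/i.test(head),
    hreflangCount: (head.match(/<link[^>]+hreflang=/gi) ?? []).length,
  };
}

// Usage with an inline sample that mimics a streamed response:
// an empty <title> in <head>, with the real tags appended to <body>.
const sampleStreamed =
  '<html><head><title></title></head><body><title>Hi</title>' +
  '<link rel="canonical" href="https://example.com/"></body></html>';
console.log(auditHead(sampleStreamed));
```

A null title or a false hasCanonical in the output suggests the tags are arriving outside <head>, which is the pattern the practitioner saw in the crawled HTML.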

Add Googlebot to your htmlLimitedBots config if you haven’t already:

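    // next.config.ts
    import type { NextConfig } from 'next'

    const config: NextConfig = {
      // Googlebot plus the Google crawlers named above from the default list
      htmlLimitedBots: /Googlebot|Mediapartners-Google|AdsBot-Google|Google-PageRenderer/,
    }

    export default config

Note that setting htmlLimitedBots overrides the default list entirely. Include the default bots alongside Googlebot in your regex. The pattern above carries over only the Google crawlers named in this article, so check the Next.js documentation for the current default list and add any other entries you rely on.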

If you want to eliminate streaming metadata risk completely, you can match all user agents. This turns off streaming metadata for every visitor, so the initial response waits until generateMetadata resolves:

    htmlLimitedBots: /.*/,

After making changes, use Google Search Console’s URL Inspection tool to request indexing on a few test pages. Compare the “Crawled Page” HTML against what you expect. Pay attention to whether <title>, meta description, canonical, and hreflang tags appear in the <head> of the crawled HTML, not just the rendered HTML.

Monitor the Google crawlers documentation for the user-agent strings Googlebot sends. Your regex must match a token that actually appears in those strings; an anchored or misspelled pattern will silently miss them.
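One way to sanity-check a pattern before deploying is to run it against known user-agent strings. The sketch below assumes the pattern is tested as an ordinary unanchored regex against the User-Agent header; that is an assumption about how the option is applied, not something confirmed here. The articlePattern, anchoredPattern, and matchReport names are illustrative, and the pattern contains only the tokens named in this article, not the actual Next.js default list.

```typescript
// Illustrative pattern built from the tokens named in this article.
const articlePattern = /Googlebot|Mediapartners-Google|AdsBot-Google|Google-PageRenderer/;

// A plausible mistake: anchoring to the start of the string.
const anchoredPattern = /^Googlebot/;

// Googlebot strings as published in Google's crawler documentation
// (Chrome/W.X.Y.Z is Google's own placeholder), plus an ordinary browser.
const sampleUserAgents: Record<string, string> = {
  googlebotDesktop:
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
  googlebotSmartphone:
    "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 " +
    "(KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 " +
    "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
  regularChrome:
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 " +
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
};

// Returns, for each named user agent, whether the pattern matches it.
function matchReport(
  pattern: RegExp,
  agents: Record<string, string>
): Record<string, boolean> {
  const report: Record<string, boolean> = {};
  for (const [name, ua] of Object.entries(agents)) {
    report[name] = pattern.test(ua);
  }
  return report;
}

console.log(matchReport(articlePattern, sampleUserAgents));
console.log(matchReport(anchoredPattern, sampleUserAgents));
```

The anchored pattern matches none of the samples because every Googlebot string starts with "Mozilla/5.0", which is the kind of mismatch that could leave Googlebot receiving streamed metadata.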

Watch out for

Overriding defaults without including them. Setting htmlLimitedBots replaces the entire default list. If you only add Googlebot without including Mediapartners-Google, AdsBot-Google, and the other defaults, those crawlers will start receiving streamed metadata instead of blocking metadata.

Live test vs. indexed page mismatch. The GSC live test runs a fresh render and shows the full DOM. The “View Crawled Page” view shows what Google actually stored during crawling. A page can pass the live test and still be indexed with missing metadata if the initial crawl hit a timeout or skipped the second rendering wave.
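For the first pitfall, overriding defaults without including them, one option is to build the pattern from explicit token lists so nothing is dropped by accident. This is a minimal sketch: defaultTokens holds only the crawlers named in this article and is not the complete Next.js default list, and buildBotPattern and escapeRegExp are hypothetical helpers.

```typescript
// Tokens named in this article. Incomplete by design: copy the real
// default entries from the Next.js documentation before using this.
const defaultTokens = ["Mediapartners-Google", "AdsBot-Google", "Google-PageRenderer"];

// Crawlers to add on top of the defaults.
const extraTokens = ["Googlebot"];

// Escape regex metacharacters so each token is matched literally.
function escapeRegExp(token: string): string {
  return token.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Join de-duplicated tokens into one alternation pattern.
function buildBotPattern(tokens: string[]): RegExp {
  const unique = Array.from(new Set(tokens));
  if (unique.length === 0) {
    // An empty pattern would match every user agent, so refuse to build it.
    throw new Error("buildBotPattern needs at least one token");
  }
  return new RegExp(unique.map(escapeRegExp).join("|"));
}

const combinedPattern = buildBotPattern([...defaultTokens, ...extraTokens]);
console.log(combinedPattern.source);
```

The resulting RegExp can be assigned to htmlLimitedBots in next.config.ts. Keeping defaults and additions in separate arrays makes it easier to see in review when a default entry goes missing.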