AI search cites size guides and support pages over product pages

Summary

An analysis of 25 leading ecommerce sites across five US subverticals found AI systems cite fit guides, return policies, tutorials, and support content far more than product or category pages.

Citation patterns vary sharply by vertical, and even category-leading retailers hold minority citation share for their own brand queries.

The data is directional, not causal, and each AI platform uses different retrieval layers. Still, it suggests that ecommerce teams over-indexed on PDP and feed optimization need to invest in the editorial and support content AI systems actually use as evidence sources.

What happened

A citation analysis by Aleyda Solis across 25 leading ecommerce sites in five US subverticals found that AI systems frequently cite pages that aren’t product detail pages (PDPs) or product listing pages (PLPs). The research, published May 12, used Semrush Enterprise AIO data to track which page types AI platforms cite when answering buyer queries in general marketplaces, beauty and skincare, fashion and apparel, consumer electronics, and sports and outdoors.

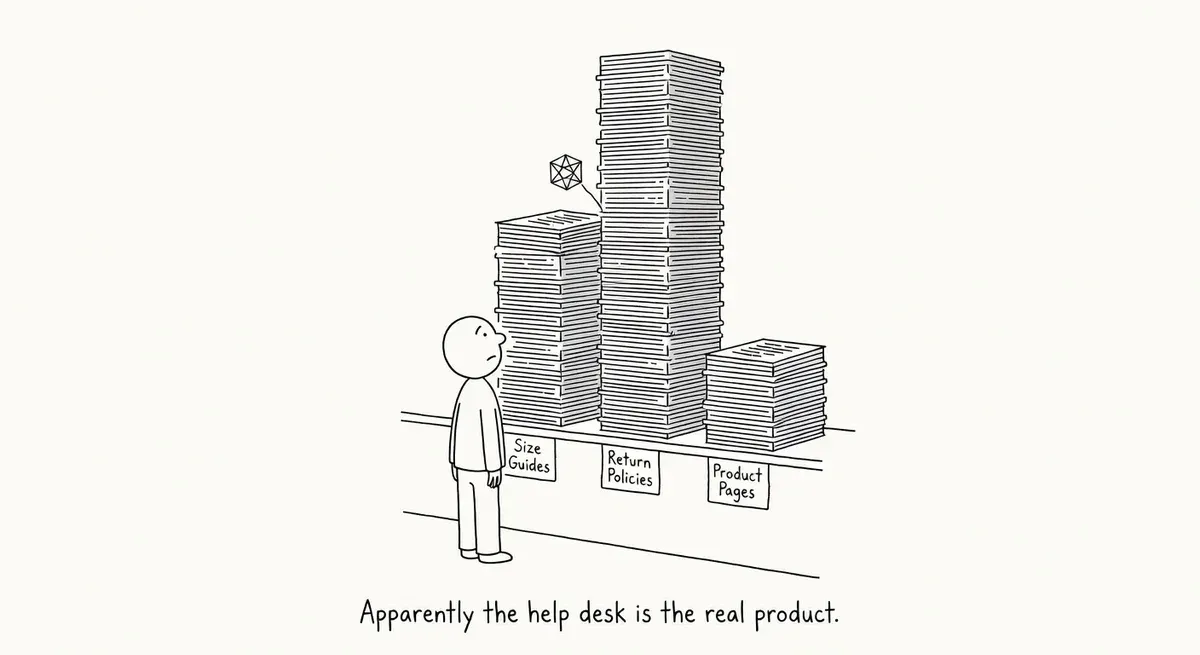

The most-cited ecommerce pages were often non-transactional types, including size and fit guides, support articles, return and shipping policies, buying guides, checklists, repair and recycling pages, store locators, tutorials, coupons, authentication pages, and educational content. These are pages most ecommerce teams treat as secondary SEO assets. In the citation data, they appear far more prominent than their traditional SEO priority would suggest.

Solis frames the core finding around buyer uncertainty. AI systems don’t just cite the page where the transaction happens. They cite whichever page, source, or third-party environment helps resolve the buyer’s uncertainty before, around, or after the purchase.

Why it matters

The conventional ecommerce AI search playbook focuses on PDPs, PLPs, product feeds, and structured data. Solis’s analysis suggests that playbook is incomplete. Product page schema and feed optimization serve multiple purposes, including rich results eligibility, Shopping integration, and entity disambiguation, but teams investing heavily in them may still be underinvesting in the editorial and support content that AI systems draw on as evidence sources.

A recent Ahrefs study reinforces this: JSON-LD schema showed no measurable citation uplift in AI search. Structured data and feeds matter for rich results and Shopping integration, but they don’t appear to drive AI citation visibility on their own.

The citation mix varies significantly by vertical. Beauty and skincare queries surface ingredient guides, safety content, and expert reviews. Fashion queries pull in fit guides and community discussions. Electronics queries cite warranty information, comparison guides, and repair documentation. A strategy that works for beauty is unlikely to transfer to general marketplaces, where peer-to-peer citations between competing sites are uniquely common.

Mid-market retailers may feel the sharpest impact. Many have built entire SEO operations around category page and product feed optimization. If AI systems are citing the “evidence layer” around a purchase decision rather than the transactional page itself, those teams may be concentrating budget on page types that AI systems cite less often.

One finding is particularly sobering. Even category-leading retailers hold a minority share of citations about themselves (Pattern 6 in the analysis). Large brands with strong domain presence still don’t dominate the citation landscape for their own products. Third-party reviews, expert media, and community sources fill the gap.

The practical implication is that ecommerce brands need to think about what sources an AI system would need to confidently answer a buyer’s decision question. That reframes the optimization target from “which page should rank” to “which evidence does the AI need to assemble an answer.”

What to do

Audit your vertical’s citation mix before changing anything. The analysis shows citation patterns differ sharply across subverticals. Run buyer-intent queries through ChatGPT, Gemini, and Perplexity for your product categories and note which sources and page types get cited. Don’t apply a blanket “citation diversity” strategy across all your categories.
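One way to make that audit repeatable is to paste the URLs each AI answer cites into a list and tally them by page type. The sketch below is a minimal illustration, not a method from the analysis: the keyword rules, the page-type labels, and the example.com URLs are all assumptions, and URL-path matching is only a rough proxy for what a page actually contains.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical URL-path keywords per page type. Order matters: the first
# matching rule wins, so more specific types are listed first.
PAGE_TYPE_RULES = [
    ("size_fit_guide", ("size-guide", "size-chart", "fit-guide")),
    ("policy", ("returns", "shipping", "warranty")),
    ("support", ("support", "help", "faq")),
    ("editorial", ("guide", "blog", "how-to", "tutorial")),
    ("product", ("/p/", "/product/", "/dp/")),
    ("category", ("/c/", "/category/", "/collections/")),
]


def classify_url(url: str) -> str:
    """Guess a page type from the URL path alone (a heuristic, not ground truth)."""
    path = urlparse(url).path.lower()
    for page_type, keywords in PAGE_TYPE_RULES:
        if any(keyword in path for keyword in keywords):
            return page_type
    return "other"


def citation_mix(cited_urls):
    """Return each page type's share of the cited URLs as a fraction of the total."""
    counts = Counter(classify_url(url) for url in cited_urls)
    total = sum(counts.values())
    return {page_type: n / total for page_type, n in counts.items()} if total else {}


# Made-up citations collected by hand for one buyer-intent query.
sample_citations = [
    "https://example.com/help/size-guide",
    "https://example.com/product/blue-shirt",
    "https://example.com/returns",
    "https://example.org/size-guide/denim",
]
sample_mix = citation_mix(sample_citations)
```

Running this per product category, rather than once across the whole site, keeps the per-vertical differences visible.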

Map buyer uncertainty types to content gaps. Identify what creates doubt for buyers in your specific vertical. For fashion, that might be fit and sizing. For electronics, it might be warranty terms and comparison specs. For beauty, ingredient safety and expert endorsement. Build or improve content that directly resolves those uncertainties.

A large fashion retailer might discover that AI systems cite competitor fit guides and community forum discussions more than detailed product specs. The response isn’t more product schema. It’s building a fit-by-size resource center and making customer review discussions indexable.

Don’t abandon PDP and feed optimization. Solis is clear that product pages and structured data still matter. The argument is that they’re necessary but not sufficient. Broadening your content investment doesn’t mean gutting your existing technical foundation.

Prioritize ruthlessly given content production capacity. Large ecommerce sites can’t suddenly produce guides, fit content, and video transcripts across every category. Editorial bandwidth, subject-matter expertise, and CMS workflow throughput all cap how fast new sections can ship. Pick the 2–3 uncertainty types that matter most for your top revenue categories and build those first. Validate with AI search monitoring before scaling.

Separately, if you do launch large new content sections, confirm they’re crawlable. Check that low-value URLs (faceted duplicates, thin tag pages) aren’t diluting crawl allocation. That’s the actual crawl-budget concern, distinct from publishing throughput.
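A quick first pass at the crawlability check is to test new URLs against your robots.txt rules. The sketch below uses Python's standard-library `urllib.robotparser`; the robots.txt content and the example.com URLs are invented for illustration. It covers only robots.txt rules, so noindex tags, canonical tags, and rendering problems still need a separate check.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls, user_agent: str = "*"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules: drafts and internal search results are blocked.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /guides/drafts/
Disallow: /search
"""

sample_urls = [
    "https://example.com/guides/fit/denim",
    "https://example.com/guides/drafts/denim",
    "https://example.com/search?color=blue",
]
sample_blocked = blocked_urls(SAMPLE_ROBOTS_TXT, sample_urls)
```

The same function works in the other direction: pass it the faceted and tag URLs you expect to be excluded and confirm they come back in the blocked list.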

Build a prompt testing framework by subvertical. Solis recommends testing representative prompts by category to see what gets cited. Track citations over time using Semrush’s AIO tools or manual spot checks across AI platforms. Note that Google Search Console does not surface AI search citation data, so dedicated monitoring outside of GSC is needed.
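For manual spot checks, a simple log of which URL was cited for which prompt is enough to track own-domain citation share over time. The sketch below is one possible shape for that log, assuming records are collected by hand or exported from a monitoring tool. The `CitationRecord` fields, the sample prompt, the dates, and the domains are all hypothetical, and nothing here calls any vendor API.

```python
from collections import defaultdict
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class CitationRecord:
    run_date: str     # ISO date of the test run, e.g. "2025-06-01"
    subvertical: str  # e.g. "fashion"
    platform: str     # e.g. "ChatGPT"
    prompt: str       # the buyer-intent prompt that was tested
    cited_url: str    # one URL cited in the answer


def own_domain_share(records, own_domain: str):
    """Map (run_date, subvertical) to the fraction of citations on own_domain."""
    totals = defaultdict(int)
    own = defaultdict(int)
    for record in records:
        key = (record.run_date, record.subvertical)
        totals[key] += 1
        host = urlparse(record.cited_url).netloc.lower()
        # Count the bare domain and any subdomain (www., help., and so on).
        if host == own_domain or host.endswith("." + own_domain):
            own[key] += 1
    return {key: own[key] / totals[key] for key in totals}


# Made-up log: one prompt, four citations, one of them on the retailer's own site.
prompt = "which jeans fit a 32 inch waist"
sample_records = [
    CitationRecord("2025-06-01", "fashion", "ChatGPT", prompt, "https://help.example.com/fit-guide"),
    CitationRecord("2025-06-01", "fashion", "ChatGPT", prompt, "https://forum.example.org/t/denim-sizing"),
    CitationRecord("2025-06-01", "fashion", "Perplexity", prompt, "https://reviews.example.net/best-jeans"),
    CitationRecord("2025-06-01", "fashion", "Perplexity", prompt, "https://competitor.example.org/size-chart"),
]
sample_share = own_domain_share(sample_records, "example.com")
```

Keeping the prompt set fixed between runs matters more than the storage format, since a changed prompt makes the trend line meaningless.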

Watch out for

Citation patterns are not direct optimization levers. The research identifies what AI systems cite, not what causes them to cite it. Each platform (ChatGPT, Perplexity, Gemini) uses different retrieval pipelines, and the patterns observed today may reflect training data composition or retrieval index bias rather than anything a team can reproduce through optimization. Treat the citation data as directional, not causal.

ROI measurement is genuinely hard. GSC doesn’t surface AI citation data. Measuring whether your new fit guide or ingredient transparency page actually drives AI search visibility requires third-party AI monitoring tools or manual testing across Gemini, ChatGPT, and Perplexity. Budget for measurement alongside content creation.

Minority citation share can invite false hope. Even large retailers hold minority citation share for their own brand queries. Smaller brands may optimize for the same citation patterns they see competitors earning and still not get selected as evidence sources. Established trust and expertise signals, brand prominence, and entity recognition likely still play a role in which sources AI systems choose to cite.