URL paths are semantic inputs for RAG pipelines, not just SEO

Summary

URL structures now function as semantic inputs for AI retrieval systems, not just SEO signals. RAG pipelines, LLMs, and zero-shot classifiers parse URL paths as meaningful text when deciding what to retrieve and cite.

Descriptive paths like /resources/seo/url-structure/ help AI systems understand content hierarchy and topic before reading the page body, while meaningless IDs force the model to work harder and introduce ambiguity. Audit your URLs for semantic clarity and structure new content with topic-hierarchical paths that mirror query patterns.

What happened

URL structures are no longer just an SEO hygiene factor. They now function as semantic inputs for AI retrieval systems, according to a new analysis from Sophie Brannon at Search Engine Journal. The piece argues that RAG pipelines, web-connected LLMs, and zero-shot classification models all parse URL path segments as meaningful text strings when deciding what content to retrieve and cite.

The traditional SEO advice (short paths, hyphens, target keyword) still applies. But Brannon’s argument is that it’s incomplete for a world where ChatGPT, Perplexity, Claude, and Google’s AI Overviews are retrieving and synthesizing content differently from classic crawlers.

Why it matters

Traditional search engines can infer context from a page even when the URL is a meaningless ID string. AI retrieval systems are less forgiving. Brannon outlines three mechanisms through which URL structure matters to LLMs:

- RAG chunking and retrieval. Developer-built RAG systems crawl URLs, convert page content into searchable chunks, and store them as vector embeddings. The URL path is part of the text that gets processed. A descriptive path like /resources/seo/url-structure-ai-retrieval/ gives the retrieval layer explicit hierarchy and topic signals before it even reads the page body.

- URL context grounding (Gemini-specific). Google’s Gemini uses a technique called URL context grounding to pull direct information from individual URLs without full RAG processing. The goal is to improve factual accuracy by analyzing content and data at specific URLs. Descriptive paths help Gemini understand what a URL covers before combining information from multiple sources.

- Zero-shot classification. Models can categorize a webpage’s purpose without task-specific training data by analyzing semantic cues in the URL string itself. The model maps URL patterns to predefined categories using cosine similarity or prompt-based reasoning. A URL that communicates nothing forces the model to work harder and introduces ambiguity in categorization.
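To make the first and third mechanisms concrete, here is a minimal Python sketch. It is not how any particular RAG stack or classifier works: the function names, the `CATEGORIES` descriptions, and the example.com URLs are all made up for illustration, and plain word counts stand in for the vector embeddings a real system would use. It shows a URL path being prepended to a content chunk, and a URL being mapped to a predefined category by cosine similarity.

```python
from __future__ import annotations

import math
import re
from collections import Counter
from urllib.parse import urlparse


def path_tokens(url: str) -> list[str]:
    """Split a URL path into lowercase word tokens."""
    path = urlparse(url).path.lower()
    return [t for t in re.split(r"[^a-z0-9]+", path) if t]


def chunk_with_url_context(url: str, chunk: str) -> str:
    """Prefix a content chunk with the readable path so both get embedded together."""
    segments = [s.replace("-", " ") for s in urlparse(url).path.split("/") if s]
    return " > ".join(segments) + "\n" + chunk


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


# Hypothetical category descriptions; a real classifier would embed these.
CATEGORIES = {
    "seo guide": "seo url structure crawl canonical resources guides",
    "product page": "shop product buy price cart",
}


def classify_url(url: str) -> tuple[str | None, float]:
    """Return the best-matching category, or None when the path gives no signal."""
    tokens = Counter(path_tokens(url))
    scores = {
        label: cosine(tokens, Counter(desc.split()))
        for label, desc in CATEGORIES.items()
    }
    best = max(scores, key=scores.get)
    return (best, scores[best]) if scores[best] > 0 else (None, 0.0)
```

With these toy categories, `classify_url("https://example.com/resources/seo/url-structure-ai-retrieval/")` lands on "seo guide", while `classify_url("https://example.com/p?id=4821")` returns no category at all, because the path contains nothing to match against.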

Beyond retrieval mechanics, there’s a user-facing reason too. When an AI system cites a source, the URL is often visible alongside the excerpt. A clean, descriptive path builds trust in the same way it does in a SERP snippet. A path like /p?id=4821 does not.

The practical implication is that URL path segments now serve as a secondary content layer. They communicate hierarchy, topic, and specificity independently of the page title, H1, or other metadata.

What to do

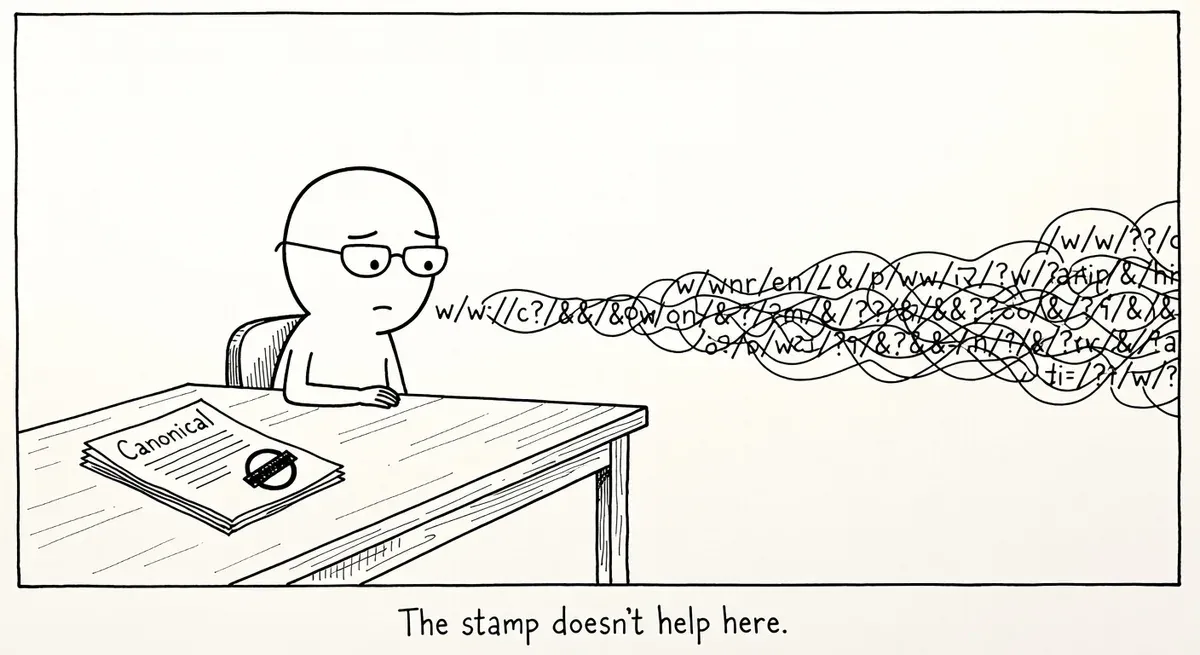

Audit existing URL structures for semantic clarity. Check whether your most important pages have paths that communicate topic and hierarchy through readable words. A path like /resources/seo/url-structure-ai-retrieval/ tells both humans and machines what the page covers. A path like /blog/post-4821 does not.
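A rough first pass at this audit can be scripted. The sketch below flags URLs whose paths contain few readable words. The `GENERIC` stoplist, the three-letter minimum, and the `min_words` threshold are arbitrary assumptions to tune for your own site, not rules from Brannon's analysis.

```python
import re
from urllib.parse import urlparse

# Hypothetical stoplist of path words that carry no topic information.
GENERIC = {"p", "post", "page", "id", "node", "item"}


def readable_words(url: str) -> list[str]:
    """Return alphabetic words of 3+ letters in the path, minus generic ones."""
    path = urlparse(url).path.lower()
    words = re.findall(r"[a-z]+", path)
    return [w for w in words if len(w) >= 3 and w not in GENERIC]


def audit(urls: list[str], min_words: int = 2) -> list[str]:
    """Return the URLs whose paths have fewer than min_words readable words."""
    return [u for u in urls if len(readable_words(u)) < min_words]
```

Run against the two example paths above, `audit` keeps /resources/seo/url-structure-ai-retrieval/ off the list and flags /blog/post-4821, since "blog" is the only topical word left once "post" and the digits are discarded.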

Use folder depth to signal content hierarchy. Brannon frames URL hierarchy as a way to reinforce topical authority. If your domain covers SEO, structure your paths so that category and subcategory relationships are visible: /guides/technical-seo/crawl-budget/ rather than /guides/crawl-budget/. RAG systems can use folder nesting to infer content provenance.

Prioritize question-based and long-tail content paths. AI systems handling specific queries look for precise matches. A URL path that mirrors the query structure (e.g., /faq/how-to-set-canonical-tags/) gives the retrieval system an additional relevance signal before it processes the page content.
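One simple way to sanity-check how closely a path mirrors a target query is word overlap. The sketch below uses Jaccard similarity as a stand-in. That is an assumption for illustration, since real retrieval systems score relevance with embeddings and many other signals, and the function names are hypothetical.

```python
import re
from urllib.parse import urlparse


def words(text: str) -> set[str]:
    """Lowercase alphanumeric words in a string."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def path_query_overlap(url: str, query: str) -> float:
    """Jaccard overlap between the words in a URL path and the words in a query."""
    path_words = words(urlparse(url).path)
    query_words = words(query)
    union = path_words | query_words
    return len(path_words & query_words) / len(union) if union else 0.0
```

For the query "how to set canonical tags", the path /faq/how-to-set-canonical-tags/ shares five of six distinct words, while an ID-based path shares none.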

Don’t break existing URLs to fix this. If your current URLs rank well and have backlinks, restructuring them creates redirect chains and risks losing link equity. Apply these principles to new content and new sections. For existing content, the on-page signals (title, headings, body) still carry more weight than the path alone.

Check your AI-facing URLs specifically. Look at which URLs are being crawled by AI bots (GPTBot, ClaudeBot, PerplexityBot) in your server logs. If those bots are hitting your most opaque URL patterns, those pages are the highest-priority candidates for path improvements on future versions or redesigns.
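If your server writes access logs in the common "combined" format, a short script can surface which paths these bots request. The sketch below assumes that log format and matches the bot names as substrings of the user-agent string. The regex and function name are illustrative, so adjust them to your own log layout.

```python
import re
from collections import Counter

AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Assumes combined log format: "METHOD path protocol" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def ai_bot_hits(lines):
    """Count requests per (bot, path) for known AI crawler user agents."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        user_agent = match.group("ua").lower()
        for bot in AI_BOTS:
            if bot.lower() in user_agent:
                hits[(bot, match.group("path"))] += 1
                break
    return hits
```

Feeding it an open log file, for example `ai_bot_hits(open("access.log"))` with a hypothetical file name, and sorting the result with `most_common()` gives a quick list of the paths AI crawlers request most, which you can then compare against your opaque URL patterns.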

Watch out for

Over-nesting URL paths. Adding five folder levels for semantic clarity backfires. Deeply nested paths create crawl friction and dilute link equity. Two to three meaningful path segments is the sweet spot.
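A quick depth check can catch over-nested paths before they ship. This sketch counts non-empty path segments and flags anything beyond three, taking the upper end of the range above as the default. The function names and the example.com URLs are illustrative.

```python
from urllib.parse import urlparse

MAX_SEGMENTS = 3  # upper end of the "two to three" guideline above


def path_depth(url: str) -> int:
    """Number of non-empty segments in the URL path."""
    return len([s for s in urlparse(url).path.split("/") if s])


def over_nested(urls: list[str], max_segments: int = MAX_SEGMENTS) -> list[str]:
    """Return the URLs whose paths are deeper than max_segments."""
    return [u for u in urls if path_depth(u) > max_segments]
```

Here /guides/technical-seo/crawl-budget/ has a depth of three and passes, while a five-level path would be flagged for review.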

Assuming URL structure alone drives AI citation. URL paths are one signal among many. Page content quality, structured data, and domain authority still matter more. Treating URL restructuring as a silver bullet for AI visibility will disappoint.