Ahrefs study finds JSON-LD schema does not boost AI citations

Summary

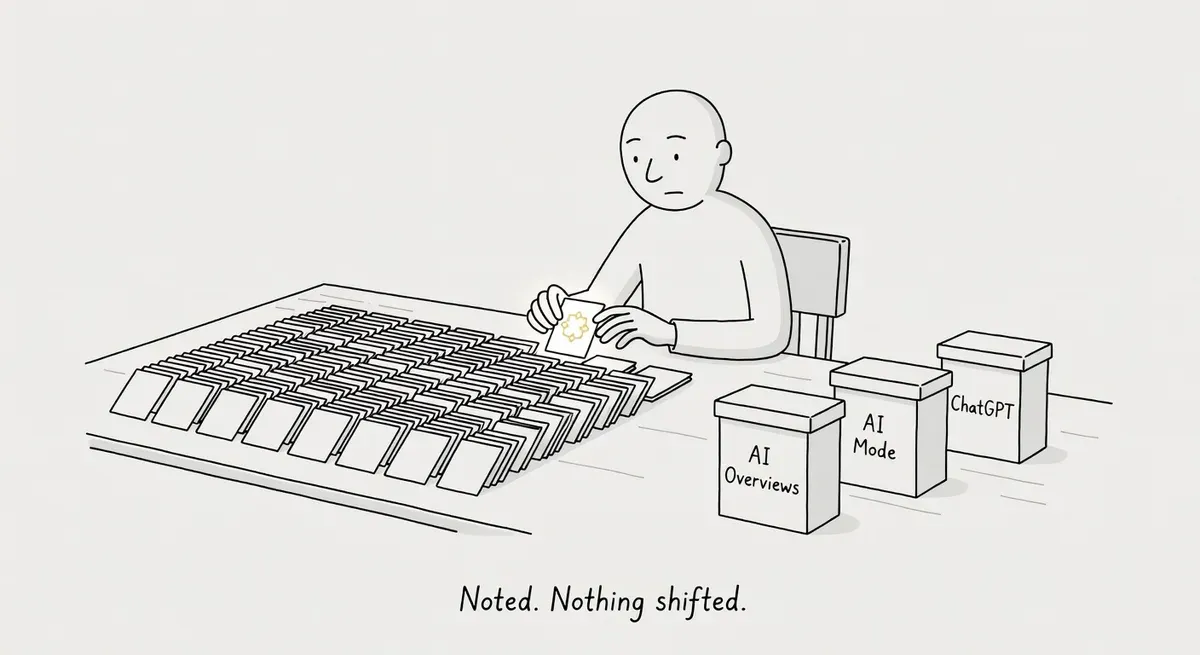

An Ahrefs study tracking 1,885 pages that added JSON-LD schema found no citation uplift across Google AI Overviews, AI Mode, or ChatGPT.

Schema still earns traditional rich results, but the data does not support investing in it for AI citation gains.

The study tested retrieval agents that pick sources to cite. It did not test action-oriented agents, which land on your page and need to parse it; for those agents, structured data serves as a machine-readable interface rather than a ranking signal.

What happened

Adding JSON-LD schema markup to web pages did not increase citations in AI search results, according to an Ahrefs study published May 11 that quickly gained traction in the r/TechSEO community. The study tracked 1,885 pages that added JSON-LD schema between August 2025 and March 2026, matched them against 4,000 control pages, and measured citation changes across Google AI Overviews, AI Mode, and ChatGPT.

The results come from a matched difference-in-differences (DiD) test, a before-and-after comparison that isolates the effect of one change by comparing how treated pages moved against how a matched control group moved over the same period:

- Google AI Overviews: −4.6% citation change. Small but statistically significant decline relative to controls. Both groups were declining, but treated pages fell slightly faster.

- Google AI Mode: +2.4% citation change. Statistically indistinguishable from zero.

- ChatGPT: +2.2% citation change. Statistically indistinguishable from zero.
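To make the DiD arithmetic concrete, here is a minimal Python sketch using invented citation counts (not Ahrefs' data). It expresses the estimate as the treated group's percent change minus the control group's percent change. The article does not describe Ahrefs' exact estimator, which may differ (for example, by using regression with matching weights or log counts), and `did_relative` is a hypothetical helper.

```python
"""Toy difference-in-differences (DiD) calculation.

All numbers are hypothetical and chosen only to illustrate the arithmetic;
they are not the Ahrefs study's data.
"""


def did_relative(treated_pre: float, treated_post: float,
                 control_pre: float, control_post: float) -> float:
    """Return treated % change minus control % change, in percentage points."""
    treated_change = (treated_post - treated_pre) / treated_pre
    control_change = (control_post - control_pre) / control_pre
    return (treated_change - control_change) * 100


# Hypothetical average citations per page before and after schema was added.
# Both groups decline; the treated group declines faster, so the estimate is
# negative even though schema did not "cause" the overall decline.
estimate = did_relative(treated_pre=10.0, treated_post=8.0,
                        control_pre=10.0, control_post=8.5)
print(f"{estimate:+.1f} percentage points")
```

With these made-up inputs the treated pages fall 20% and the controls fall 15%, so the sketch reports roughly -5 points: the same shape as the AI Overviews result, where a shared decline is netted out and only the extra drop is attributed to the change.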

The study’s conclusion: “We can’t tell whether the schema did a tiny bit of good or nothing at all.”

Why it matters

A common assumption in the AI search optimization space is that structured data helps AI systems find and cite your content. The Ahrefs data challenges that assumption directly. Across nearly 5,900 total pages and three major AI citation sources, schema markup produced no reliable uplift.

The distinction between schema’s role in traditional search and AI search is the key takeaway here. Google’s own documentation on structured data frames its value around rich results and helping Google “understand what a page is about.” None of that documentation promises AI citation benefits.

Schema still makes pages eligible for rich snippets in traditional SERPs, including star ratings on supported parent types, breadcrumbs, and product details.

Teams that retrofitted schema across large content sets specifically to win AI citations now face a poor return on that investment. The −4.6% AI Overviews result is particularly notable. While the study authors caution that both groups were declining together, treated pages declined faster. At minimum, schema did not protect against citation loss.

The finding fits what we know about how LLMs process pages. When transformer-based models are trained on raw web crawls or fed full HTML during retrieval, JSON-LD <script> blocks can be part of what they process alongside the rest of the page content. Schema is not literally invisible to them, though text-extraction and retrieval pipelines may strip or deprioritize script tags before the model sees the content.
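A minimal sketch of those two paths, using only Python's standard-library `html.parser`: one view keeps JSON-LD payloads alongside the visible text (roughly what a model fed full HTML encounters), the other drops `<script>` content entirely, as a pipeline that strips script tags would. `PageReader`, `full_view`, and `stripped_view` are hypothetical names, and real crawl and retrieval pipelines are far more involved.

```python
import json
from html.parser import HTMLParser


class PageReader(HTMLParser):
    """Separate a page's visible text from its JSON-LD script blocks.

    Simplified sketch: real pipelines differ, and some keep <script>
    content while others drop it before a model sees the page.
    """

    def __init__(self) -> None:
        super().__init__()
        self.text_parts: list = []     # visible text nodes
        self.jsonld_blocks: list = []  # parsed application/ld+json payloads
        self._in_script = False
        self._is_jsonld = False
        self._buffer: list = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            self._in_script = True
            self._is_jsonld = dict(attrs).get("type") == "application/ld+json"
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "script":
            if self._is_jsonld:
                self.jsonld_blocks.append(json.loads("".join(self._buffer)))
            self._in_script = False
            self._is_jsonld = False

    def handle_data(self, data):
        if self._in_script:
            self._buffer.append(data)  # script body, JSON-LD or otherwise
        elif data.strip():
            self.text_parts.append(data.strip())


html = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Trail Shoe"}
</script>
</head><body><h1>Trail Shoe</h1><p>Lightweight running shoe.</p></body></html>"""

reader = PageReader()
reader.feed(html)
reader.close()

# A pipeline fed full HTML still encounters the schema payload...
full_view = reader.text_parts + [json.dumps(b) for b in reader.jsonld_blocks]
# ...while one that strips <script> tags passes along only the visible text.
stripped_view = list(reader.text_parts)
print(full_view)
print(stripped_view)
```

Either way, the sketch only shows whether the markup reaches the model. It says nothing about how much weight the model gives it, which is the part the Ahrefs data speaks to.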

Whether models weight that markup vocabulary the way traditional crawlers do is a separate question. The Ahrefs citation data suggests they do not, at least not enough to move the needle.

Our earlier piece on why schema markup does not influence LLM parsing reaches the same conclusion from the mechanism side, and the Ahrefs study is the matching empirical result. Schema.org vocabulary still gives machines a structured way to describe entities, but AI retrieval systems appear to weight other signals (content quality, topical authority, source reputation) far more heavily when choosing which pages to cite.

But “AI” is not one thing, and the study tested only one slice of it. Ahrefs measured retrieval agents: systems that search the web, build an answer, and optionally cite sources. Other AI systems interact with pages differently.

Training crawlers ingest HTML in bulk to build model knowledge. Action-oriented agents (browser assistants, booking systems, tool-using AI) land on a page and need to understand its structure to complete a task.

Google’s agentic booking already skips search results entirely, querying page data directly. For those agents, structured data is not a ranking signal. It is a machine-readable interface.
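For an action-oriented agent, reading structured data looks less like ranking and more like calling an API. The sketch below assumes the agent has already parsed a page's JSON-LD into a Python dict and pulls out the fields it would act on. `read_offer` is a hypothetical helper, not part of any agent framework, and it only handles the simple single-Product shape.

```python
import json
from typing import Optional


def read_offer(jsonld: dict) -> Optional[dict]:
    """Extract the fields a shopping or booking agent might act on.

    Hypothetical helper: assumes a single schema.org Product with an Offer.
    Real pages may use @graph, several products, or no markup at all.
    """
    types = jsonld.get("@type")
    types = types if isinstance(types, list) else [types]
    if "Product" not in types:
        return None
    offer = jsonld.get("offers")
    if isinstance(offer, list):  # schema.org allows one Offer or a list of them
        offer = offer[0] if offer else None
    if not isinstance(offer, dict):
        return None
    availability = str(offer.get("availability", ""))
    return {
        "name": jsonld.get("name"),
        "price": float(offer["price"]) if "price" in offer else None,
        "currency": offer.get("priceCurrency"),
        # Accept "https://schema.org/InStock", "http://schema.org/InStock", or "InStock".
        "in_stock": availability.rsplit("/", 1)[-1] == "InStock",
    }


page_jsonld = json.loads("""
{
  "@context": "https://schema.org",
  "@type": "Product",
  "name": "Trail Shoe",
  "offers": {
    "@type": "Offer",
    "price": "89.00",
    "priceCurrency": "USD",
    "availability": "https://schema.org/InStock"
  }
}
""")

result = read_offer(page_jsonld)
print(result)  # prints {'name': 'Trail Shoe', 'price': 89.0, 'currency': 'USD', 'in_stock': True}
```

Nothing here depends on rankings or citations: if the markup is missing or malformed, the agent simply cannot read the price or availability and has to fall back on scraping the visible page.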

The question shifts from “does schema help me get cited?” to “can an agent on my page understand what it offers?” That is a different question from the one Ahrefs answered, and likely a more consequential one as agent runtimes handle more of how AI reads the web.

One unanswered question from the study: does schema type matter? HowTo markup may behave differently than Product or NewsArticle in AI citation contexts. The study tracked pages that “added JSON-LD schema” but did not break results down by schema vocabulary type. Practitioners working with specific schema types should not assume uniform behavior.
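If you want to check type-level behavior on your own site, a first step is to segment tracked pages by the schema types they declare. This sketch assumes each page's JSON-LD is already parsed into Python objects. `schema_types` and `segment_by_type` are hypothetical helpers and the URLs are placeholders; citation counts per segment would come from whatever tracking you already use.

```python
from collections import defaultdict
from typing import Iterable


def schema_types(jsonld_blocks: Iterable) -> set:
    """Collect every @type declared in a page's JSON-LD, including nested
    nodes and @graph arrays."""
    found: set = set()
    stack = list(jsonld_blocks)
    while stack:
        node = stack.pop()
        if isinstance(node, list):
            stack.extend(node)
        elif isinstance(node, dict):
            declared = node.get("@type")
            if isinstance(declared, str):
                found.add(declared)
            elif isinstance(declared, list):
                found.update(t for t in declared if isinstance(t, str))
            # Descend into nested objects such as offers or @graph.
            stack.extend(v for v in node.values() if isinstance(v, (dict, list)))
    return found


def segment_by_type(pages: dict) -> dict:
    """Map each schema type to the list of URLs that declare it."""
    segments = defaultdict(list)
    for url, blocks in pages.items():
        for schema_type in sorted(schema_types(blocks)):
            segments[schema_type].append(url)
    return dict(segments)


# Placeholder pages with already-parsed JSON-LD blocks.
pages = {
    "https://example.com/shoe": [{"@type": "Product", "offers": {"@type": "Offer"}}],
    "https://example.com/guide": [{"@graph": [{"@type": "HowTo"}, {"@type": "BreadcrumbList"}]}],
}
segments = segment_by_type(pages)
print(segments)
```

A page can land in several segments (a Product page with a nested Offer counts toward both), so compare segments side by side rather than treating them as mutually exclusive groups.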

What to do

Do not remove existing schema. The study found that schema didn’t help AI citations; it does not show that schema hurts traditional search performance. Rich snippets, merchant listing eligibility, and breadcrumb display in search results all still draw on structured data.

Stop justifying new schema projects with AI citation gains. If the business case for a schema implementation rests on “AI Overviews will cite us more,” the Ahrefs data does not support that claim. Reframe schema ROI around traditional SERP features instead.

Audit your AI search strategy separately from your schema strategy. AI citation visibility appears to depend on factors other than markup. Content depth, topical authority, and source reputation are more likely drivers. If you are tracking AI citations in Google AI Overviews or ChatGPT, test those variables independently rather than bundling them with schema work.

Keep implementing schema where it has proven value. Product schema drives merchant listings. Star ratings require review markup nested within a supported parent type such as Product or Recipe. Standalone review snippets are no longer broadly supported by Google for most page types.
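As an illustration of the nesting requirement, this Python sketch emits Product JSON-LD with the `AggregateRating` inside the Product rather than standing alone on the page. `product_jsonld` is a hypothetical helper, the property values are placeholders, and Google's Product and review snippet documentation remain the authority on which properties are required or recommended.

```python
import json


def product_jsonld(name: str, rating_value: float, review_count: int,
                   price: str, currency: str) -> str:
    """Return Product JSON-LD with the star rating nested in the Product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        # The rating sits inside a supported parent type (Product) rather than
        # appearing as a standalone Review or AggregateRating on the page.
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
snippet = product_jsonld("Trail Shoe", 4.6, 128, "89.00", "USD")
print('<script type="application/ld+json">')
print(snippet)
print("</script>")
```

Generating the markup from the same data that renders the page keeps the rating and price in the JSON-LD consistent with what users see, which is what Google's guidelines ask for.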

NewsArticle, Article, and BlogPosting schema are all recognized types for Google’s news-related features, including Top Stories, though structured data alone does not determine eligibility. These are documented, measurable outcomes that the Ahrefs study does not contradict.

Invest in machine-readable page structure for the agents that actually visit your site. The agents Ahrefs tested never land on your page. They pick citations from an index. The agents that do visit (browser assistants, booking flows, tool-using AI) need to parse your page to act on it.

Semantic HTML, stable DOM structure, accessible names on interactive elements, and model-context protocols like WebMCP give them what they need. Google now tells developers to build for AI agents as a distinct audience. Many browser agents read the accessibility tree rather than your rendered pixels. This is where structured data investment has a direct, testable payoff that the Ahrefs study does not address.
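One testable piece of this is accessible names. The sketch below uses Python's `html.parser` to flag links and buttons that expose no obvious name to the accessibility tree. It is a rough heuristic under stated assumptions (it checks only text content, `aria-label`, `aria-labelledby`, `title`, and `img` alt text) and not an implementation of the W3C accessible name computation; `UnnamedControlFinder` is a hypothetical name, and a browser's accessibility inspector or an audit tool gives the authoritative answer.

```python
from html.parser import HTMLParser


class UnnamedControlFinder(HTMLParser):
    """Flag <button> and <a href> elements with no obvious accessible name.

    Rough heuristic, not the W3C accessible-name algorithm.
    """

    NAMING_ATTRS = ("aria-label", "aria-labelledby", "title")

    def __init__(self) -> None:
        super().__init__()
        self.unnamed: list = []  # raw start tags of controls with no name
        self._open: list = []    # stack of controls currently being parsed

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "button" or (tag == "a" and "href" in attrs):
            named = any((attrs.get(a) or "").strip() for a in self.NAMING_ATTRS)
            self._open.append({"tag": tag, "named": named,
                               "desc": self.get_starttag_text()})
        elif tag == "img" and self._open and (attrs.get("alt") or "").strip():
            self._open[-1]["named"] = True  # image alt text names its parent control

    def handle_data(self, data):
        if self._open and data.strip():
            self._open[-1]["named"] = True  # visible text inside the control

    def handle_endtag(self, tag):
        if self._open and self._open[-1]["tag"] == tag:
            control = self._open.pop()
            if not control["named"]:
                self.unnamed.append(control["desc"])


html = """
<nav>
  <a href="/cart"><img src="cart.svg" alt=""></a>
  <a href="/help">Help</a>
  <button aria-label="Close dialog"><svg></svg></button>
  <button><span class="icon"></span></button>
</nav>
"""

finder = UnnamedControlFinder()
finder.feed(html)
finder.close()
for control in finder.unnamed:
    print(control)
```

Icon-only controls like the cart link and the last button are exactly the elements an agent working from the accessibility tree sees as nameless, so they are a reasonable first target for fixes.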

Watch out for

Assuming all schema types behave the same. The study measured pages that added JSON-LD broadly. It did not publish per-type breakdowns. HowTo, Recipe, or Product schema could behave differently in AI citation contexts. Treat the aggregate finding as directional, not type-specific.

Confusing “no citation lift” with “AI doesn’t read schema.” When LLMs are trained on web data or fed full HTML, JSON-LD script blocks are part of what they process, though some retrieval pipelines strip script tags before the model sees the content. Either way, the Ahrefs finding is that whatever these systems do with schema markup, it did not translate into more citations.

Whether schema is under-weighted or simply not used as a citation-selection signal remains unconfirmed. See our companion piece on how LLMs parse page content for the mechanism-side argument.

Applying the citation finding to all AI use cases. The study tested retrieval agents that pick sources from an index. It did not test agents that land on your page and try to use it. Schema and structured markup may matter far more when the AI is on your page than when it is deciding whether to link to it. Do not use this study to deprioritize machine-readability work for agentic use cases.