Google's quality sampling kills scaled content, not AI detection

Summary

Google's quality sampling drops scaled content batches, not because of AI detection but because representative URL samples fail quality checks after the freshness boost fades. Quality thresholds shift over time as better content gets published, so sites that publish large batches without strong editorial backing get culled at scale. Audit crawl patterns by URL group and focus on post-publication signals like internal linking and updates rather than production volume.

What happened

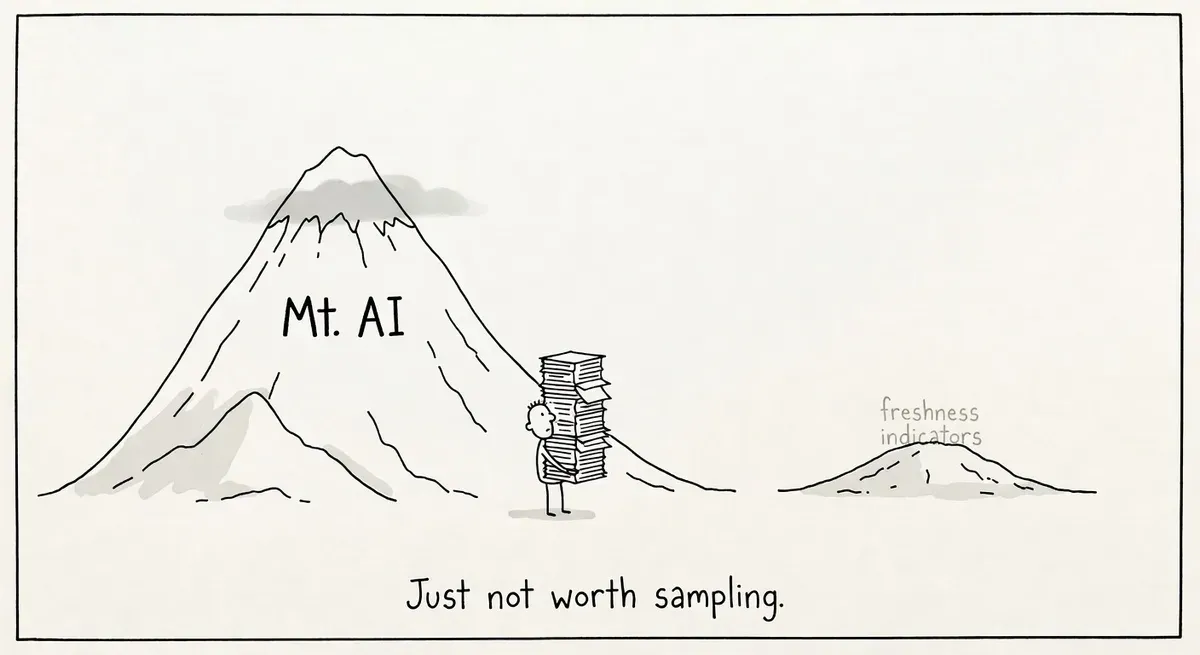

The viral “Mt. AI” traffic pattern, where scaled AI content surges then collapses, keeps showing up in LinkedIn case studies. Dan Taylor argues in Search Engine Journal that the collapse has nothing to do with AI detection. The real mechanism is Google’s quality sampling of new URL batches combined with its shifting quality threshold.

Taylor points to an ongoing brand case study shared by Martin Sean Fennon on LinkedIn. The content was scaled through AI and showed the familiar pattern: initial traffic spike followed by steep decline. Taylor then shows the same pattern occurring with non-AI content from a brand launched in January 2021, well before the current wave of AI-generated pages.

Why it matters

The distinction between “Google penalizes AI content” and “Google samples new URLs and drops the ones that fail quality checks” changes how practitioners should respond to traffic losses.

When a site publishes a large batch of new URLs, Google increases crawl resources to process them. The URLs receive a freshness boost during initial processing. Google then selects a representative sample of those new URLs, sometimes grouped by a URL pattern such as a subfolder, and monitors how users engage with them.

If the sampled URLs perform poorly after the freshness boost fades, the remaining scaled content struggles to gain traction. Google pulls back crawl resources and drops pages from the index. The early traffic came from the freshness boost; the content never held its rankings on its own merits.
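To make the sample-then-generalize logic concrete, here is a toy model. It is not Google's system, and every input is hypothetical: each URL gets a made-up 0..1 "engagement score" measured after the freshness boost, URLs are grouped by first path segment, only a random sample per group is scored, and the verdict on that sample is applied to the whole group.

```python
import random
from statistics import mean
from urllib.parse import urlparse

def first_segment(url):
    """Return the first path segment of a URL as '/segment/' ('/' for the root)."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return f"/{segments[0]}/" if segments else "/"

def evaluate_batch(pages, sample_size, threshold, seed=0):
    """Toy sample-then-generalize model; NOT Google's real system.

    pages: dict of URL -> hypothetical engagement score (0..1) measured
    after the freshness boost has faded.
    Returns the set of URLs whose group passed, i.e. kept resources.
    """
    rng = random.Random(seed)
    groups = {}
    for url in pages:
        groups.setdefault(first_segment(url), []).append(url)

    kept = set()
    for urls in groups.values():
        # Only a sample of each group is scored...
        sample = rng.sample(urls, min(sample_size, len(urls)))
        # ...but the verdict is applied to every URL in that group.
        if mean(pages[u] for u in sample) >= threshold:
            kept.update(urls)
    return kept
```

For example, with `{"/hub/good": 0.9, "/hub/bad1": 0.1, "/hub/bad2": 0.1}`, a sample size of 3 and a threshold of 0.5, the sample averages about 0.37, so the whole `/hub/` group is dropped, including the one strong page. The threshold and scores are stand-ins for whatever signals Google actually uses.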

Taylor notes that the quality threshold is not static. It shifts over time as better content gets published across the web. Adam Gent, cited in the article, has noted this moving-target dynamic. The threshold also varies by topic, since not all queries reward freshness equally.

The practical implication is that AI is just an amplifier. Sites that scaled content through freelance farms, agency content mills, or template-based generation saw the same pattern years ago. AI makes it easier to produce volume, which makes it easier to trip Google’s quality sampling at scale.

Google recently described the goal as “non-commodity content” at a Toronto event. That framing fits the sampling model: if a batch of URLs looks interchangeable with what already exists in the index, the sample will underperform, and Google will pull back resources from the whole batch.

What to do

Audit recent scaled content against the sample model. If you published a large batch of URLs in a subfolder or content hub, check which ones Google is still crawling. Use your server logs or the Screaming Frog Log File Analyser to see whether crawl frequency dropped after an initial spike. A decline in crawl frequency across the batch suggests Google sampled the content and found it wanting.
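If you would rather script the log check yourself, a minimal sketch follows. It assumes Apache/Nginx "combined" log format and a batch that lives under one path prefix such as `/guides/`. It matches Googlebot by user-agent string only; Google recommends verifying real Googlebot traffic with a reverse DNS lookup, which this sketch does not do. The function names are illustrative.

```python
import re
from collections import Counter
from datetime import datetime

# Assumes the "combined" log format, e.g.
# 66.249.66.1 - - [04/Mar/2024:10:00:00 +0000] "GET /guides/a HTTP/1.1" 200 512 "-" "...Googlebot/2.1..."
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def weekly_googlebot_hits(lines, prefix):
    """Count requests claiming to be Googlebot, per ISO week, for paths under prefix."""
    counts = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        # User-agent matching only; spoofed bots are not filtered out here.
        if not m or "Googlebot" not in m["ua"] or not m["path"].startswith(prefix):
            continue
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
        year, week, _ = ts.isocalendar()
        counts[(year, week)] += 1
    return dict(sorted(counts.items()))

def crawl_decay(weekly):
    """Hits in the last week that had any hits, divided by the peak week.

    1.0 means no drop from the peak; values near 0 suggest the spike faded.
    Weeks with zero hits are absent from `weekly`, so a batch Google has
    stopped crawling entirely still needs a separate "last seen" check.
    """
    if not weekly:
        return None
    values = list(weekly.values())
    return values[-1] / max(values)
```

Run it once per subfolder and compare the decay ratios: a batch whose ratio sits far below its siblings is the one to inspect first.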

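Check indexation rates by URL pattern. Group your scaled content by subfolder or template type in Google Search Console, for example by verifying a URL-prefix property for the subfolder, or by submitting a separate sitemap per template and filtering the Page indexing report by that sitemap. If indexation rates are low or declining for a specific pattern, Google may be applying its sample-based quality judgment to the entire group.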
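Once you have a per-URL list of indexing status, computing rates per pattern is a few lines. This sketch assumes you have already assembled that list as a CSV yourself (for example from Search Console exports or URL Inspection checks); the `url` and `indexed` column names and the template patterns below are hypothetical placeholders.

```python
import csv
import io
import re
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical template patterns; replace with your own URL structure.
PATTERNS = [
    ("location-pages", re.compile(r"^/locations/[^/]+/$")),
    ("guides", re.compile(r"^/guides/")),
]

def indexation_by_pattern(rows, patterns):
    """Return {group: (indexed, total, rate)}; unmatched URLs go to 'other'."""
    indexed = defaultdict(int)
    total = defaultdict(int)
    for row in rows:
        path = urlparse(row["url"]).path
        # First matching pattern wins, so list the most specific ones first.
        group = next((name for name, rx in patterns if rx.search(path)), "other")
        total[group] += 1
        if row["indexed"].strip().lower() == "yes":
            indexed[group] += 1
    return {g: (indexed[g], total[g], indexed[g] / total[g]) for g in total}

if __name__ == "__main__":
    # Hypothetical export with "url" and "indexed" columns.
    sample_csv = io.StringIO(
        "url,indexed\n"
        "https://example.com/guides/a,yes\n"
        "https://example.com/guides/b,no\n"
        "https://example.com/locations/paris/,no\n"
        "https://example.com/about,yes\n"
    )
    result = indexation_by_pattern(csv.DictReader(sample_csv), PATTERNS)
    for group, (ok, n, rate) in result.items():
        print(f"{group}: {ok}/{n} indexed ({rate:.0%})")
```

Re-running this on exports taken a few weeks apart shows whether a pattern's rate is falling, which matters more than any single snapshot.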

Stop treating volume as the metric. The article argues that production scale should give way to quality maintenance at scale. Before publishing the next 500 pages, pick 20 from your last batch and ask whether they would hold up against the top 3 results for their target query. If not, the sample model predicts the batch will fail.
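Picking the 20 review pages at random, rather than hand-picking favourites, mirrors how a sample would actually see the batch. The sketch below is one way to do it, assuming you have the batch as a list of URLs; it spreads the picks across first-level subfolders round-robin so a small template is not crowded out by a large one. The function name is illustrative.

```python
import random
from collections import defaultdict
from urllib.parse import urlparse

def review_sample(urls, k=20, seed=None):
    """Pick up to k URLs for manual review, spread across first-level subfolders."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for url in urls:
        segments = [s for s in urlparse(url).path.split("/") if s]
        groups[segments[0] if segments else ""].append(url)
    # Shuffle within each subfolder so the picks are random, not the first k.
    for members in groups.values():
        rng.shuffle(members)
    # Round-robin across subfolders until k URLs are picked or all run out.
    picked = []
    while len(picked) < k and any(groups.values()):
        for members in groups.values():
            if members and len(picked) < k:
                picked.append(members.pop())
    return picked
```

With 30 guide URLs and 5 tool URLs, `review_sample(urls, k=20)` returns all 5 tool pages plus 15 guides, so each template gets a fair look before you compare it with the top results.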

Invest in post-publication quality signals. Internal linking, distribution, and editorial updates matter after the freshness boost window closes. Pages that receive no internal links and no updates after publication are the ones most likely to fail Google’s quality sampling.
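A quick way to find the pages most exposed to a failed sample is to cross an internal-link edge list against publish and update dates. This is a minimal sketch assuming you can export `(source, target)` link pairs from a site crawl and pull publication and last-updated dates from your CMS or sitemap `lastmod`; the input shapes and function name are hypothetical.

```python
from collections import Counter
from datetime import date

def at_risk_pages(batch, links, published, updated):
    """Return batch URLs with no internal inlinks and no edits since publication.

    batch: iterable of URLs in the scaled batch.
    links: iterable of (source_url, target_url) pairs from a site crawl.
    published / updated: dicts mapping URL -> date (hypothetical inputs);
    a URL missing from `updated` is treated as never updated.
    """
    # Self-links do not count as internal support for a page.
    inlinks = Counter(target for source, target in links if source != target)
    flagged = []
    for url in batch:
        never_updated = updated.get(url, published[url]) <= published[url]
        if inlinks[url] == 0 and never_updated:
            flagged.append(url)
    return flagged
```

The flagged list is a to-do list: add contextual links from established pages, or update and consolidate the page, before the next batch goes out.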

Don’t blame AI tooling when the content strategy is the problem. If your keyword targeting is thin, your editing is minimal, and your internal linking is absent, swapping AI for human writers won’t fix the pattern. The sampling mechanism judges the quality of what gets published, not how it was produced.

Watch out for

Subfolder-level losses from bad samples. If Google evaluates a representative sample from a URL pattern and the sample fails, the entire subfolder or pattern may lose crawl priority. A few poor pages that land in the sample can drag down hundreds of decent ones grouped under the same path.

Misreading the freshness boost as validation. Early traffic to new content does not mean Google has judged it high quality. The freshness boost reflects initial processing of new URLs, not a quality endorsement. Give scaled content time to settle before drawing conclusions about performance.