Blocking CSS and JS in robots.txt breaks indexing instead of saving crawl budget

Summary

Blocking CSS and JS in robots.txt does not save crawl budget; it breaks Google's ability to render pages correctly. Googlebot needs these resources to understand content and layout, and blocking them leaves Google with an incomplete version of each page. If a large share of crawl activity goes to resources, fix cache headers, consolidate files, or reduce the number of resources instead.

What happened

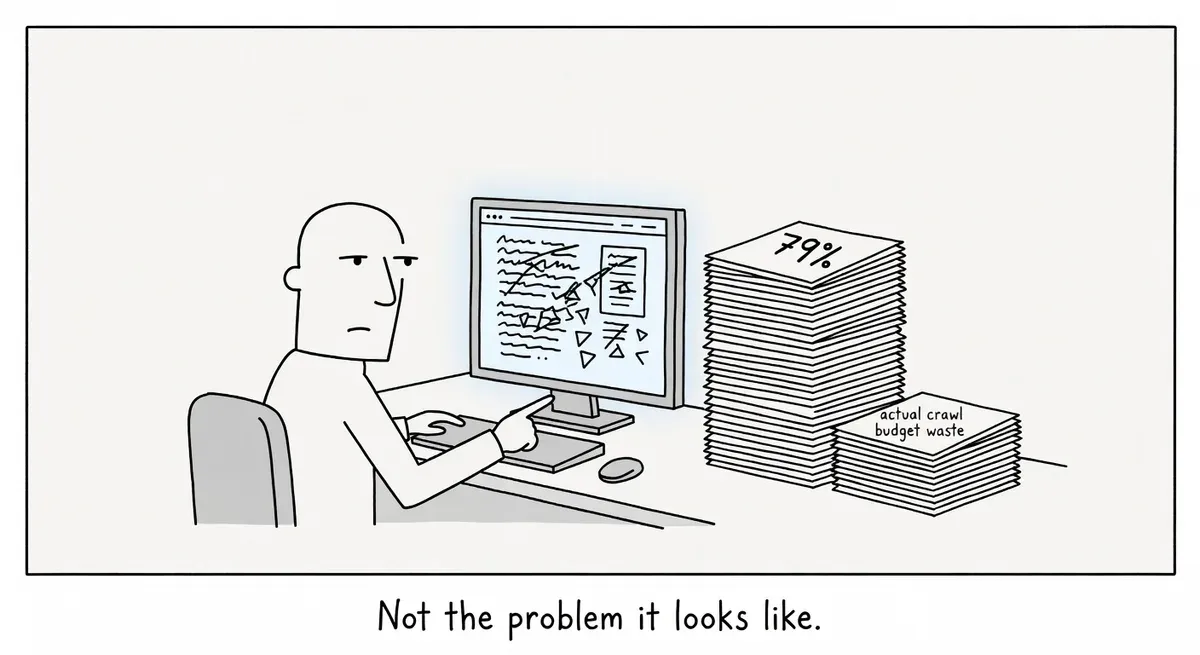

A practitioner posted in r/TechSEO asking whether blocking CSS and JS files in robots.txt would help with crawl budget. The poster reported that 79% of their crawl budget was going to page resource loads, mostly CSS and JS files. The community response was unanimous: do not block these resources.

The top-voted reply, from user mjmilian with 18 points, was blunt: “No, because Google, and other bots, won’t be able to render your pages correctly.” Another commenter, flagged by the subreddit as someone who “knows how the renderer works,” endorsed the warning.

Why it matters

Googlebot renders pages. It executes JavaScript and applies CSS to understand page content and layout. When you block those resources in robots.txt, Googlebot can’t complete the rendering step. The result is that Google sees a broken or incomplete version of your page.

Google’s robots.txt documentation confirms that crawlers download and parse the robots.txt file before crawling any part of a site. Disallow rules for CSS and JS paths stop Googlebot from fetching those files entirely, not just for the initial crawl but for every rendering attempt.
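To make the effect of a Disallow rule concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt content and the example.com URLs are hypothetical. Note also that the standard-library parser does simple prefix matching and does not implement Google's wildcard or longest-match rules, so treat it as an approximation of Googlebot's behavior rather than a replica.

```python
from typing import List
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/
Disallow: /admin/
"""


def blocked_urls(robots_txt: str, user_agent: str, urls: List[str]) -> List[str]:
    """Return the URLs that the given user agent is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


urls = [
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
    "https://example.com/blog/post",
]

# The stylesheet and script are blocked; the article URL is not.
for url in blocked_urls(ROBOTS_TXT, "Googlebot", urls):
    print("blocked:", url)
```

In this example the page itself stays crawlable while the files it needs to render do not, which is the situation the commenters warned about.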

The original poster’s concern about crawl budget is understandable. Seeing 79% of crawl activity spent on resources feels wasteful. But those resource fetches are a normal part of how Googlebot processes pages. Blocking them doesn’t free up crawl budget for content pages. It degrades Googlebot’s ability to understand any page that depends on those resources.

One commenter, mathayles, made a useful distinction about AI crawlers. According to that comment, bots like ClaudeBot only ingest raw HTML and don’t execute JavaScript at all. Blocking JS has no effect on those crawlers because they never attempt to run it. The rendering concern is specific to Googlebot and a few others like Apple’s crawler.

A better approach than blocking resources is to examine why Googlebot needs to fetch them so frequently. High resource fetch rates can indicate issues like uncacheable assets, excessive file counts, or poor use of cache headers.

What to do

Don’t block CSS or JS in robots.txt. If you’re seeing high resource crawl rates, the fix is not to hide resources from Googlebot.

Check your resource caching. If Googlebot is re-fetching the same CSS and JS files repeatedly, the cache lifetimes on those files may be too short, or the headers may be missing entirely. Set appropriate Cache-Control headers on static assets so Googlebot doesn’t need to re-download them on every visit.
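A caching check can be as simple as reading the `Cache-Control` value your server returns for each static asset. The sketch below classifies a header value; the one-day threshold (`min_seconds=86400`) and the verdict labels are assumptions chosen for illustration, not a figure from Google or from the thread.

```python
from typing import Optional


def max_age_seconds(cache_control: Optional[str]) -> Optional[int]:
    """Return the max-age value from a Cache-Control header, or None if absent."""
    if not cache_control:
        return None
    for directive in cache_control.split(","):
        name, _, value = directive.strip().partition("=")
        if name.lower() == "max-age":
            try:
                return int(value.strip('"'))
            except ValueError:
                return None
    return None


def caching_verdict(cache_control: Optional[str], min_seconds: int = 86400) -> str:
    """Classify a Cache-Control value. The threshold is an arbitrary assumption."""
    if not cache_control:
        return "missing"
    names = {d.strip().partition("=")[0].lower() for d in cache_control.split(",")}
    if "no-store" in names:
        return "uncacheable"
    age = max_age_seconds(cache_control)
    if age is None:
        return "no max-age"
    return "short" if age < min_seconds else "ok"


# Hypothetical header values, as they might come back for static assets.
for header in ["public, max-age=31536000", "max-age=300", "no-store", None]:
    print(repr(header), "->", caching_verdict(header))
```

Feed it the header values from your own responses (for example, collected with your HTTP client of choice) and look for assets that come back as "missing", "short", or "uncacheable".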

Audit your resource count. A page that loads dozens of CSS and JS files forces Googlebot to make many requests per page render. Consolidating or reducing the number of resource files can lower the crawl overhead per page.
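One rough way to audit resource counts is to parse a page's HTML and count external stylesheets and scripts. This sketch uses only the standard library; the sample markup is hypothetical, and it counts only resources referenced directly in the HTML, not files loaded later by scripts.

```python
from html.parser import HTMLParser


class ResourceCounter(HTMLParser):
    """Collect external stylesheet and script URLs referenced in the markup."""

    def __init__(self):
        super().__init__()
        self.stylesheets = []
        self.scripts = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if tag == "link" and "stylesheet" in rel and attr.get("href"):
            self.stylesheets.append(attr["href"])
        elif tag == "script" and attr.get("src"):
            # Inline scripts have no src and are not separate fetches.
            self.scripts.append(attr["src"])


def count_resources(html: str) -> dict:
    counter = ResourceCounter()
    counter.feed(html)
    counter.close()
    return {"css": len(counter.stylesheets), "js": len(counter.scripts)}


# Hypothetical page markup for illustration.
SAMPLE_HTML = """
<html><head>
<link rel="stylesheet" href="/css/base.css">
<link rel="stylesheet" href="/css/theme.css">
<link rel="icon" href="/favicon.ico">
<script src="/js/app.js"></script>
<script>console.log("inline")</script>
</head><body><p>Hello</p></body></html>
"""

print(count_resources(SAMPLE_HTML))
```

Running this across a handful of template types (home page, article, category page) gives a quick picture of which templates generate the most resource requests per render.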

Review the crawl stats report in Google Search Console. The report breaks down crawl requests by file type. Look at whether resource fetches are spread across many unique URLs or concentrated on a few files being re-fetched. The pattern tells you whether the problem is too many files or poor caching.
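If you also have server logs, the same distinction can be checked numerically. The sketch below assumes you have already extracted a list of resource URLs fetched by Googlebot (the list here is made up); a high fetches-per-URL ratio points toward re-fetching of the same files, while a ratio near 1 across many URLs points toward too many distinct files.

```python
from collections import Counter
from typing import Dict, List


def summarize_fetches(urls: List[str]) -> Dict[str, object]:
    """Summarize how concentrated a set of resource fetches is."""
    counts = Counter(urls)
    total = sum(counts.values())
    unique = len(counts)
    return {
        "total": total,
        "unique": unique,
        "fetches_per_url": total / unique if unique else 0.0,
        "top": counts.most_common(3),
    }


# Hypothetical fetch log: one stylesheet is requested far more than the rest.
fetched = ["/css/site.css"] * 6 + ["/js/app.js"] * 3 + ["/js/vendor.js"]

summary = summarize_fetches(fetched)
print(summary["total"], "fetches across", summary["unique"], "unique URLs")
print("most fetched:", summary["top"])
```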

Consider whether your content is JS-dependent. If critical content or links are rendered via JavaScript, blocking JS doesn’t just hurt rendering. It makes that content invisible to Google entirely. Server-side rendering or static HTML for key content reduces your dependency on Googlebot’s renderer.
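A quick way to test JS dependence is to check whether a key phrase appears in the raw HTML before any script runs. The sketch below strips `script` and `style` contents and searches what is left; the markup and phrases are hypothetical, and this is only a rough proxy for what a non-rendering crawler would see.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def in_raw_html(html: str, phrase: str) -> bool:
    """Return True if the phrase appears in the page text without running JS."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())
    return phrase.lower() in text.lower()


# Hypothetical page: the heading is static, the plan name is injected by JS.
RAW_HTML = """
<html><body>
<h1>Pricing plans</h1>
<div id="plans"></div>
<script>document.getElementById("plans").textContent = "Enterprise tier";</script>
</body></html>
"""

print(in_raw_html(RAW_HTML, "Pricing plans"))
print(in_raw_html(RAW_HTML, "Enterprise tier"))
```

Phrases that only show up after rendering are the ones that depend on Googlebot being able to fetch and run your JavaScript.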

Watch out for

Legacy robots.txt rules you forgot about. Some older CMS setups or security-focused configurations ship with blanket disallow rules for /wp-includes/, /assets/, or /static/ directories. These can silently block CSS and JS without anyone realizing. Audit your robots.txt for rules that match resource paths.
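A small script can surface candidate rules for review. This sketch flags any Disallow line whose path contains one of a few resource-related substrings; the hint list and the sample file are assumptions for illustration. It is a plain substring heuristic, so expect false positives (for example, `.js` also matches `.json`), and it does not evaluate which user agent each rule applies to.

```python
from typing import List, Tuple

# Assumed hints: directories named in the text plus common asset extensions.
RESOURCE_HINTS = ("/wp-includes", "/assets", "/static", ".css", ".js")


def suspicious_disallows(
    robots_txt: str, hints: Tuple[str, ...] = RESOURCE_HINTS
) -> List[Tuple[int, str]]:
    """Return (line number, line) for Disallow rules that may block resources."""
    findings = []
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        field, sep, value = line.partition(":")
        if not sep or field.strip().lower() != "disallow":
            continue
        path = value.strip().lower()
        if path and any(hint in path for hint in hints):
            findings.append((number, raw.strip()))
    return findings


# Hypothetical legacy robots.txt.
LEGACY_ROBOTS = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/   # legacy rule
Allow: /wp-admin/admin-ajax.php
Disallow: /*.js$
"""

for number, rule in suspicious_disallows(LEGACY_ROBOTS):
    print(f"line {number}: {rule}")
```

Anything it flags still needs a human decision: confirm whether the path actually serves CSS or JS before changing the rule.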

Confusing AI crawler behavior with Googlebot behavior. As one commenter noted, AI crawlers like ClaudeBot don’t render pages at all. Rules that affect Googlebot’s rendering have zero impact on those bots. Don’t assume that what works for one crawler type applies to another.