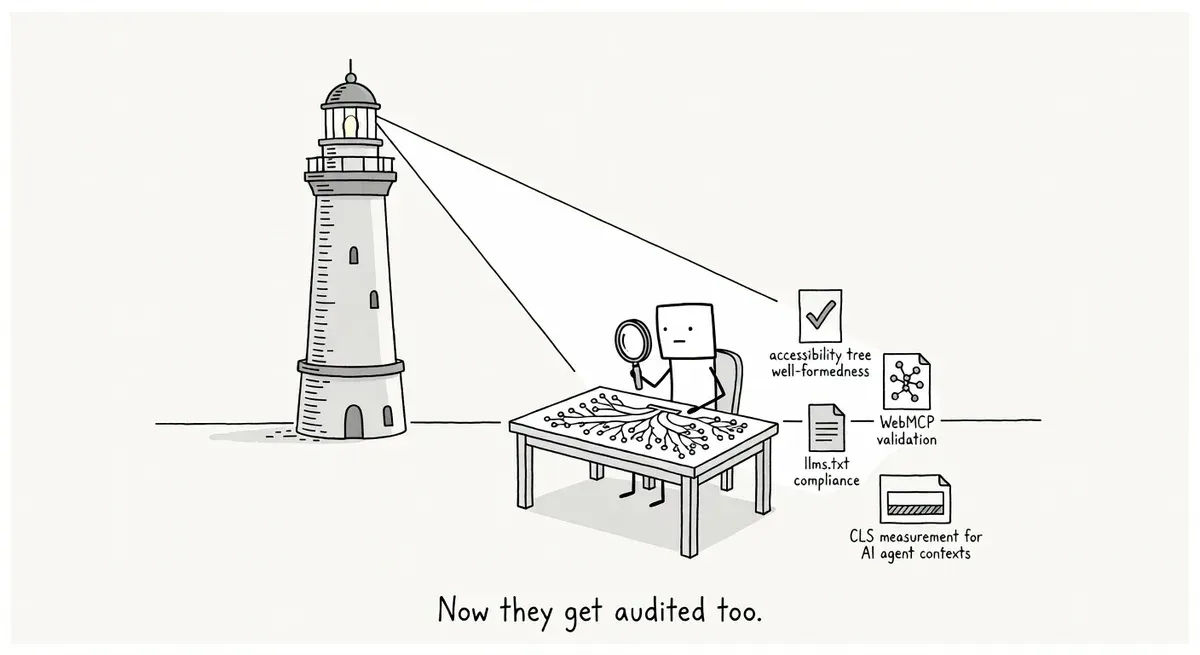

Lighthouse 13.3 adds agentic browsing audits for AI agents

Summary

Lighthouse 13.3 ships an “Agentic Browsing” audit category (still under development) that checks accessibility tree quality, WebMCP form annotations, llms.txt compliance, and Cumulative Layout Shift (CLS) in agent contexts. The accessibility tree and WebMCP checks are the most likely to surface new failures, especially on JS-heavy sites where hydration timing affects what agents see. Run the CLI locally to audit now, since PageSpeed Insights hasn't updated yet.

What happened

Lighthouse 13.3 shipped this week with a new “Agentic Browsing” category that audits how well websites work with AI agents. The category is still marked “under development,” but it runs checks grouped into four areas:

- Accessibility tree well-formedness. Verifies that the page’s accessibility tree is properly structured. The accessibility tree is a browser-native representation of the DOM that exposes roles, names, and states to assistive technologies and AI agents.

- WebMCP validation. Checks whether HTML forms are annotated with WebMCP metadata, a declarative API that lets websites expose specific commands for agents to use within the visitor’s browser session.

- llms.txt compliance. Looks for an llms.txt file and flags it if the file is missing an H1 header, is too short, or contains no links.

- Layout shift detection. Surfaces existing CLS data in the agentic context, since agents taking screenshots can be confused by shifting content.

The category draws partly from existing Lighthouse data (accessibility and CLS) and partly from new audits (WebMCP and llms.txt). Google also published a guide on building agent-friendly websites, which we covered separately. Several of these audits overlap with that guide’s recommendations.

You won’t fail the category just because you haven’t added AI-specific features. The source notes that example.com scores a green 2/2.

Why it matters

The agentic browsing category creates a diagnostic layer alongside traditional SEO and accessibility audits. Sites scoring well on existing checks can still fail here if their semantic structure doesn’t meet stricter agent expectations.

Accessibility tree quality is where human-focused and agent-focused audits diverge most. For example, putting aria-label on a div without an explicit role violates the ARIA spec, yet screen readers often silently ignore it, so the problem goes unnoticed. The agent audit catches cases like this. A clean WCAG AA report is not equivalent to a well-formed accessibility tree.

JavaScript-heavy sites face particular risk. Google’s guide notes that agents interact with pages through screenshots, HTML, and the accessibility tree. On sites built with frameworks like React or Next.js, forms may only render, or only receive their annotations, once client-side JavaScript has hydrated the page. If the accessibility tree snapshot captures the pre-hydration state, form annotations appear missing.

WebMCP is the most unfamiliar check. Unlike general MCP servers, WebMCP targets front-end interactions within the visitor’s browser session. The DebugBear writeup mentions a programmatic navigator.modelContext.registerTool surface alongside a declarative form-annotation pattern. WebMCP is not a ratified W3C or WHATWG standard and ships in no browser today. Treat it as a moving target. The audit checks whether forms carry annotations matching the expected schema and surfaces any registered tools. ARIA attributes are not a substitute for WebMCP metadata.

The source explicitly notes llms.txt “is not widely used by AI tools currently.” Be aware of the spec, but don’t over-invest in a standard with minimal agent adoption today.

Semrush launched similar agent-readiness audits in its Site Audit tool, suggesting this class of checks is becoming standard across the industry.

What to do

Run Lighthouse 13.3 locally. PageSpeed Insights and Chrome DevTools still run an older Lighthouse version. DebugBear expects them to update in the coming months. Running the CLI now lets you audit immediately:

npm install -g lighthouse@latest

lighthouse --view https://your-site.com/

Check the agentic browsing category in the report output.
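If you would rather inspect the result in a script than in the HTML report, the CLI can also write JSON (`--output=json --output-path=report.json`). The following is a minimal sketch that lists each category's score from such a file. It assumes the new category's id or title contains the word "agentic"; the exact id Lighthouse 13.3 uses is not confirmed here, and `report.json`, `load_categories`, and `find_category` are illustrative names.

```python
import json
from pathlib import Path


def load_categories(report: dict) -> dict:
    """Map category id -> (title, score) from a Lighthouse JSON report.

    Scores are floats between 0 and 1, or None when a category is unscored.
    """
    result = {}
    for cat_id, cat in report.get("categories", {}).items():
        result[cat_id] = (cat.get("title", cat_id), cat.get("score"))
    return result


def find_category(report: dict, keyword: str = "agentic"):
    """Return (id, title, score) for the first category whose id or title
    contains `keyword` (case-insensitive), or None if there is no match.

    Matching on the word "agentic" is an assumption, not a documented id.
    """
    for cat_id, (title, score) in load_categories(report).items():
        if keyword in cat_id.lower() or keyword in title.lower():
            return cat_id, title, score
    return None


if __name__ == "__main__":
    path = Path("report.json")  # hypothetical output path
    if path.exists():
        report = json.loads(path.read_text(encoding="utf-8"))
        for cat_id, (title, score) in load_categories(report).items():
            print(f"{cat_id}: {title} -> {score}")
        print("agentic category:", find_category(report))
```

Printing every category first is deliberate: if the keyword guess is wrong, the full list still shows what the report actually contains.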

Audit your accessibility tree separately from WCAG compliance. The ARIA Authoring Practices Guide defines what “well-formed” means. Check role attributes on container elements and nesting hierarchies. Sites using Shadow DOM or web components should verify their accessibility trees don’t fragment across boundaries.
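As a rough starting point for the role check, here is a sketch that scans static HTML for `div` or `span` elements carrying `aria-label` without an explicit `role`. It is not how Lighthouse implements its audit, it only sees markup as served (not the browser's computed accessibility tree), and `find_named_generics` is a hypothetical helper name.

```python
from html.parser import HTMLParser

# Elements with no implicit semantic role; naming them requires an explicit role.
GENERIC_TAGS = {"div", "span"}


class NamedGenericFinder(HTMLParser):
    """Collects generic elements that have aria-label but no role attribute."""

    def __init__(self):
        super().__init__()
        self.findings = []  # list of (tag, line, column)

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag in GENERIC_TAGS and "role" not in attr_map and "aria-label" in attr_map:
            line, col = self.getpos()
            self.findings.append((tag, line, col))


def find_named_generics(html: str):
    """Return (tag, line, column) for each flagged element in `html`."""
    finder = NamedGenericFinder()
    finder.feed(html)
    finder.close()
    return finder.findings


if __name__ == "__main__":
    sample = (
        '<div aria-label="Cart">3 items</div>\n'
        '<div role="region" aria-label="Cart">3 items</div>\n'
    )
    for tag, line, col in find_named_generics(sample):
        print(f"<{tag}> with aria-label but no role at line {line}, column {col}")
```

A static scan like this will miss attributes added by JavaScript, so treat it as a quick filter before inspecting the live tree in browser tooling.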

Review WebMCP form annotations if you have interactive forms. The spec is experimental and subject to change, so check the latest version before implementing. If your site has agent-facing forms (search, filters, checkout), annotate those first. Lighthouse checks form coverage and schema validity separately.

Don’t rush to create an llms.txt file just for the audit score. If you have one, make sure it includes an H1 header, meaningful content, and links. Those are the three things the audit checks. If you don’t have one, wait for evidence that AI tools actually parse it.
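If you already have an llms.txt, the three checks can be approximated locally. The sketch below is an assumption-laden stand-in, not Lighthouse's implementation: the length cutoff `MIN_LENGTH` is a made-up value (the audit's real threshold is not stated in the source), and the link pattern accepts either markdown links or bare URLs.

```python
import re

# Hypothetical cutoff; the audit's actual "too short" threshold is not documented here.
MIN_LENGTH = 50


def check_llms_txt(text: str, min_length: int = MIN_LENGTH) -> list[str]:
    """Return a list of problems found in an llms.txt body (empty if none)."""
    issues = []

    # An H1 is a line starting with a single '#' followed by a space and text.
    if not re.search(r"^#[ \t]+\S", text, flags=re.MULTILINE):
        issues.append("missing H1 header")

    if len(text.strip()) < min_length:
        issues.append("too short")

    # Accept markdown links [label](target) or bare http(s) URLs.
    if not re.search(r"\[[^\]]+\]\([^)\s]+\)|https?://\S+", text):
        issues.append("no links")

    return issues


if __name__ == "__main__":
    sample = (
        "# Example\n\n"
        "> Short summary of the site for agents.\n\n"
        "- [Docs](https://example.com/docs)\n"
    )
    print(check_llms_txt(sample) or "no issues found")
```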

Check CLS in the agentic context. Agents take screenshots and read the accessibility tree at page load. If content shifts after that snapshot, the agent works from stale layout data. Sites passing the 0.1 CLS threshold for human visitors may still trip agents that don’t wait for visual stability.
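To make the stale-snapshot risk concrete, the sketch below splits a list of layout shifts around the moment an agent captures the page. It is a simplification: it sums individual shift scores rather than applying CLS session windowing, and the `LayoutShift` records and the 500 ms snapshot time are invented sample data, not measurements.

```python
from dataclasses import dataclass

CLS_THRESHOLD = 0.1  # the "good" CLS threshold mentioned above


@dataclass(frozen=True)
class LayoutShift:
    time_ms: float  # when the shift happened, relative to navigation start
    score: float    # layout shift score of this single shift


def summarize_shifts(shifts, snapshot_ms):
    """Return (total_shift, shift_after_snapshot) for the given shifts.

    Plain sums only; real CLS takes the worst session window instead.
    """
    total = sum(s.score for s in shifts)
    after = sum(s.score for s in shifts if s.time_ms > snapshot_ms)
    return total, after


if __name__ == "__main__":
    # Invented sample data for illustration.
    shifts = [LayoutShift(200, 0.01), LayoutShift(900, 0.03), LayoutShift(1500, 0.04)]
    total, after = summarize_shifts(shifts, snapshot_ms=500)
    print(f"total shift: {total:.2f} (under threshold: {total < CLS_THRESHOLD})")
    print(f"shift after the snapshot: {after:.2f}")
```

In this sample the page stays under the threshold overall, yet most of the movement happens after the snapshot, which is the case an agent that does not wait for stability would get wrong.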

Watch out for

Hydration timing on JS frameworks. Test with and without JavaScript to see what the pre-hydration accessibility tree looks like. Forms rendered via client-side hydration may appear missing in the snapshot.
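One way to run that comparison is to save two HTML snapshots of the same page, one as served (JavaScript disabled) and one copied from the rendered DOM, then diff the forms in each. The sketch below assumes you have obtained both strings yourself; `collect_forms` and `forms_missing_before_hydration` are hypothetical helpers, and forms are identified by `id`, `name`, or `action`, which is only a heuristic.

```python
from html.parser import HTMLParser


class FormCollector(HTMLParser):
    """Records a label for every <form> start tag encountered."""

    def __init__(self):
        super().__init__()
        self.forms = []

    def handle_starttag(self, tag, attrs):
        if tag != "form":
            return
        attr_map = dict(attrs)
        # Prefer a stable identifier; fall back to position in the document.
        label = (
            attr_map.get("id")
            or attr_map.get("name")
            or attr_map.get("action")
            or f"form#{len(self.forms)}"
        )
        self.forms.append(label)


def collect_forms(html: str) -> list[str]:
    """Return labels for all forms in `html`, in document order."""
    collector = FormCollector()
    collector.feed(html)
    collector.close()
    return collector.forms


def forms_missing_before_hydration(pre_html: str, post_html: str) -> list[str]:
    """Forms present in the hydrated snapshot but absent from the served HTML."""
    before = set(collect_forms(pre_html))
    return [label for label in collect_forms(post_html) if label not in before]


if __name__ == "__main__":
    pre = '<div id="root"></div>'
    post = '<div id="root"><form id="search"><input name="q"></form></div>'
    print(forms_missing_before_hydration(pre, post))
```

Any form this reports exists only after client-side rendering, so it is a candidate for server rendering or for re-testing once hydration has finished.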

WebMCP and ARIA are separate concerns. Passing accessibility audits does not mean passing agentic browsing audits. WebMCP metadata uses its own schema independent of ARIA labels.

The “under development” label matters. Don’t refactor heavily based on current audit criteria. Monitor Lighthouse documentation for spec changes.