Agent runtimes, not models, now control how AI reads your site

Summary

Cloudflare and OpenAI shipped agent runtime SDKs in mid-April. The releases position the runtime, rather than the model, as the layer that fetches, parses, and interprets web pages for AI agents. Agent runtimes often run under more restrictive sandboxes than Googlebot and may block dynamic imports and certain JavaScript patterns. As a result, sites that pass Google's crawl tests can still fail for agents. Test your pages with JavaScript disabled or sandboxed, and serve JSON-LD in the server response rather than injecting it with JavaScript.

What happened

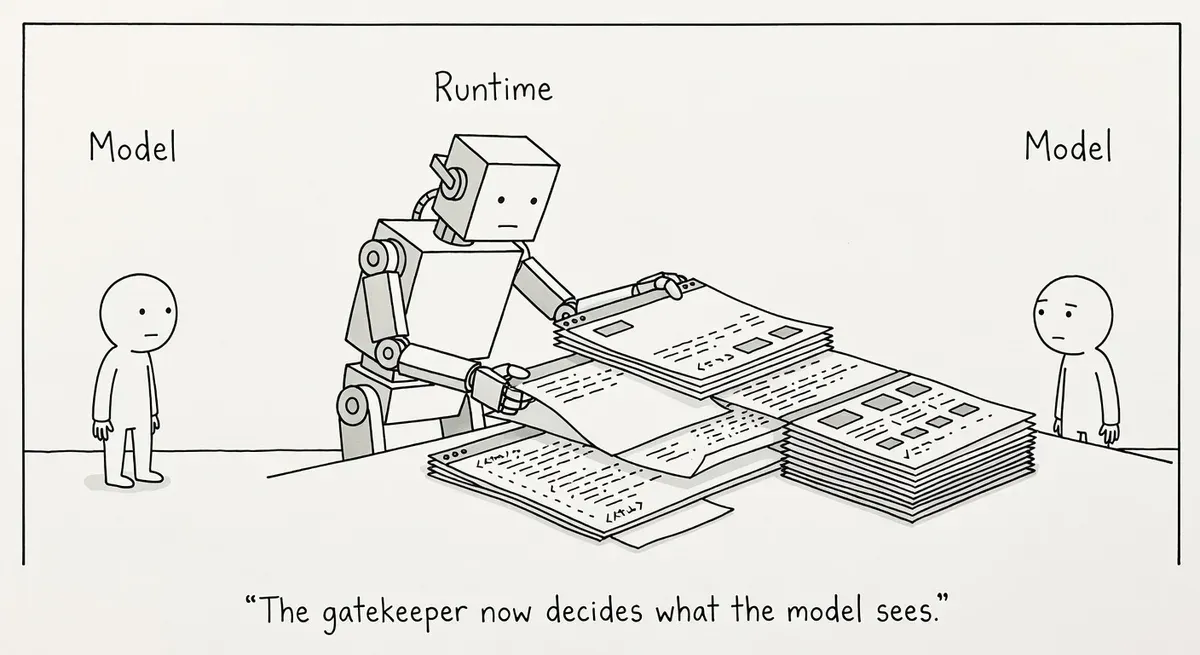

Cloudflare and OpenAI both shipped agent runtime SDKs in mid-April. A Search Engine Journal analysis by Slobodan Manic argues that this marks a structural shift: the runtime, not the model, now fetches your page, parses your HTML, executes (or skips) your JavaScript, and resolves your structured data.

Cloudflare’s April 15 release, called Project Think, includes durable execution with crash recovery, sub-agents, persistent sessions, and sandboxed code execution. On April 16, Cloudflare’s Workers AI platform added a vendor-agnostic inference layer and a vector index for agent retrieval.

Around the same time, OpenAI shipped an updated Agents SDK with native sandbox execution. Two of the largest AI and web infrastructure companies answered the same question within days: how does a long-running AI agent actually run in production?

Why it matters

In these agent architectures, the model no longer reads your website directly. The runtime fetches your page, parses it, and hands the result to the model. By the time any model sees your content, it sees the runtime’s interpretation of it.

This is an emerging pattern rather than a settled universal reality. Many AI products still use proprietary or model-specific crawlers. Perplexity, for example, operates its own crawling infrastructure independent of these runtime SDKs.

But the Cloudflare and OpenAI releases signal a clear direction. Optimizing narrowly for individual models misses the point if the runtime upstream can’t parse your site.

JavaScript-heavy sites face the sharpest risk. Sandboxed execution in agent runtimes may block dynamic imports, certain fetch patterns, or browser APIs. These failures can occur even on pages that Googlebot renders correctly.

Passing Google’s crawl tests only confirms Googlebot compatibility. Agent runtimes operate under different and often more restrictive sandboxes. A Next.js site with lazy-loaded product attributes could render perfectly for humans while agents see incomplete data. Those failures won’t appear in traditional SEO audits.

Structured data practitioners face a related problem. JSON-LD that depends on JavaScript injection may not resolve at all in a runtime that skips client-side execution. Server-side JSON-LD is the recommended practice regardless of agent considerations.
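A minimal sketch of server-side JSON-LD, assuming a Python backend that builds HTML strings. The render_product_page template and its fields are hypothetical. The structured data is serialized into the initial response, so a runtime that never runs JavaScript still receives it. Escaping "<" as the JSON escape \u003c keeps a value such as "</script>" from closing the tag early, and any JSON parser turns it back into "<".

```python
import html
import json


def render_json_ld(data: dict) -> str:
    """Serialize structured data into a script tag for the initial HTML response."""
    # Escape "<" so a value like "</script>" cannot close the tag early.
    # The JSON escape \u003c is decoded back to "<" by any JSON parser.
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'


def render_product_page(name: str, price: str, currency: str) -> str:
    """Hypothetical page template: JSON-LD ships in the HTML, not via client-side JS."""
    product = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {"@type": "Offer", "price": price, "priceCurrency": currency},
    }
    return (
        "<!doctype html><html><head>"
        f"<title>{html.escape(name)}</title>"
        f"{render_json_ld(product)}"
        "</head><body><main>"
        f"<h1>{html.escape(name)}</h1>"
        "</main></body></html>"
    )
```

Because the markup is produced on the server, View Page Source and curl show exactly what an agent runtime that skips JavaScript would receive.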

Agent sessions that fan out into parallel requests create infrastructure concerns too. Several concurrent requests from a single agent task could trigger rate limiting or bot detection systems designed for crawlers that fetch one page at a time. Server logs may not clearly distinguish agent runtime traffic from regular bot traffic.

What to do

Test your critical pages without JavaScript. Disable JavaScript and check whether your most important content, structured data, and product attributes still appear in the raw HTML. If key information only exists after client-side rendering, server-side render it or pre-render it as static HTML.
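The no-JavaScript check can be scripted. This sketch assumes only Python's standard library. It fetches the raw server response with urllib, which never executes JavaScript, and collects text outside script, style, and template tags. It then reports which required phrases are missing. The function names and the simple substring matching are illustrative, and the result approximates what an agent runtime sees rather than reproducing any particular runtime.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class RawTextExtractor(HTMLParser):
    """Collects text from raw HTML, skipping code and style blocks."""

    SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only text outside <script>/<style>/<template> counts as page content.
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_without_js(raw_html: str, required: list[str]) -> list[str]:
    """Return the required phrases that do not appear in the raw HTML text."""
    parser = RawTextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks)
    return [phrase for phrase in required if phrase not in text]


def fetch_raw_html(url: str, user_agent: str = "raw-html-check/0.1") -> str:
    """Fetch the server response as-is; urllib does not execute JavaScript."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Usage might look like missing_without_js(fetch_raw_html("https://example.com/product/42"), ["Weight: 280 g"]), with a placeholder URL. An empty list means every phrase is in the raw HTML. Anything returned appears only after client-side rendering, if at all.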

Audit your JSON-LD independently from your rendered page. Use View Page Source or curl to check whether structured data exists in the raw server response before any JavaScript executes. The Rich Results Test uses a Googlebot-equivalent rendering pipeline, so it won’t catch JS-dependency issues relevant to agent runtimes. If your structured data is injected by JavaScript, add it to the initial server response instead.
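The same raw-response approach works for structured data. This minimal sketch assumes Python's html.parser and json modules. It collects the bodies of script tags typed application/ld+json from HTML fetched without running JavaScript, for example with curl or urllib, and reports blocks that fail to parse. JSON-LD that client-side code injects later simply won't be found. The function and class names are illustrative.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the bodies of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_json_ld = False
        self._buffer: list[str] = []
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            # Attribute values can be None (e.g. <script async>), hence the "or".
            script_type = (dict(attrs).get("type") or "").split(";")[0].strip().lower()
            if script_type == "application/ld+json":
                self._in_json_ld = True
                self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self.blocks.append("".join(self._buffer))
            self._in_json_ld = False


def audit_json_ld(raw_html: str) -> tuple[list, list[str]]:
    """Return (parsed JSON-LD objects, parse errors) found in the raw HTML."""
    parser = JsonLdExtractor()
    parser.feed(raw_html)
    parser.close()
    parsed, errors = [], []
    for index, block in enumerate(parser.blocks):
        try:
            parsed.append(json.loads(block))
        except json.JSONDecodeError as exc:
            errors.append(f"block {index}: {exc.msg}")
    return parsed, errors
```

If audit_json_ld returns no objects for a page whose rendered DOM does contain structured data, that data is being injected by JavaScript.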

Check your authentication flow for multi-request sessions. Auth flows built for one-shot human logins can break when an agent needs to hold state across a multi-request task. Verify that agents acting on a user’s behalf can keep a session alive from the first request of a task to the last.

Review your rate limiting and bot detection rules. Agent runtimes may send multiple concurrent requests as part of a single user task. If your WAF treats rapid or concurrent requests as abuse, legitimate agent traffic may be blocked without any visible error on your side. Log agent runtime user-agent strings separately so you can see when this happens.
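Here is a sketch of separate logging, assuming access logs in the Combined Log Format. It tags each line by user-agent token and counts status codes per category, so 403 or 429 responses served to agents stand out. The token list is an illustrative assumption, not a complete registry. ChatGPT-User and Perplexity-User are published user-initiated agent tokens, and Googlebot and bingbot are search crawlers. Runtimes that don't identify themselves won't be caught.

```python
import re
from collections import Counter

# Illustrative tokens only; extend with the user agents your own logs show.
AGENT_UA_TOKENS = ("ChatGPT-User", "Perplexity-User")
CRAWLER_UA_TOKENS = ("Googlebot", "bingbot")

# Combined Log Format tail: "request" status bytes "referer" "user-agent"
LOG_TAIL = re.compile(r'"[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"$')


def classify_user_agent(user_agent: str) -> str:
    if any(token in user_agent for token in AGENT_UA_TOKENS):
        return "ai-agent"
    if any(token in user_agent for token in CRAWLER_UA_TOKENS):
        return "search-crawler"
    return "other"


def summarize(log_lines) -> Counter:
    """Count (category, status) pairs so blocked agent requests stand out."""
    counts: Counter = Counter()
    for line in log_lines:
        match = LOG_TAIL.search(line.rstrip("\n"))
        if match is None:
            continue  # skip lines that are not in Combined Log Format
        counts[(classify_user_agent(match["ua"]), match["status"])] += 1
    return counts
```

A spike in ("ai-agent", "429") or ("ai-agent", "403") counts suggests your rate limits or bot rules are catching agent traffic.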

Build for accessibility first. Semantic HTML, proper heading hierarchy, and ARIA landmarks are accessibility best practices that also make sites parseable by agent runtimes. A site that works for screen readers is already well-positioned for AI browsing agents that navigate via the accessibility tree, such as those using Playwright MCP.
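Parts of this can be checked in raw HTML. This minimal sketch assumes Python's html.parser. It flags heading levels that skip, such as an h1 followed directly by an h3, and pages with no main landmark (a main element or role="main"). It is a quick lint with illustrative names. It does not replace a full accessibility audit or an inspection of the accessibility tree a browser builds.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Records heading levels and whether a main landmark exists."""

    def __init__(self) -> None:
        super().__init__()
        self.heading_levels: list[int] = []
        self.has_main = False

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.heading_levels.append(int(tag[1]))
        if tag == "main" or dict(attrs).get("role") == "main":
            self.has_main = True


def outline_problems(raw_html: str) -> list[str]:
    """Return human-readable structure problems found in the raw HTML."""
    parser = OutlineParser()
    parser.feed(raw_html)
    parser.close()
    problems = []
    levels = parser.heading_levels
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:  # e.g. h1 followed directly by h3
            problems.append(f"heading level skips from h{prev} to h{cur}")
    if not parser.has_main:
        problems.append("no <main> landmark")
    return problems
```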

Consider structured agent access via WebMCP. The Web Model Context Protocol is an emerging pattern (not yet a ratified standard) that lets pages register tools and expose structured access to page sections for AI agents via navigator.modelContext. Rather than hoping every runtime correctly parses your HTML, WebMCP gives agents a structured interface to your content.

Serve machine-readable responses at key endpoints. If your pages only make sense inside a full browser session with CSS layout, agents will struggle. Non-semantic HTML breaks agent interaction across multiple access patterns, as covered in our analysis of Google’s developer guidance. The minimum is well-formed semantic HTML with server-rendered JSON-LD.

Watch out for

Stale state after crash recovery. Durable runtimes like Cloudflare’s can pause mid-task and resume later using previously fetched content. Pages with time-sensitive data (pricing, inventory, event availability) are most exposed. Cache-Control headers govern CDN and browser caches, not what a runtime persists internally. Design fallbacks like re-validation steps, short-lived tokens, and server-side timestamps.
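One way to apply the re-validation and server-side timestamp fallbacks is a short-lived, signed quote. The page carries the price together with the time the server issued it, and the server rechecks both when the agent acts. The sketch below uses Python's standard hmac module. The token format, the five-minute TTL, the secret, and the function names are all illustrative assumptions.

```python
import hashlib
import hmac
import time
from typing import Optional

SECRET = b"replace-with-a-real-secret"  # hypothetical; load from config in practice
QUOTE_TTL_SECONDS = 300  # assumption: a quoted price is valid for five minutes


def _sign(body: str) -> str:
    return hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()


def issue_quote(sku: str, price_cents: int, now: Optional[float] = None) -> str:
    """Embed in the page: sku|price|issued_at|signature (assumes no '|' in SKUs)."""
    issued = int(now if now is not None else time.time())
    body = f"{sku}|{price_cents}|{issued}"
    return f"{body}|{_sign(body)}"


def check_quote(token: str, current_price_cents: int, now: Optional[float] = None) -> str:
    """Server-side re-validation when the agent submits the quote."""
    try:
        sku, price, issued, sig = token.split("|")
    except ValueError:
        return "invalid"
    if not hmac.compare_digest(sig, _sign(f"{sku}|{price}|{issued}")):
        return "invalid"
    now = now if now is not None else time.time()
    if now - int(issued) > QUOTE_TTL_SECONDS:
        return "expired"  # stale snapshot, e.g. an agent resumed after a crash
    if int(price) != current_price_cents:
        return "price-changed"
    return "ok"
```

An agent that resumes after a long pause and submits an old quote gets "expired" or "price-changed" back. It can then refetch the page instead of acting on stale data.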

Invisible failures across runtimes. A site that works for Googlebot may fail silently for Cloudflare’s sandbox or OpenAI’s runtime. No single monitoring tool covers all agent runtimes yet. Manual testing against raw HTML output is the most reliable check.