Semrush launches AI agent readiness audits for technical SEO

Summary

Semrush Site Audit now scores your site's readiness for AI agents, flagging blocked crawlers and missing llms.txt files.

The bigger issue it points to: if your key pages render pricing or product data client-side, AI agents that don't execute JavaScript see only an empty template. That isn't a robots.txt problem; the content is simply invisible to them.

Check your critical pages with curl. If the data isn't in the raw HTML response, agents that don't run JavaScript can't see it either.
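The curl check can be approximated in Python. This is a minimal sketch, assuming the standard library's `urllib.request`, which, like curl, fetches the server response without executing any JavaScript. The function names, the user-agent string, and the sample strings are hypothetical; substitute the facts your own page must expose.

```python
import urllib.request

# Hypothetical user-agent string for this check; it is not an official crawler UA.
DEFAULT_UA = "Mozilla/5.0 (compatible; raw-html-check/0.1)"


def fetch_raw_html(url: str, user_agent: str = DEFAULT_UA, timeout: float = 10.0) -> str:
    """Fetch the server's HTML response as-is, with no JavaScript execution (like curl)."""
    req = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


def missing_from_raw_html(html: str, required: list[str]) -> list[str]:
    """Return the required strings that do not appear (case-insensitively) in the raw HTML."""
    lowered = html.lower()
    return [s for s in required if s.lower() not in lowered]


if __name__ == "__main__":
    # Offline demo: a client-side-rendered shell whose price would only arrive via JS.
    shell = '<html><body><div id="app"></div><script src="/bundle.js"></script></body></html>'
    print(missing_from_raw_html(shell, ["$49", "per month"]))
```

Against a live page, call `missing_from_raw_html(fetch_raw_html(url), [...])` with your own URL. A non-empty result means those strings either appear only after client-side rendering or not at all.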

What happened

Semrush has released a set of features designed to help sites prepare for AI agent interactions. The company published a guide on agentic search optimization on May 1, walking through how its existing tools can audit a site’s readiness for AI-driven browsing and task completion.

The core addition is an “AI Search Health” score within Semrush’s Site Audit tool. After running a crawl, users can review a score reflecting how accessible and structured their pages are for AI crawlers. A “Blocked from AI Search” widget shows which AI crawlers are blocked via robots.txt and which pages are affected.
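The widget's core question (which AI user agents robots.txt blocks, and for which URLs) can be reproduced locally. This is a minimal sketch using Python's `urllib.robotparser`; the function name and sample rules are hypothetical. Note that `robotparser` applies the first matching rule in file order and matches user-agent tokens by substring, whereas Google follows the longest-match rule in RFC 9309, so treat the output as an approximation and confirm edge cases against each crawler operator's documentation.

```python
from urllib.robotparser import RobotFileParser

# The AI user agents named in this article; extend the tuple as needed.
AI_USER_AGENTS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot")


def blocked_by_robots(robots_txt: str, urls: list[str], agents=AI_USER_AGENTS) -> dict[str, list[str]]:
    """Map each user agent to the URLs that the given robots.txt text disallows for it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: [u for u in urls if not parser.can_fetch(agent, u)] for agent in agents}


if __name__ == "__main__":
    # Hypothetical rules: a blanket GPTBot block plus a site-wide /admin/ block.
    rules = """
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""
    pages = ["https://example.com/pricing", "https://example.com/admin/users"]
    for agent, blocked in blocked_by_robots(rules, pages).items():
        print(f"{agent}: {blocked or 'no blocked pages'}")
```

To check a live site, download its robots.txt first and pass the text in along with the commercial URLs you care about.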

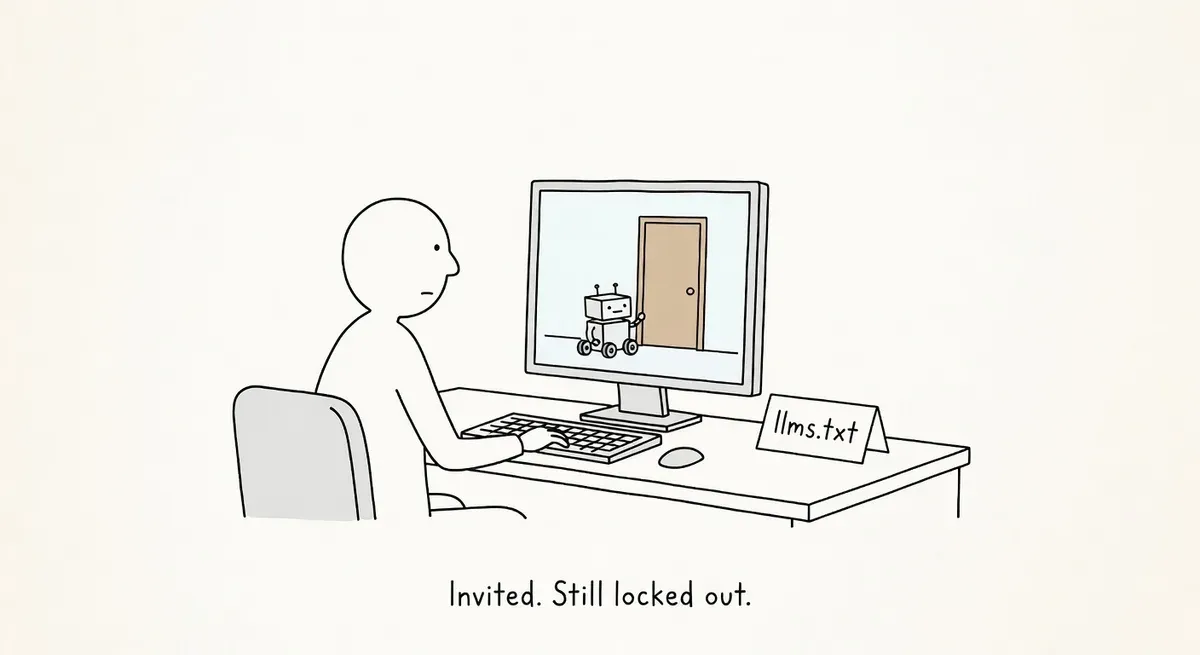

The Site Audit’s Issues tab now includes an “AI Search” filter that flags problems like missing anchor text on links, pages with only one incoming internal link, content needing optimization, and a missing llms.txt file.
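Two of these checks, links with no anchor text and pages that only one other page links to, can be roughly reproduced with the standard library's `html.parser`. This sketch assumes you already have crawled HTML in a `{url: html}` dict; the class and function names are hypothetical, and Semrush's own counting rules may differ (this version counts distinct linking pages and ignores `nofollow`, JavaScript navigation, and redirects).

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect (absolute_url, anchor_text) for every <a href> on one page."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links: list[tuple[str, str]] = []
        self._href = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("href"):
            self._href = urljoin(self.base_url, attrs["href"])
            self._text = []
        elif tag == "img" and self._href is not None:
            # An image link's alt text serves as its anchor text.
            self._text.append(attrs.get("alt") or "")

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join(" ".join(self._text).split())  # normalize whitespace
            self.links.append((self._href, text))
            self._href = None


def audit_links(pages: dict[str, str]):
    """Return ({page: links with empty anchor text}, {page: number of other crawled pages linking to it})."""
    empty_anchor: dict[str, list[str]] = {}
    incoming = {url: 0 for url in pages}
    for url, html in pages.items():
        collector = LinkCollector(url)
        collector.feed(html)
        collector.close()
        empty_anchor[url] = [href for href, text in collector.links if not text]
        host = urlparse(url).netloc
        # Count each linking page once per target, ignoring fragments and self-links.
        targets = {urldefrag(href).url for href, _ in collector.links if urlparse(href).netloc == host}
        for target in targets - {url}:
            if target in incoming:
                incoming[target] += 1
    return empty_anchor, incoming
```

Pages where `incoming[page] == 1` correspond roughly to the single-incoming-link issue; pages at zero are orphans within the crawled set.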

Semrush also recommends using its Log File Analyzer to check whether AI bots actually crawl your site. Users can filter server logs for user agents like GPTBot, ChatGPT-User, OAI-SearchBot, and ClaudeBot to see which pages get bot activity, what status codes bots encounter, and whether certain pages or file types are being skipped.

Why it matters

The concept Semrush is calling “agentic readiness” goes beyond AI Overview visibility. It addresses whether an AI agent can land on your site, understand the content, and complete a task like retrieving pricing or submitting a form. The distinction matters because agent-driven workflows penalize sites differently than traditional search does: the cost of being hard to parse is not a lower ranking but being skipped altogether.

A page that ranks fine in Google but hides pricing behind a PDF or relies on heavy client-side JavaScript may work for human visitors. An AI agent encountering the same page may simply move on to a competitor. Semrush frames this as a filtering problem: agents evaluate multiple sites, extract structured information, and narrow down options. Sites that present information clearly survive the cut.

The llms.txt check is worth noting. While llms.txt is still an emerging convention rather than a formal standard, Semrush's decision to flag its absence suggests the file is becoming part of the expected technical SEO baseline for AI readiness.

For e-commerce and SaaS sites with pricing pages, feature comparisons, and signup flows, the risk is concrete: if agents can’t extract your product details programmatically, you drop out of consideration before a human ever sees your brand.

What to do

Run the AI Search audit in Semrush Site Audit. Launch a crawl, then check your AI Search Health score. Review the “Blocked from AI Search” widget to see if you’re blocking GPTBot, ClaudeBot, or other AI user agents in robots.txt. Unblock them for pages you want AI agents to access.

Check the AI Search filter under Issues. Look for flagged problems: missing anchor text, near-orphan pages with only one incoming internal link, and the missing llms.txt warning. Prioritize fixing access issues on your most important commercial pages.
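For the llms.txt warning, a quick self-check is to request `/llms.txt` at the site root and sanity-check its shape. This sketch assumes the structure described in the llmstxt.org proposal, where an H1 title is the only required element and a `>` summary plus markdown link lists are typical; because the convention is not a formal standard, the softer checks are reported as hints. Function names are hypothetical, and a site that answers every path with a 200 homepage would need an extra content-type check.

```python
import urllib.error
import urllib.request
from typing import Optional
from urllib.parse import urljoin


def fetch_llms_txt(site: str, timeout: float = 10.0) -> Optional[str]:
    """Return the text of /llms.txt at the site root, or None on a 404."""
    url = urljoin(site, "/llms.txt")
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None
        raise


def llms_txt_findings(text: str) -> list[str]:
    """Shape checks loosely based on the llmstxt.org proposal (not a formal standard)."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    findings = []
    if not lines or not lines[0].startswith("# "):
        findings.append("error: first non-empty line should be an H1 title, e.g. '# Example Inc'")
    if not any(ln.startswith(">") for ln in lines):
        findings.append("hint: no '>' blockquote summary")
    if "](" not in text:
        findings.append("hint: no markdown links to key pages")
    return findings
```

A `None` from `fetch_llms_txt` matches the missing-file warning; otherwise pass the text to `llms_txt_findings`.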

Audit your server logs for AI bot activity. Use Semrush’s Log File Analyzer or your own log analysis setup. Filter for GPTBot, ChatGPT-User, OAI-SearchBot, and ClaudeBot. If these bots aren’t hitting your key pages, you have a discovery problem to solve before worrying about content structure.
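Without the Log File Analyzer, the same filter can be approximated with a short script over raw access logs. This minimal sketch assumes the Apache/Nginx "combined" log format and matches bots by user-agent substring; the key paths and function name are hypothetical. User-agent strings can be spoofed, so a production check should also verify source IPs against the ranges operators publish, where available.

```python
import re
from collections import Counter, defaultdict

# Apache/Nginx "combined" log format; adjust the pattern if your server logs differently.
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
AI_BOTS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot")


def ai_bot_report(lines, key_paths):
    """Tally AI-bot hits per path and status codes per bot; list key paths no AI bot requested."""
    hits = defaultdict(Counter)      # bot -> Counter({path: hits})
    statuses = defaultdict(Counter)  # bot -> Counter({status: hits})
    for line in lines:
        m = LOG_LINE.match(line)
        if not m:
            continue  # skip lines in other formats
        agent = m["agent"].lower()
        bot = next((b for b in AI_BOTS if b.lower() in agent), None)
        if bot is None:
            continue
        path = m["path"].split("?")[0]  # ignore query strings
        hits[bot][path] += 1
        statuses[bot][m["status"]] += 1
    seen = {p for counter in hits.values() for p in counter}
    unvisited = [p for p in key_paths if p not in seen]
    return hits, statuses, unvisited
```

A key path in `unvisited` is the discovery problem described above; a high share of 4xx or 5xx codes in `statuses` points to access problems instead.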

Identify and review your key pages. List the URLs that explain what you offer, your pricing, and your conversion paths (demo requests, signups, contact forms). For each page, confirm that the essentials are explicitly present in the HTML:

- What you offer
- Who it’s for
- How it’s different
- What the next step is

Reduce barriers to machine readability. Avoid burying key information in PDFs, in images without alt text, or in content that only appears after JavaScript runs. Use clear headings that match the topic of each section. Break dense text into short paragraphs or lists. Keep related information grouped so sections can stand alone.
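Two of these barriers, images without alt text and heading levels that skip, are easy to flag mechanically. This is a minimal sketch with the standard library's `html.parser`; the class and attribute names are hypothetical. Decorative images legitimately use `alt=""`, so review each hit rather than treating it as an error.

```python
from html.parser import HTMLParser


class ReadabilityAudit(HTMLParser):
    """Flag <img> tags with missing or empty alt text, and heading levels that skip (e.g. h1 -> h3)."""

    HEADINGS = {"h1": 1, "h2": 2, "h3": 3, "h4": 4, "h5": 5, "h6": 6}

    def __init__(self):
        super().__init__()
        self.images_without_alt: list[str] = []          # src of each <img> lacking alt text
        self.heading_skips: list[tuple[str, str]] = []   # (previous heading, heading) pairs
        self._last_level = 0

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_without_alt.append(attrs.get("src") or "")
        elif tag in self.HEADINGS:
            level = self.HEADINGS[tag]
            # Going deeper by more than one level (h2 -> h4) suggests a broken hierarchy.
            if self._last_level and level > self._last_level + 1:
                self.heading_skips.append((f"h{self._last_level}", tag))
            self._last_level = level
```

Usage: create an instance, call `feed(html)` and `close()`, then read `images_without_alt` and `heading_skips`.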

These structural principles complement Schema.org structured data and align with Google’s documentation on how search works. Clean HTML, logical heading hierarchies, and crawlable content have always mattered. AI agents just raise the penalty for getting them wrong.
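Schema.org markup is one way to make facts like price explicit for machine readers. Assuming the page uses JSON-LD (rather than microdata or RDFa), this sketch pulls `Product` offers out of `<script type="application/ld+json">` blocks. It deliberately ignores `@graph` wrappers and list-valued `@type`, and the class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.items: list = []
        self._in_jsonld = False
        self._buf: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._in_jsonld = True
            self._buf = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                data = json.loads("".join(self._buf))
            except json.JSONDecodeError:
                return  # malformed block: skipped here, though a real audit should report it
            self.items.extend(data if isinstance(data, list) else [data])


def offer_prices(html: str) -> list[tuple[str, str]]:
    """Return (price, priceCurrency) for each Offer attached to a top-level Product."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    prices = []
    for item in parser.items:
        if not isinstance(item, dict) or item.get("@type") != "Product":
            continue
        offers = item.get("offers", [])
        for offer in offers if isinstance(offers, list) else [offers]:
            if isinstance(offer, dict):
                prices.append((str(offer.get("price", "")), offer.get("priceCurrency", "")))
    return prices
```

Run against the raw HTML from a pricing page, an empty result means an agent reading only structured data would find no machine-readable price there.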

Watch out for

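Over-blocking in robots.txt. Many sites added blanket blocks for AI crawlers in 2024–2025 to prevent training data scraping. If those rules also cover search and user-triggered agents, not just training crawlers, they prevent AI agents from accessing your content during real-time search workflows. OpenAI, for example, documents separate user agents for training (GPTBot), search (OAI-SearchBot), and user-initiated requests (ChatGPT-User). Review your robots.txt rules and consider allowing access on commercial pages you want agents to find.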

Assuming AI visibility equals agent readiness. Appearing in an AI Overview is not the same as being usable by an autonomous agent. A page can surface in a summary but still fail when an agent tries to extract structured pricing or navigate a signup flow. Test your key pages from the perspective of a machine reader, not just a search result.