Noindex vs. robots.txt disallow for millions of stub pages

Summary

An r/TechSEO discussion compared noindex and robots.txt disallow for handling millions of low-value stub pages on news sites trying to preserve crawl budget.

Noindex is more precise when you want pages out of search results, since robots.txt disallow only stops crawling, not indexing. But verify you actually have a crawl budget problem before making large-scale changes.

Check server logs first to confirm Googlebot is wasting time on stub pages, then use noindex for empty author and tag pages while auditing internal links that drive crawl demand.

What happened

An r/TechSEO discussion about managing crawl budget on a large news site sparked debate over whether tag and author stub pages should be blocked via robots.txt or handled with noindex. The original post has since been deleted, but the thread’s responses reveal a common tension for news sites with millions of low-value URLs.

The core question was whether tag pages and empty author pages should be disallowed in robots.txt. Practitioners in the thread offered different approaches, but converged on a key point: confirm you actually have a crawl budget problem before making changes.

Why it matters

News sites generate stub pages at an enormous rate. Every new tag, author profile, or taxonomy page creates a URL that Googlebot will eventually discover and attempt to crawl. For sites with millions of these pages, the concern is that Googlebot spends its crawl budget on low-value URLs instead of fresh articles.

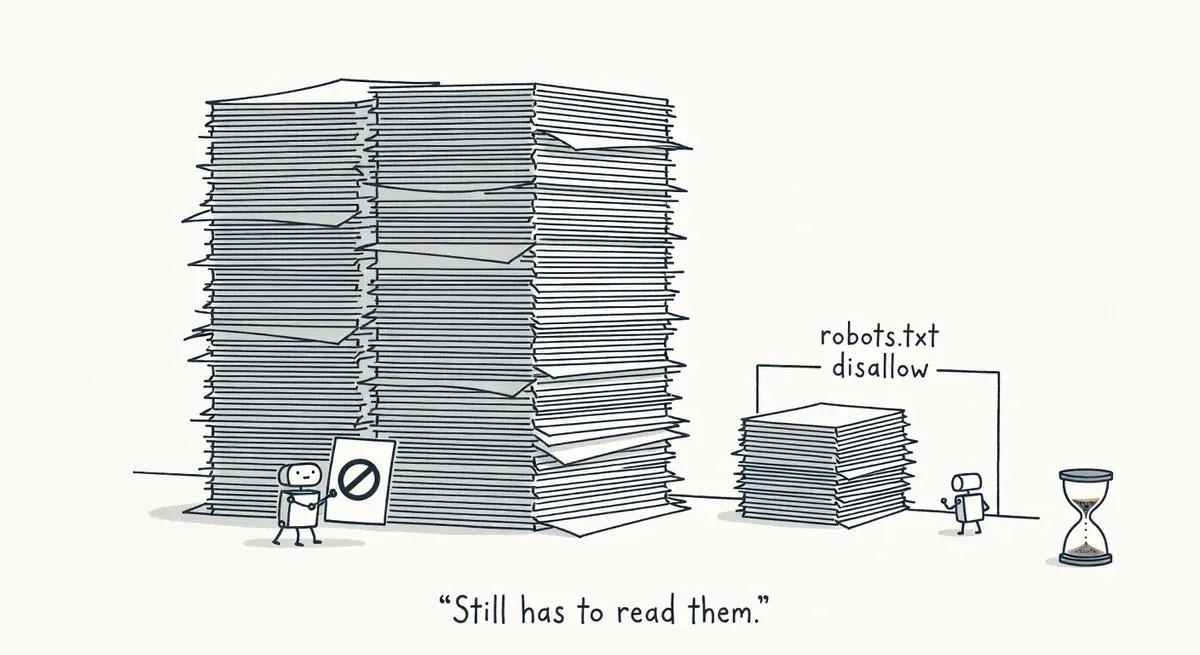

The choice between noindex and robots.txt disallow has real consequences. Blocking a URL via robots.txt prevents Google from crawling it, but Google can still index the URL if it finds links pointing to it; such a URL may appear in search results with no snippet. A noindex directive, delivered as a robots meta tag or an X-Robots-Tag HTTP header, only works if Google crawls the page at least once to see it, but once processed it reliably removes the page from the index.

Google’s documentation on how Search works confirms that crawling and indexing are separate stages. A robots.txt disallow stops crawling but not indexing. If the goal is to keep stub pages out of search results, noindex is the more precise tool.
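To make the crawl/index distinction concrete, here is a minimal Python sketch that models the three outcomes described above for a single URL. It is an illustration under stated assumptions, not Google's actual logic: it relies on the standard library's `urllib.robotparser`, which does simple prefix matching and does not reproduce Google's wildcard (`*`, `$`) or longest-match rules, and the function names and example URLs are hypothetical.

```python
from html.parser import HTMLParser
from urllib import robotparser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name.lower(): (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for token in attr.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(html, headers):
    """True if noindex appears in an X-Robots-Tag header or a robots meta tag.

    Simplification: only the plain comma-separated header form is handled,
    and `headers` is a plain dict with an exact "X-Robots-Tag" key.
    """
    header_tokens = [t.strip().lower() for t in headers.get("X-Robots-Tag", "").split(",")]
    if "noindex" in header_tokens:
        return True
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


def likely_outcome(url, robots_txt, html, headers, agent="Googlebot"):
    """Rough model of what a crawler can learn about `url` (not Google's real pipeline)."""
    rules = robotparser.RobotFileParser()
    rules.parse(robots_txt.splitlines())
    if not rules.can_fetch(agent, url):
        # Never fetched, so any noindex on the page is never seen.
        return "not crawled; URL can still be indexed without a snippet"
    if has_noindex(html, headers):
        return "crawled; noindex seen, page dropped from the index"
    return "crawled; eligible for indexing"
```

With `Disallow: /tag/` in robots.txt, a `/tag/` page carrying `<meta name="robots" content="noindex">` still lands in the "not crawled" branch: the directive exists but cannot be read.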

One practitioner in the thread, rykef, recommended noindex for stub pages and suggested starting with a linking analysis. Their reasoning: news sites are aggressively crawled, so identifying which pages get discovered quickly matters more than broad blocking rules. Changes that affect many pages at once will move the needle more than general site health fixes.

Another commenter, AbleInvestment2866, drew a distinction between tag pages and author pages. Tags should generally be blocked or noindexed. Author pages are worth keeping if they have real content, but empty ones should be handled. They also raised a critical caveat: unless you can confirm there is actually a crawl budget problem, leaving things alone may be safer. Making large-scale changes to a news site’s URL structure carries risk.

What to do

Verify the problem exists before acting. Check server logs to confirm Googlebot is spending a disproportionate share of its requests on stub pages. The Screaming Frog Log File Analyser can process millions of log events and show which URLs bots are crawling and how often. Google Search Console’s Crawl Stats report also breaks crawl requests down by response code, file type, purpose (discovery or refresh), and Googlebot type.
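A first pass at the log check can also be done with a short script. This sketch assumes logs in the common Apache/Nginx combined format and hypothetical `/tag/` and `/author/` URL prefixes; it counts requests whose user agent contains "Googlebot" per page type. User agents can be spoofed, so Google recommends confirming real Googlebot traffic with a reverse DNS lookup before trusting numbers like these.

```python
import re
from collections import Counter

# Hypothetical URL prefixes for stub page types; adjust to your URL scheme.
SECTIONS = {"/tag/": "tag", "/author/": "author"}

# Combined log format: ip ident user [time] "request" status bytes "referer" "agent"
LINE_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def section_for(path):
    """Map a request path to a page-type label, or "other"."""
    for prefix, name in SECTIONS.items():
        if path.startswith(prefix):
            return name
    return "other"


def googlebot_hits_by_section(lines):
    """Count requests whose user agent claims to be Googlebot, per section."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if match is None or "Googlebot" not in match.group("agent"):
            continue  # unparseable line or non-Googlebot traffic
        counts[section_for(match.group("path"))] += 1
    return counts
```

Passing an open file handle (for example inside `with open("access.log") as f:`, filename hypothetical) works because the function only iterates over lines; comparing the "tag" and "author" counts against "other" shows whether stubs really dominate.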

Use noindex instead of robots.txt disallow when you want pages out of the index. A robots.txt disallow prevents crawling but not indexing. If Google discovers a disallowed URL through internal links or sitemaps, it can still index the URL without a snippet. Noindex has to be crawled before it takes effect, so it does not immediately stop requests to those pages, but once processed it reliably removes them from the index.
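The noindex directive can be sent either as `<meta name="robots" content="noindex">` in the page head or as an `X-Robots-Tag: noindex` HTTP response header. As a sketch of the header route, assuming a Python WSGI application, a small middleware can add the header to responses chosen by a predicate. The class name and the `should_noindex` predicate are hypothetical, and the URLs must stay crawlable (not disallowed in robots.txt) for the header to be seen.

```python
class NoindexHeaderMiddleware:
    """WSGI middleware that adds `X-Robots-Tag: noindex` to selected responses.

    `should_noindex` is a hypothetical predicate taking the WSGI environ,
    e.g. one that checks whether a tag or author page is empty.
    """

    def __init__(self, app, should_noindex):
        self.app = app
        self.should_noindex = should_noindex

    def __call__(self, environ, start_response):
        add_header = self.should_noindex(environ)

        def start_response_with_header(status, headers, exc_info=None):
            # Append the header without mutating the app's original list.
            if add_header:
                headers = list(headers) + [("X-Robots-Tag", "noindex")]
            return start_response(status, headers, exc_info)

        return self.app(environ, start_response_with_header)
```

Wrapping an existing app is one line, e.g. `application = NoindexHeaderMiddleware(application, is_empty_stub)`, where `is_empty_stub` is your own (hypothetical) check.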

Audit internal linking to stub pages. On news sites, tag and author pages often receive thousands of internal links from article footers and sidebars. Reducing internal link signals to empty stub pages can decrease how aggressively Googlebot crawls them. Prioritize changes that affect the largest number of pages.
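To find which stub pages attract the most internal links, one option is to parse article HTML (from a crawl export or your CMS) and count links by target path. This Python sketch uses only the standard library; the `/tag/` and `/author/` prefixes and the `(page_url, html)` input shape are assumptions.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

# Hypothetical URL prefixes for stub page types.
STUB_PREFIXES = ("/tag/", "/author/")


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def stub_link_counts(pages, site_host):
    """Count internal links pointing at stub paths.

    `pages` is an iterable of (page_url, html) pairs; links to other hosts
    are ignored. Returns a Counter mapping stub path -> number of links.
    """
    counts = Counter()
    for page_url, html in pages:
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.hrefs:
            # Resolve relative links against the page they appear on.
            target = urlparse(urljoin(page_url, href))
            if target.netloc == site_host and target.path.startswith(STUB_PREFIXES):
                counts[target.path] += 1
    return counts
```

`stub_link_counts(pages, "example.com").most_common(20)` lists the most-linked stubs. A stub linked from nearly every article usually traces back to a shared template element such as a footer or sidebar, which is where a single change affects the most pages.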

Distinguish between page types. Empty tag pages and author profiles with no content are safe candidates for noindex. Author pages with bios, article lists, and E-E-A-T signals may be worth keeping indexed. Apply rules by page type, not with a single blanket directive.
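Per-type rules can be kept explicit in code rather than folded into one blanket directive. The sketch below assumes your CMS exposes a page type, an article count, and whether an author has a bio; the field names and the "empty means zero articles" threshold are hypothetical judgment calls, not rules stated in the thread.

```python
from dataclasses import dataclass


@dataclass
class StubPage:
    page_type: str          # e.g. "tag", "author", "article" (hypothetical labels)
    article_count: int      # number of articles listed on the page
    has_bio: bool = False   # only meaningful for author pages


def should_noindex(page: StubPage) -> bool:
    """Hypothetical per-type policy: noindex only pages with nothing on them."""
    if page.page_type == "tag":
        # Empty tag pages are the clearest noindex candidates.
        return page.article_count == 0
    if page.page_type == "author":
        # Keep author pages that carry a bio or an article list.
        return page.article_count == 0 and not page.has_bio
    # Articles and unrecognized types stay indexable by default.
    return False
```

Keeping the policy in one function also lets the same rule drive the noindex header and sitemap exclusion, so the two cannot disagree.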

Stage the rollout. As one practitioner in the thread noted, there are more ways to make things worse than better on a site this size. Apply noindex to one category of stub pages first, monitor crawl behavior and indexing for two to four weeks, then expand.
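For the monitoring window, one simple metric is the weekly share of Googlebot requests going to the category you noindexed, tracked before and after the change. This sketch assumes you already have `(date, section)` pairs for verified Googlebot requests, for example exported from your log analysis tool; the function name is hypothetical.

```python
from collections import Counter
from datetime import date


def weekly_share(hits, section):
    """Share of each ISO week's Googlebot requests that went to `section`.

    `hits` is an iterable of (datetime.date, section_name) pairs, one per
    verified Googlebot request. Returns {(iso_year, iso_week): share}.
    """
    totals = Counter()
    matching = Counter()
    for day, name in hits:
        iso_year, iso_week, _ = day.isocalendar()
        key = (iso_year, iso_week)
        totals[key] += 1
        if name == section:
            matching[key] += 1
    # Weeks are returned in chronological order.
    return {key: matching[key] / totals[key] for key in sorted(totals)}


if __name__ == "__main__":
    sample = [
        (date(2024, 3, 4), "tag"), (date(2024, 3, 5), "tag"),
        (date(2024, 3, 6), "article"), (date(2024, 3, 7), "article"),
        (date(2024, 3, 11), "tag"), (date(2024, 3, 12), "article"),
    ]
    print(weekly_share(sample, "tag"))
```

A falling share for the noindexed category alongside steady article crawling is the pattern you would hope to see before expanding; a flat share after several weeks may point to stubs that are still heavily linked or listed in sitemaps.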

Watch out for

Robots.txt blocking pages that already have noindex. If you disallow a URL in robots.txt and also add a noindex tag, Google cannot crawl the page to see the noindex directive, so the URL may remain indexed. Pick one approach per URL pattern; if the goal is removal from the index, keep the URL crawlable so the noindex can be read.
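This conflict is easy to audit in bulk. The sketch assumes you can export the list of URLs your CMS marks as noindex (`noindexed_urls` is a hypothetical input) and checks each one against robots.txt with `urllib.robotparser`. That module uses simple prefix matching rather than Google's wildcard rules, so treat its results as a first pass.

```python
from urllib import robotparser


def blocked_noindex_urls(robots_txt, noindexed_urls, agent="Googlebot"):
    """Return noindexed URLs that robots.txt also blocks for `agent`.

    Such URLs cannot be crawled, so their noindex directive is never read.
    """
    rules = robotparser.RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return [url for url in noindexed_urls if not rules.can_fetch(agent, url)]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /tag/\n"
    urls = ["https://example.com/tag/empty", "https://example.com/author/nobody"]
    for url in blocked_noindex_urls(robots, urls):
        print("conflict:", url)
```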

Sitemaps including noindexed URLs. If your sitemap generator automatically includes tag or author pages, noindexed URLs will keep being listed, and Googlebot may continue requesting them. Exclude noindexed URL patterns from sitemap generation to avoid sending mixed signals.
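If you generate sitemaps yourself, the exclusion can happen at build time. This Python sketch uses `xml.etree.ElementTree` from the standard library to write a `urlset` in the sitemaps.org 0.9 namespace, skipping any URL for which a hypothetical `is_noindexed` predicate returns true. Ideally that predicate shares its logic with whatever decides the noindex directive, so the two cannot drift apart.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls, is_noindexed):
    """Return sitemap XML (as a string) listing only indexable URLs.

    `is_noindexed` is a hypothetical predicate; ideally it reuses the same
    rule that decides whether a page emits noindex.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        if is_noindexed(url):
            continue  # keep noindexed stubs out of the sitemap
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" in the text automatically.
        ET.SubElement(url_el, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")
```

A single sitemap file is limited to 50,000 URLs, so a site with millions of pages would split this output across several files referenced from a sitemap index.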