GSC reports resource failures despite 200 OK in server logs

Summary

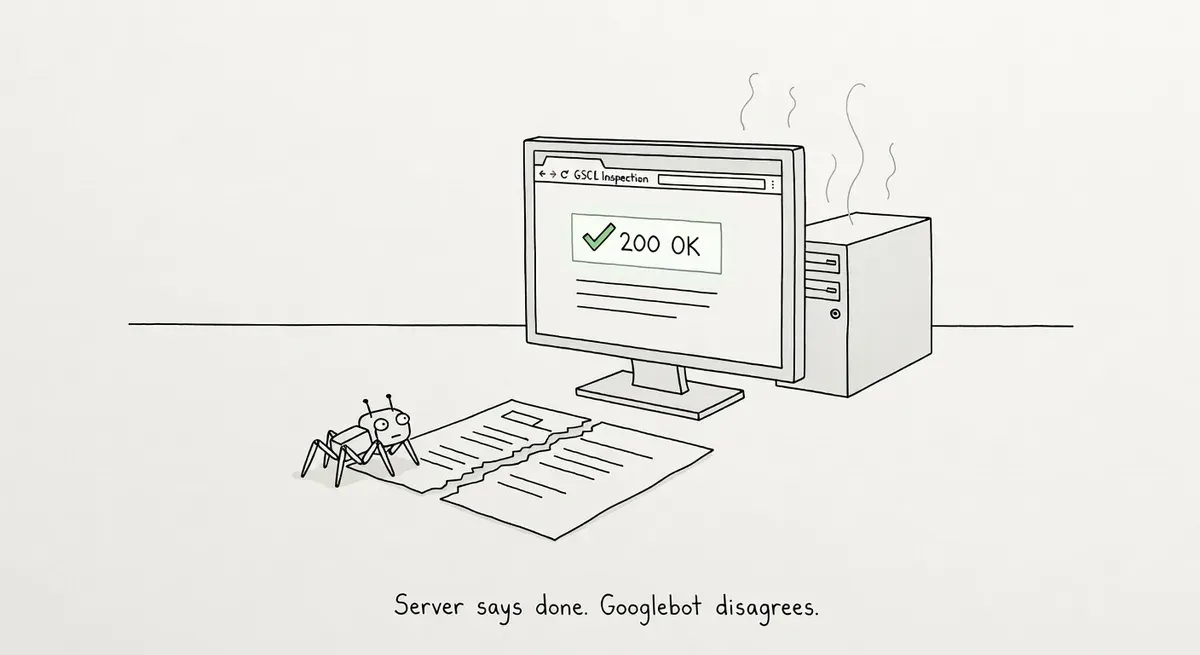

Google Search Console is reporting resource loading failures on pages that show HTTP 200 responses in server logs, creating confusion about whether the issue is real.

Resource failures in GSC affect rendering and indexing. The disconnect often stems from CDN/firewall interception, timing gaps between initial crawl and rendering service requests, or requests from non-Google bots that your logs show as 200s.

Verify resource URLs in GSC's URL Inspection tool, check CDN logs and bot IP ranges, confirm requests are genuine Googlebot via reverse DNS, and audit robots.txt for blocked asset paths.

What happened

A practitioner in r/bigseo reported that Google Search Console is flagging pages with “Page resources couldn’t be loaded / Other error” warnings, even though their server logs show Googlebot receiving HTTP 200 responses for all requests.

The thread describes a debugging scenario many practitioners will recognize: GSC says something is broken, your logs say everything is fine, and you’re left trying to reconcile the two.

Why it matters

Resource loading failures in GSC affect how Google renders pages. If Googlebot can’t load CSS, JavaScript, or image resources during rendering, the indexed version of your page may be incomplete. Pages that rely on client-side rendering are especially vulnerable, since missing JS resources can mean missing content entirely.

The disconnect between server logs and GSC reports is a common source of confusion. Several factors can cause it.

Server logs only record requests that reach your origin server. If a CDN, edge proxy, or firewall intercepts a request before it hits your origin, you’ll see no log entry at all. Googlebot may be getting blocked or rate-limited at a layer you’re not monitoring.

Timing matters too. Google’s rendering service (WRS) fetches resources separately from Googlebot’s initial crawl. The WRS may request resources minutes or hours after the initial HTML fetch. If your server was briefly unavailable, overloaded, or returned an error during that second pass, your access logs for the original crawl would still show 200s.

Another possibility is that the 200s in your logs didn’t come from Googlebot at all. Google’s documentation on verifying crawlers describes how to confirm whether a request genuinely originated from Google: a reverse DNS lookup on the source IP should resolve to a googlebot.com or google.com hostname. If your logs show 200s for requests that only claimed to be Googlebot, you’re looking at the wrong traffic.

Google also publishes IP range JSON files for its various crawler categories: general crawlers like Googlebot, special-purpose crawlers like AdsBot, and user-triggered fetchers like the URL Inspection tool. Cross-referencing your log IPs against these published ranges can help isolate which Google system is making each request.
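The cross-referencing step can be sketched in Python. This is a minimal sketch, assuming the published files use a top-level `prefixes` list whose entries carry either an `ipv4Prefix` or an `ipv6Prefix` key. The sample payload and the function names `load_networks` and `in_published_ranges` are illustrative, not part of any Google tooling, and the sample prefixes stand in for the live data.

```python
import ipaddress
import json

# Illustrative sample only: in practice, load the JSON files Google publishes.
# The two prefixes below are placeholders for this demo.
SAMPLE_RANGES = json.loads("""
{
  "prefixes": [
    {"ipv4Prefix": "66.249.64.0/27"},
    {"ipv6Prefix": "2001:4860:4801:10::/64"}
  ]
}
""")


def load_networks(ranges):
    """Turn a parsed ranges document into a list of ip_network objects."""
    networks = []
    for entry in ranges.get("prefixes", []):
        prefix = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        if prefix:
            networks.append(ipaddress.ip_network(prefix))
    return networks


def in_published_ranges(ip, networks):
    """Return True if the log IP falls inside any of the given networks."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in networks)


networks = load_networks(SAMPLE_RANGES)
for log_ip in ["66.249.64.5", "203.0.113.9"]:
    print(log_ip, in_published_ranges(log_ip, networks))
```

Loading one network list per published file (general crawlers, special-purpose crawlers, user-triggered fetchers) and checking each log IP against all of them would indicate which Google system, if any, made the request.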

What to do

Start by checking whether the resource URLs flagged in GSC are actually reachable by Googlebot. Use the URL Inspection tool’s “View Tested Page” feature to see exactly what Google’s renderer received. Compare the rendered HTML against your source to spot missing resources.

Check your CDN and edge layer logs, not just your origin server. If you use Cloudflare, Fastly, or similar services, look for blocked or challenged requests from Google’s IP ranges. Bot management rules and rate limiting are frequent culprits.
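As a rough illustration of that edge-log check, the sketch below filters log records for error responses served to IPs inside a Google range. Everything here is an assumption for the demo: the record fields (`client_ip`, `path`, `status`) are hypothetical, real CDN exports use provider-specific names, and the single network should be replaced with the full published list.

```python
import ipaddress

# Assumption: one example network; substitute the full published list.
GOOGLE_NETWORKS = [ipaddress.ip_network("66.249.64.0/27")]

# Hypothetical edge log records, not a real CDN export format.
EDGE_LOG = [
    {"client_ip": "66.249.64.10", "path": "/assets/app.js", "status": 403},
    {"client_ip": "66.249.64.11", "path": "/assets/site.css", "status": 200},
    {"client_ip": "203.0.113.9", "path": "/assets/app.js", "status": 403},
]


def failed_google_requests(records, networks):
    """Return records from the given networks that got a 4xx or 5xx at the edge."""
    failures = []
    for record in records:
        address = ipaddress.ip_address(record["client_ip"])
        from_google = any(address in network for network in networks)
        if from_google and record["status"] >= 400:
            failures.append(record)
    return failures


for record in failed_google_requests(EDGE_LOG, GOOGLE_NETWORKS):
    print(record["status"], record["path"], record["client_ip"])
```

Any record this returns is a request that never reached the origin as a 200, which is exactly the kind of entry that origin logs alone cannot show.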

Verify that the requests in your logs are genuinely from Googlebot. Run a reverse DNS lookup on the source IPs:

    host 66.249.66.1

The result should resolve to a *.googlebot.com or *.google.com hostname. Then run a forward DNS lookup on that hostname to confirm it maps back to the same IP:

    host crawl-66-249-66-1.googlebot.com

If the IPs in your logs don’t resolve to Google hostnames, your 200 responses are going to a different bot, not to the real Googlebot that GSC is reporting on.
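For more than a handful of IPs, the reverse-then-forward check can be scripted. The following is a minimal sketch using Python's standard `socket` module. The function name `is_verified_googlebot` and the injectable `reverse` and `forward` parameters are illustrative choices (they let the logic be exercised without network access). `socket.gethostbyname_ex` handles IPv4 only, so IPv6 sources would need a different forward lookup.

```python
import socket

# Hostname suffixes named in the text above.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def is_verified_googlebot(ip, reverse=socket.gethostbyaddr,
                          forward=socket.gethostbyname_ex):
    """Reverse-resolve the IP, check the hostname, then forward-confirm it."""
    try:
        hostname = reverse(ip)[0].lower()
    except OSError:
        return False  # no PTR record, or the lookup failed
    if not hostname.endswith(GOOGLE_SUFFIXES):
        return False  # resolves, but not to a Google hostname
    try:
        addresses = forward(hostname)[2]
    except OSError:
        return False
    # The forward lookup must map back to the original IP.
    return ip in addresses


if __name__ == "__main__":
    # Performs live DNS lookups; the result depends on your resolver.
    print(is_verified_googlebot("66.249.66.1"))
```

Requiring both directions matters because a PTR record alone is controlled by whoever owns the IP block, while the forward record is controlled by the owner of the hostname's domain.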

Check your robots.txt for rules that might block resource paths. A common mistake is disallowing /wp-content/ or /assets/ directories. Googlebot needs access to CSS and JS files to render pages properly.
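A quick way to audit this is to test each flagged resource URL against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content, the example.com URLs, and the helper name `blocked_resources` are hypothetical. Python's parser does not reproduce every detail of Google's matching (such as wildcard handling), so treat the result as an approximation.

```python
from urllib import robotparser

# Hypothetical robots.txt containing the kind of rule described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-content/
"""


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the resource URLs that the given rules disallow for user_agent."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls
            if not parser.can_fetch(user_agent, url)]


urls = [
    "https://example.com/wp-content/themes/site/style.css",
    "https://example.com/index.html",
]
for url in blocked_resources(ROBOTS_TXT, urls):
    print("blocked:", url)
```

Feeding it the resource URLs listed in a GSC report shows which failures come from a disallow rule and which come from something else.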

Finally, review your server’s response times under load. If the WRS requests resources during a traffic spike and the requests time out, GSC can report resource failures even though the page’s HTML was fetched successfully earlier. Server-side caching for static resources can reduce this risk.

Watch out for

CDN bot-management rules silently blocking Google. Many CDN providers apply bot challenges or rate limits that don’t generate origin server logs. You’ll see clean 200s in your logs while Googlebot is actually getting 403s or CAPTCHAs at the edge.

Robots.txt blocking render-critical resources. GSC will report the page itself as loaded but flag resource failures if your robots.txt disallows paths to CSS, JS, or font files. The URL Inspection tool’s robots.txt test only checks the page URL, not every sub-resource.