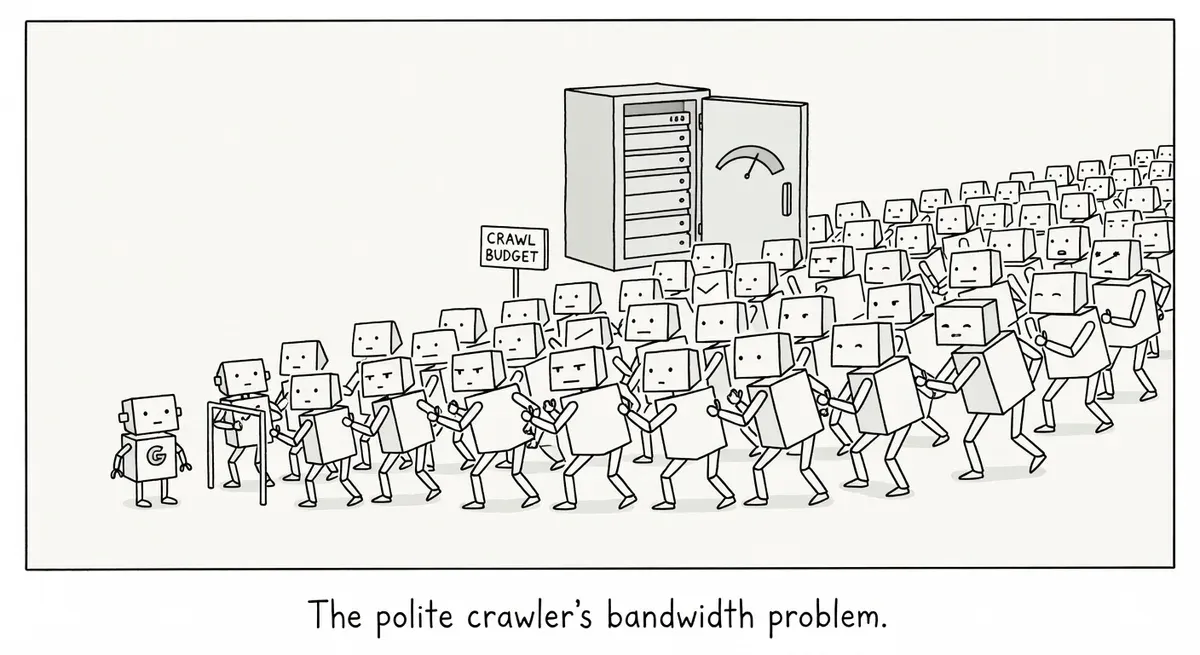

AI bot traffic starves Googlebot of crawl budget on large sites

Summary

AI crawler traffic surged in 2025 and is consuming server bandwidth, crowding out Googlebot, and destabilizing infrastructure on large sites. When AI bots overload a server, Googlebot crawls it less, so fewer pages get discovered and indexed, directly harming organic visibility. Audit your bot traffic, block unwanted AI crawlers in robots.txt, rate-limit aggressive bots with 429 responses, and prioritize Googlebot access in your infrastructure.

What happened

AI bot traffic grew sharply across 2025, and Botify reports that the surge is creating real infrastructure problems for large websites. Crawlers from companies like OpenAI and Anthropic are consuming bandwidth, destabilizing servers, and crowding out the search engine bots that actually drive organic visibility.

The problem is straightforward. Every bot request consumes server resources regardless of intent. When AI crawlers pile on top of existing search engine crawlers, monitoring tools, and malicious scrapers, total request volume can exceed what a site’s infrastructure was built to handle.

Botify flags five specific consequences: bandwidth consumption, uptime and stability risk, security exposure, analytics pollution, and unpredictable cost increases.

The Wikimedia Foundation offers a concrete example. The organization reported bandwidth surges of over 50% last year as AI crawlers scraped content for LLM training. When baseline bandwidth gets eaten by AI bots, less remains for human visitors and for Googlebot.

Why it matters

Crawl budget is finite, and Google ties it to server health: when a site slows down or returns server errors, Googlebot crawls less. If AI bots are hammering your servers, Googlebot may back off before it reaches important pages. For large sites with millions of URLs, this can directly reduce the number of pages Google discovers and indexes.

The stability risks compound the problem. Sudden bot traffic bursts force servers to scale up and down unpredictably, which degrades caching and increases error rates during deployments. Diagnosing issues becomes harder because traffic patterns no longer reflect normal user behavior.

Analytics reliability also takes a hit. Botify notes that ChatGPT prompts have appeared in Google Search Console data as search queries, muddying keyword analysis. While OpenAI has claimed this specific issue is resolved, it illustrates how AI platform activity can quietly distort the data SEOs depend on.

The cost dimension is harder to pin down. Botify acknowledges that exact figures depend on each site’s architecture, but the pattern is consistent: unplanned bot traffic drives unplanned infrastructure costs.

What to do

Audit your bot traffic first. Check server logs to understand which AI crawlers are hitting your site, how frequently, and which URLs they target. You cannot manage what you have not measured. Look for user-agent strings from GPTBot, ClaudeBot, and other known AI crawlers.
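A minimal Python sketch of that audit follows. It assumes your server writes access logs in the common Combined Log Format and matches a short, illustrative list of self-declared AI crawler tokens (GPTBot, ClaudeBot, CCBot, PerplexityBot, Bytespider); adjust both to your own stack. The function name `audit_ai_crawlers` is hypothetical.

```python
import re
from collections import Counter, defaultdict

# Illustrative, not exhaustive: product tokens some AI crawlers send in their user agents.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "CCBot", "PerplexityBot", "Bytespider")

# Combined Log Format: IP, identd, user, [time], "request", status, bytes,
# "referer", "user-agent". Assumes your server logs in this format.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def audit_ai_crawlers(lines):
    """Count requests and requested paths per AI crawler token."""
    hits = Counter()
    paths = defaultdict(Counter)
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue  # skip lines that are not in Combined Log Format
        agent = match.group("agent").lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[token] += 1
                paths[token][match.group("path")] += 1
                break  # attribute each request to one crawler only
    return hits, paths
```

To run it against a real log, pass an open file handle (for example `audit_ai_crawlers(open("access.log"))`, with your own path), then inspect `hits.most_common()` for volume and `paths[token].most_common(10)` for the URLs each crawler targets.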

Block unwanted AI crawlers in robots.txt. If specific AI bots provide no value to your business, disallow them. A simple addition handles this:

User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

If you want AI crawlers to access some content but not all, use targeted disallow rules for high-volume sections. Moz’s robots.txt guide covers the syntax in detail.
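Before deploying targeted rules, it can help to sanity-check them. The sketch below uses Python's standard-library `urllib.robotparser` against a hypothetical robots.txt that keeps GPTBot out of two sections and blocks ClaudeBot entirely. Treat it as a rough check only: this parser applies the first matching rule by simple path prefix and does not support `*` or `$` wildcards, whereas Google resolves conflicts by the most specific (longest) matching rule. Pass the crawler's product token (`GPTBot`), not its full user-agent string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: GPTBot is kept out of two high-volume sections,
# ClaudeBot is blocked entirely, and crawlers with no group are unrestricted.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /search/
Disallow: /archive/

User-agent: ClaudeBot
Disallow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    parser = RobotFileParser()
    # parse() takes an iterable of lines and marks the rules as loaded.
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
checks = [
    ("GPTBot", "https://www.example.com/search/?q=shoes"),     # blocked section
    ("GPTBot", "https://www.example.com/blog/post"),           # allowed
    ("ClaudeBot", "https://www.example.com/blog/post"),        # blocked everywhere
    ("Googlebot", "https://www.example.com/search/?q=shoes"),  # no group: allowed
]
for agent, url in checks:
    print(f"{agent:<10} {url:<45} allowed={parser.can_fetch(agent, url)}")
```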

Consider the noai and noimageai robots meta directives where crawlers support them. These values are not part of the standard directive set Google documents; Google’s robots meta tag documentation explains how page-level meta tags work in general. Some AI crawlers honor these directives, though compliance varies.

Implement HTTP 429 responses for aggressive crawlers. RFC 6585 defines the 429 “Too Many Requests” status code, which indicates that a client has sent too many requests in a given amount of time; the response may include a Retry-After header telling the client how long to wait. Configure your server or CDN to return 429 responses when bot traffic exceeds acceptable thresholds. Cloudflare and similar CDN providers offer bot management features that can help automate this.
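RFC 6585 does not prescribe how a server decides when to send 429. One common approach is a token bucket per client, sketched below in Python. The client key (a crawler's user-agent token or an IP), the `rate` and `burst` values, and the `BotRateLimiter` class are all hypothetical placeholders, not a library API; in practice your web server or CDN feature would do this job, and the sketch only shows the logic.

```python
import math
import time
from typing import Dict, Optional, Tuple


class BotRateLimiter:
    """Token bucket per client key; answers 429 with Retry-After when a bucket is empty."""

    def __init__(self, rate: float, burst: int) -> None:
        self.rate = rate    # sustained requests per second per client (must be > 0)
        self.burst = burst  # maximum requests allowed back to back
        self._buckets: Dict[str, Tuple[float, float]] = {}  # key -> (tokens, last_seen)

    def check(self, client_key: str, now: Optional[float] = None) -> Tuple[int, Dict[str, str]]:
        """Return (status, extra_headers): 200 means let the request through."""
        if now is None:
            now = time.monotonic()
        tokens, last_seen = self._buckets.get(client_key, (float(self.burst), now))
        # Refill in proportion to the time since this client's previous request.
        tokens = min(float(self.burst), tokens + (now - last_seen) * self.rate)
        if tokens >= 1.0:
            self._buckets[client_key] = (tokens - 1.0, now)
            return 200, {}
        self._buckets[client_key] = (tokens, now)
        # Whole seconds until one token is available again (Retry-After uses seconds).
        retry_after = math.ceil((1.0 - tokens) / self.rate)
        return 429, {"Retry-After": str(retry_after)}
```

A request handler would call `limiter.check(key)` and, on 429, send that status with the returned headers. Apply it only to crawlers you intend to throttle, and leave verified search engine crawlers out of it.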

Separate bot traffic in your analytics. Filter known bot user agents from your web analytics and log-based reports, and treat anomalous queries in your GSC analysis with suspicion. Treating bot-inflated numbers as real user signals will lead to bad decisions.
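A simple way to set self-identified bots aside before computing metrics is a user-agent pattern check, sketched here in Python. The record shape (dicts with a `user_agent` key) and the function name are assumptions for illustration, and the pattern only catches bots that announce themselves; it will miss disguised scrapers and can occasionally misclassify a human browser.

```python
import re
from typing import Dict, Iterable, List, Tuple

# Generic words cover many self-declared crawlers (GPTBot, ClaudeBot, Googlebot,
# Bytespider, ...), but scrapers sending a normal browser user agent will not match.
BOT_PATTERN = re.compile(r"bot|crawler|spider|scraper", re.IGNORECASE)

Record = Dict[str, str]


def split_human_and_bot(records: Iterable[Record]) -> Tuple[List[Record], List[Record]]:
    """Partition log or analytics records into (human, bot) lists by user agent."""
    humans: List[Record] = []
    bots: List[Record] = []
    for record in records:
        agent = record.get("user_agent", "")
        # Treat a missing user agent as automated: browsers send one by default.
        if not agent or BOT_PATTERN.search(agent):
            bots.append(record)
        else:
            humans.append(record)
    return humans, bots
```

Compute pageviews and engagement from the `humans` list only, and keep the `bots` list for crawl analysis.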

Prioritize Googlebot access. If you must throttle overall bot traffic, make sure Googlebot and Bingbot are allowlisted and kept out of any 429 rules; Google treats a 429 response as a sign that the server is overloaded and slows its crawling. These are the crawlers that feed your organic search visibility. Everything else is secondary unless you have a specific reason to allow it.
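Allowlisting by user-agent string alone is risky because any client can claim to be Googlebot. Google and Bing both document a two-step DNS check for their crawlers: a reverse DNS lookup on the requesting IP must return a hostname in their domain (for example googlebot.com or google.com for Google, search.msn.com for Bing), and a forward lookup of that hostname must resolve back to the same IP. The Python sketch below implements that check with the standard `socket` module; the function names are hypothetical, and the suffix lists should be checked against each vendor's current documentation.

```python
import ipaddress
import socket
from typing import Callable, Iterable, List, Tuple

# Reverse-DNS suffixes for crawler hostnames, per Google's and Bing's
# verification guides at the time of writing; re-check their current docs.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")
BING_SUFFIXES = (".search.msn.com",)


def forward_addresses(hostname: str) -> List[str]:
    """Resolve a hostname to all of its IPv4 and IPv6 addresses."""
    return [info[4][0] for info in socket.getaddrinfo(hostname, None)]


def verify_crawler_ip(
    ip: str,
    allowed_suffixes: Iterable[str],
    reverse_lookup: Callable[[str], Tuple[str, List[str], List[str]]] = socket.gethostbyaddr,
    forward_lookup: Callable[[str], List[str]] = forward_addresses,
) -> bool:
    """True only if ip -> hostname -> ip round-trips inside an allowed domain."""
    try:
        target = ipaddress.ip_address(ip)
        # Step 1: reverse DNS (PTR) lookup on the requesting IP.
        hostname = reverse_lookup(ip)[0].lower().rstrip(".")
        if not hostname.endswith(tuple(allowed_suffixes)):
            return False
        # Step 2: the forward lookup must resolve back to the same IP.
        resolved = [ipaddress.ip_address(addr) for addr in forward_lookup(hostname)]
    except (OSError, ValueError):
        # No PTR record, DNS failure, or malformed address: treat as unverified.
        return False
    return target in resolved
```

DNS lookups are slow, so cache verified IPs rather than checking on every request. The lookups are passed in as parameters so the logic can be tested without network access.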

Watch out for

Not all AI crawlers identify themselves. Some bots use generic or misleading user-agent strings. Robots.txt rules only work against crawlers that declare who they are and choose to obey the file. Server-side rate limiting based on behavior patterns can catch some of what robots.txt cannot.
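As an illustration of behavior-based detection, the Python sketch below flags any IP that exceeds a request threshold within a sliding time window, whatever user agent it claims. The class name and thresholds are hypothetical, and request rate is only one possible signal; scrapers that rotate IPs can stay under a per-IP limit.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class SlidingWindowDetector:
    """Flags clients whose request count in a sliding window exceeds a limit."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window = window_seconds
        # Per-IP timestamps of recent requests, oldest first.
        self._history: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, client_ip: str, now: float) -> bool:
        """Log one request at time `now`; return True if the IP is over the limit."""
        timestamps = self._history[client_ip]
        timestamps.append(now)
        # Discard requests that have fallen out of the window.
        while timestamps and timestamps[0] <= now - self.window:
            timestamps.popleft()
        return len(timestamps) > self.max_requests
```

A production version would also need to evict idle IPs so the history does not grow without bound, and should exempt verified search engine crawlers.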

Blocking too aggressively can backfire. If your brand benefits from appearing in AI-generated answers, blanket-blocking all AI crawlers removes you from those results. Decide which AI platforms matter to your traffic before setting disallow rules.