Schema markup does not influence LLM parsing

Summary

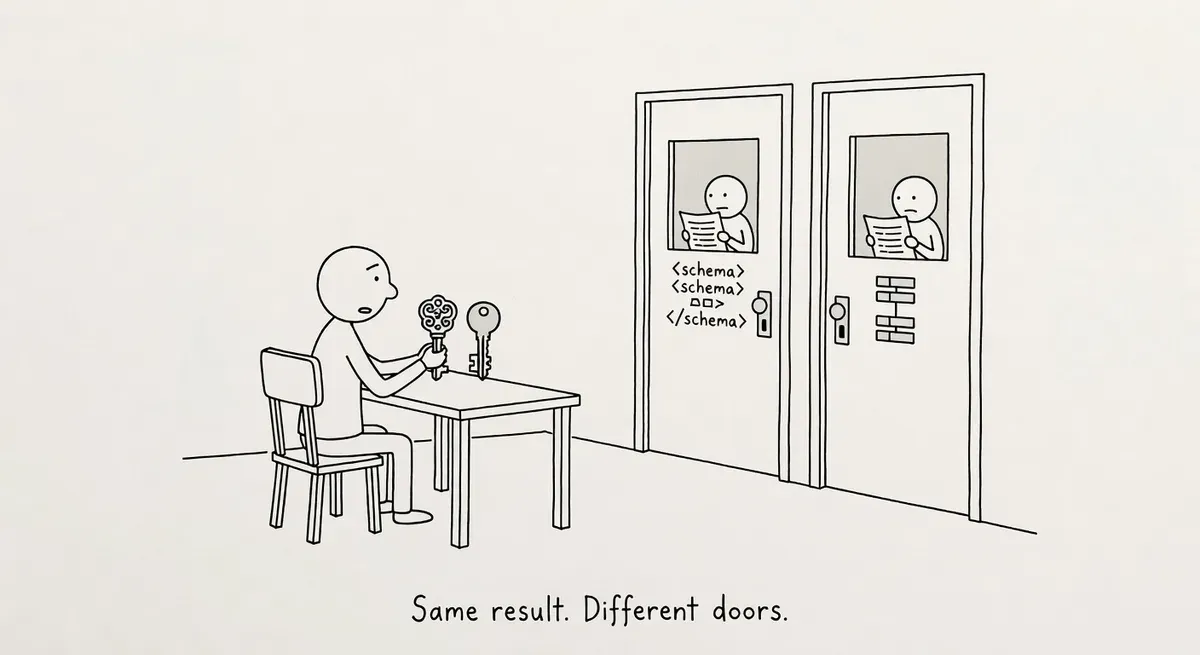

Pedro Dias analyzed vendor claims that schema markup helps AI models parse content and found the core argument flawed for the training layer. Transformer models read text as token sequences, not structured data tags, so schema does not influence how LLMs process language during pre-training.

The picture differs for AI search and agent bots. Schema may have value at the retrieval layer (document selection) and for agents that need structured product data to complete tasks. Reject vendor claims about schema helping LLMs “understand” content, but implement schema for its documented purposes and its potential value to retrieval and agent systems.

What happened

Pedro Dias published an analysis in Search Engine Journal arguing that schema markup plays no role in how large language models parse web content. The piece takes direct aim at vendor claims that structured data “ensures AI engines can parse and connect your content.”

Dias’s central argument is architectural. Transformer models, as described in the foundational “Attention Is All You Need” paper by Vaswani et al., process language as sequences of tokens. There is no parser inside the model looking for schema tags or FAQ markup. The model reads the words. Pre-training data comes largely from the public web, which has never been consistently structured.
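To make the architectural point concrete, here is a minimal Python sketch of what a JSON-LD snippet becomes once it is tokenized. The `toy_tokenize` and `to_ids` functions are hypothetical stand-ins for a real subword tokenizer such as BPE; real tokenizers split text differently, but their output is likewise a flat sequence of integer IDs with no separate channel reserved for structured data.

```python
import re

def toy_tokenize(text: str) -> list[str]:
    # Hypothetical stand-in for a subword tokenizer: split into runs of
    # word characters and single punctuation marks.
    return re.findall(r"\w+|[^\w\s]", text)

def to_ids(tokens: list[str]) -> list[int]:
    # Toy vocabulary built on the fly: each distinct token gets the next integer ID.
    vocab: dict[str, int] = {}
    return [vocab.setdefault(tok, len(vocab)) for tok in tokens]

snippet = '<script type="application/ld+json">{"@type": "Product", "name": "Widget"}</script>'
tokens = toy_tokenize(snippet)
ids = to_ids(tokens)

# Schema keys ("@type"), values ("Product") and HTML syntax all land in the
# same flat sequence; nothing marks them as structure.
print(tokens[:12])
print(ids[:12])
```

The sketch only shows the shape of the input a transformer receives; it says nothing about whether a given pre-training pipeline keeps or strips script blocks.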

The article names three vendors making variations of the same claim. Semrush’s “Technical GEO” pillar presents schema and structured data as ensuring AI engines can parse content. AirOps published a graphic claiming specific percentage lifts from schema and heading changes, but those numbers trace back to its own report, creating a self-citation loop.

Peec AI’s GEO guide acknowledges the probabilistic nature of LLMs but lands on the same prescriptions: heading hierarchy, bullet lists, FAQ markup, and multiple schema types per page.

Why it matters

The distinction Dias draws is between what schema actually does and what vendors claim it does. Schema.org markup has well-defined functions: powering rich results in classical search, supporting entity disambiguation in the knowledge graph, and helping voice assistants pull structured fields. None of those functions involve LLM text comprehension.

The practical risk for SEO teams is misallocated effort. If practitioners spend time layering schema types onto pages specifically to improve AI search visibility, they are optimizing for a mechanism that does not exist in the model architecture.

The argument is architecturally correct for training, but the picture differs across the four types of AI bot that access websites.

Training crawlers like GPTBot and ClaudeBot collect pages that are later processed as token sequences during pre-training. Schema tags are not parsed as structure. Dias is correct here.

Search and retrieval bots like OAI-SearchBot and PerplexityBot use a retrieval layer to select documents before the model generates an answer. Whether that retrieval layer uses structured data for document selection is an open question that Dias’s article acknowledges but does not develop.

User-action fetchers like ChatGPT-User and Claude-User fetch pages in real time when a user asks the model to read a URL. JSON-LD containing product data, pricing, or FAQs could help these bots extract structured information from the page.

AI browsers and agents like Operator, Atlas, and Mariner navigate pages to complete tasks such as purchases or form submissions. Structured data describing products, availability, and pricing has direct utility for agents that need to understand what a page offers.
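For user-action fetchers and agents, the relevant operation is ordinary extraction rather than model comprehension. A minimal Python sketch using only the standard library's `html.parser` shows how a fetcher could pull JSON-LD blocks out of a page before any text reaches a model. The class name `JsonLdExtractor` and the sample page are hypothetical, and this is not a description of how any named bot actually works.

```python
import json
from html.parser import HTMLParser

class JsonLdExtractor(HTMLParser):
    """Collects and parses the contents of <script type="application/ld+json"> blocks."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.items: list[dict] = []

    def handle_starttag(self, tag, attrs):
        # Only JSON-LD script blocks are of interest; other scripts are ignored.
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                parsed = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # skip malformed blocks rather than failing the whole page
            # A block may hold a single object or a list of objects.
            self.items.extend(parsed if isinstance(parsed, list) else [parsed])

page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Example Widget",
 "offers": {"@type": "Offer", "price": "19.99", "priceCurrency": "USD"}}
</script>
</head><body><p>Example Widget, now in stock.</p></body></html>"""

extractor = JsonLdExtractor()
extractor.feed(page)
extractor.close()
for item in extractor.items:
    print(item.get("@type"), item.get("name"), item.get("offers", {}).get("price"))
# Product Example Widget 19.99
```

The fields come out as typed key/value pairs without any language understanding, which is why product data in JSON-LD plausibly matters more to task-completing agents than to a model's pre-training.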

When evaluating any claim about schema and AI, ask which layer it affects: training, retrieval, or action. The answer differs for each.
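One practical way to apply this layer question is to tag bot traffic in server logs by layer. The Python sketch below maps the user-agent tokens named above to bot type and layer. The `classify_user_agent` helper and the sample string are hypothetical, substring matching is only a rough heuristic, and AI browsers and agents such as Operator, Atlas, and Mariner are deliberately left unmatched on the assumption that they may not announce a distinct token.

```python
from enum import Enum
from typing import Optional, Tuple

class Layer(Enum):
    TRAINING = "training"
    RETRIEVAL = "retrieval"
    ACTION = "action"

# User-agent tokens for the bots named in the article, grouped by type and layer.
# Assumption: confirm tokens against each vendor's crawler documentation and, where
# vendors publish IP ranges, verify requests against them before trusting the UA.
BOT_TOKENS = {
    "GPTBot": ("training crawler", Layer.TRAINING),
    "ClaudeBot": ("training crawler", Layer.TRAINING),
    "OAI-SearchBot": ("search/retrieval bot", Layer.RETRIEVAL),
    "PerplexityBot": ("search/retrieval bot", Layer.RETRIEVAL),
    "ChatGPT-User": ("user-action fetcher", Layer.ACTION),
    "Claude-User": ("user-action fetcher", Layer.ACTION),
}

def classify_user_agent(user_agent: str) -> Tuple[str, Optional[Layer]]:
    ua = user_agent.lower()
    for token, (bot_type, layer) in BOT_TOKENS.items():
        if token.lower() in ua:
            return bot_type, layer
    # Agents that browse with ordinary browser user agents fall through here.
    return "unknown", None

# Illustrative user-agent string, not copied from a real log.
print(classify_user_agent("Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"))
```

Grouping hits this way lets a team check which layer a schema change could plausibly affect before attributing any traffic shift to it.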

The self-citation problem Dias identifies in vendor research deserves attention. When a vendor’s “data-backed” claims trace back to the vendor’s own report, the evidence loop is closed. SEO teams building GEO strategies on those numbers are building on unverified foundations.

Google recently began surfacing AI Mode traffic data in Search Console, giving practitioners real performance signals. Actual click and impression data from GSC is more reliable for measuring AI search performance than vendor infographics.

What to do

Reject vendor claims that schema helps LLMs “parse” or “understand” your content. That mechanism does not exist in transformer architecture. But do not conclude schema is irrelevant to AI. Schema has documented value in classical search (rich results, knowledge graphs, voice assistants) and may have undocumented value at the retrieval and agent layers described above.

Implement schema for what it demonstrably does, not for what GEO vendors claim. If you already have Product, Article, or FAQPage markup, keep it. If you are deciding where to invest next, prioritize structured data that helps agents complete tasks (product pricing, availability, specifications) over decorative schema types layered for “AI visibility.”
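As a sketch of the task-oriented markup this recommends, the Python helper below emits a schema.org `Product` block with an `Offer` carrying `price`, `priceCurrency`, and `availability`, plus specifications as `additionalProperty` entries of type `PropertyValue`. The helper name `product_jsonld` and the sample values are hypothetical, and whether any particular agent reads these fields is not established.

```python
import json

def product_jsonld(name: str, sku: str, price: float, currency: str,
                   in_stock: bool, specs: dict[str, str]) -> str:
    """Build a schema.org Product JSON-LD block with task-relevant fields:
    price, availability, and specifications."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            "availability": "https://schema.org/InStock" if in_stock
                            else "https://schema.org/OutOfStock",
        },
        # Specifications expressed as name/value pairs.
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in specs.items()
        ],
    }
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'

print(product_jsonld("Example Widget", "EW-100", 19.99, "USD", True,
                     {"Weight": "1.2 kg", "Color": "Blue"}))
```

Generating the block from the same product record that renders the visible page keeps price and availability in the markup consistent with what users and agents see.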

Audit any GEO or AEO strategy your team has adopted. Check whether the recommended tactics trace back to independent research or to the vendor selling the solution. If the methodology leads back to the vendor’s own report, weight those claims accordingly.
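The audit can be framed as a walk over a citation graph: start from the claim, follow every source it cites, and check whether anything reachable was published by someone other than the vendor. The Python sketch below does this breadth-first. All names and data are hypothetical and invented for illustration; in practice the graph would be filled in by hand while reading each vendor's methodology.

```python
from collections import deque

def reachable_sources(claim: str, cites: dict[str, list[str]]) -> set[str]:
    """Breadth-first walk of the citation graph starting from a claim."""
    seen = {claim}
    queue = deque([claim])
    while queue:
        node = queue.popleft()
        for ref in cites.get(node, []):
            if ref not in seen:  # guards against citation cycles
                seen.add(ref)
                queue.append(ref)
    return seen

def is_closed_loop(claim: str, cites: dict[str, list[str]],
                   publisher: dict[str, str], vendor: str) -> bool:
    """True if every source reachable from the claim was published by the vendor.
    Sources with an unknown publisher count as not vendor-published."""
    return all(publisher.get(src) == vendor for src in reachable_sources(claim, cites))

# Hypothetical example: a graphic citing only the vendor's own report.
cites = {"lift_graphic": ["benchmark_report"], "benchmark_report": []}
publisher = {"lift_graphic": "ExampleVendor", "benchmark_report": "ExampleVendor"}
print(is_closed_loop("lift_graphic", cites, publisher, "ExampleVendor"))  # True

# Adding one independently published source opens the loop.
cites["lift_graphic"].append("independent_study")
publisher["independent_study"] = "University Lab"
print(is_closed_loop("lift_graphic", cites, publisher, "ExampleVendor"))  # False
```

An open loop is only a first filter: an independent source still has to actually support the specific numbers being quoted.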

Use Search Console’s AI Mode data to measure what actually drives AI search traffic to your site. Real performance data from GSC beats vendor infographics with self-sourced percentages.

Watch out for

Conflating retrieval with generation. Some vendor claims may hold partial truth at the retrieval layer (how a RAG system selects source documents) rather than the generation layer (how the LLM processes text). Dias acknowledges Peec AI makes this distinction. The problem is when vendors blur the two and present retrieval-layer tactics as though they affect how the model “understands” content.

Self-citing vendor research. Any study claiming specific percentage lifts from schema or heading changes should be checked for methodology independence. If the vendor produced the study, ran the tests, and sells the solution, treat the numbers as marketing until independently replicated.
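The first caveat, retrieval versus generation, is easier to see in code. In the minimal Python sketch below, structured data can plausibly matter when a retrieval layer selects documents, but the generation step only ever receives flattened text. The names `Doc`, `retrieve`, and `build_prompt`, the naive term-overlap scoring, and the `require_type` filter are all hypothetical; whether any production AI search system filters on schema this way remains the open question raised earlier.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Doc:
    url: str
    text: str
    # Hypothetical: schema.org @type values found in the page's JSON-LD.
    jsonld_types: set[str] = field(default_factory=set)

def retrieve(query: str, docs: list[Doc], k: int = 2,
             require_type: Optional[str] = None) -> list[Doc]:
    """Retrieval layer: choose which documents the model will see.
    Consulting structured fields here is an assumption, not a known behavior."""
    terms = set(query.lower().split())
    pool = [d for d in docs if require_type is None or require_type in d.jsonld_types]
    # Rank by naive term overlap; real systems use far richer scoring.
    return sorted(pool, key=lambda d: len(terms & set(d.text.lower().split())),
                  reverse=True)[:k]

def build_prompt(query: str, selected: list[Doc]) -> str:
    """Generation layer: the model receives only flattened text."""
    context = "\n\n".join(d.text for d in selected)
    return f"Context:\n{context}\n\nQuestion: {query}"

docs = [
    Doc("https://example.com/widget", "Example Widget costs 19.99 USD", {"Product"}),
    Doc("https://example.com/blog", "A post about widget history"),
]
selected = retrieve("widget price", docs, require_type="Product")
print([d.url for d in selected])  # ['https://example.com/widget']
print(build_prompt("widget price", selected))
```

Even when the schema type decides which page is selected, the prompt contains only the page text, which is the distinction vendors blur when they say schema helps a model “understand” content.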