Google's Web Bot Auth adds cryptographic bot identity

Summary

Google published documentation for Web Bot Auth, an experimental protocol that lets bots prove their identity with cryptographic signatures instead of spoofable user-agent headers.

It only covers some AI agents on Google infrastructure right now, and not every request is signed yet.

No action needed today. Keep your existing bot verification in place. If you want to prepare, read the docs and start logging signed requests where they appear.

What happened

Google published developer documentation for Web Bot Auth, a new cryptographic protocol that lets bots sign their HTTP requests. The protocol is experimental. Google is testing it with some AI agents hosted on its own infrastructure.

SE Roundtable reported the launch on May 5, 2026.

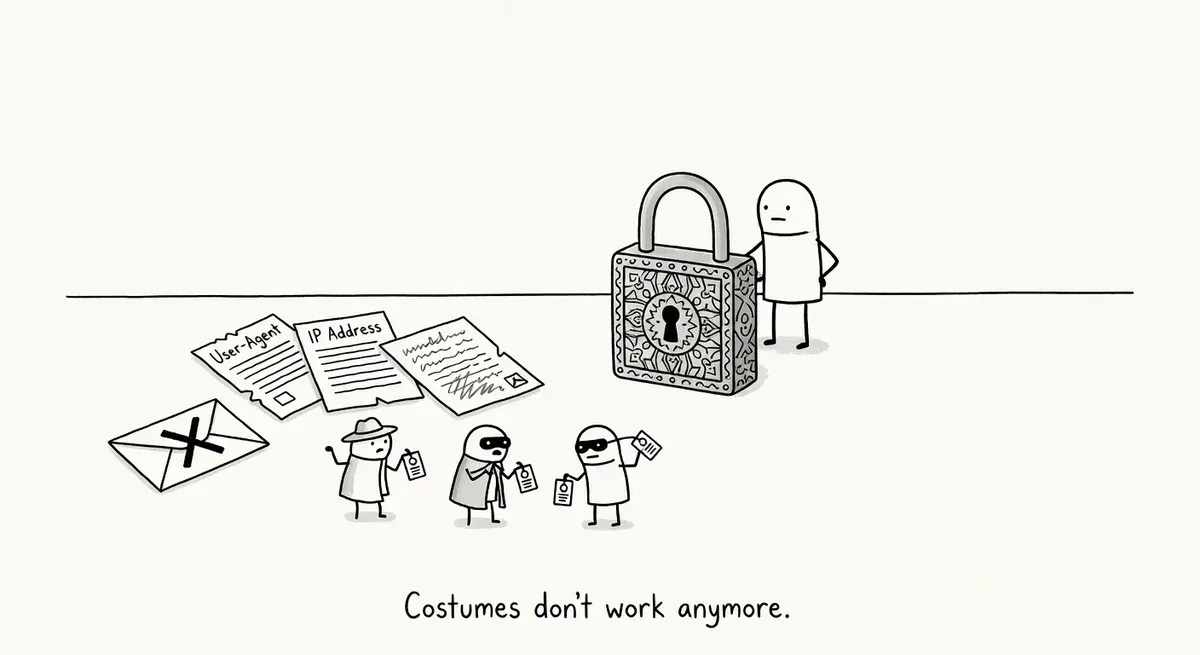

Web Bot Auth is designed to move beyond the current trust model, in which sites verify bots through self-reported user-agent headers and reverse DNS lookups against known IP ranges. Instead, agents cryptographically sign their requests, giving site owners a way to confirm that traffic genuinely comes from the claimed bot provider. During the experimental phase it supplements those existing checks rather than replacing them.

Google’s documentation describes three benefits:

- Cryptographic certainty: Verified identity that moves beyond spoofable headers and decouples agent identity from IP addresses.

- Better observability: Clearer data on how agents interact with your content.

- Future-proofing: A foundation for mutual trust between agent providers and websites.

The protocol is based on an IETF Internet-Draft and builds on HTTP Message Signatures (RFC 9421), an IETF Proposed Standard for signing HTTP messages. Google explicitly notes that not all Google user agents use Web Bot Auth yet, and that even agents that support it do not sign every request.

Why it matters

Bot spoofing is a real and growing problem. The Imperva 2026 Bad Bot Report highlights the increasing difficulty of distinguishing legitimate automated traffic from malicious bots, especially as agentic AI blurs the lines. Current verification methods have clear weaknesses. User-agent strings are trivially faked. Reverse DNS verification is more reliable but ties identity to IP ranges, which creates maintenance headaches as providers scale infrastructure.

Cryptographic signing addresses both problems at once. A valid signature proves the request came from an entity holding the private key that matches the provider's published public key, regardless of which IP address sent it.

The timing matters too. AI agents that browse the web on behalf of users are multiplying fast. Google testing the protocol with its own AI agents signals that it expects agent-to-site authentication to become standard plumbing. If Web Bot Auth or something like it gains adoption, sites could make granular access decisions per agent with high confidence in the claimed identity.

For site owners who already manage crawler access through robots.txt and IP allowlists, the protocol offers a potential upgrade path. Instead of maintaining IP range lists that change when providers update infrastructure, you could verify a cryptographic signature against a published public key.

What to do

No immediate action is required. The protocol is experimental and Google is not yet signing all requests.

That said, practitioners managing bot access policies should familiarize themselves with Google’s Web Bot Auth documentation. Understanding the request-signing flow now will save time if adoption accelerates.
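One low-effort way to get familiar with the signing flow is to log which incoming requests carry signature headers at all. The sketch below is a minimal, framework-agnostic example. `Signature` and `Signature-Input` are the header names defined by RFC 9421; `Signature-Agent` is an assumption taken from the Web Bot Auth Internet-Draft and may change. The function and logger names are hypothetical. It only detects the headers and does not verify anything.

```python
import logging
from typing import Mapping

logger = logging.getLogger("bot_auth")

# Header names from RFC 9421 (HTTP Message Signatures).
REQUIRED_HEADERS = ("signature", "signature-input")
# Assumption: header name used by the Web Bot Auth Internet-Draft.
OPTIONAL_HEADERS = ("signature-agent",)


def describe_signature_headers(headers: Mapping[str, str]) -> dict:
    """Report which signature-related headers a request carries."""
    lowered = {name.lower(): value for name, value in headers.items()}
    present = [h for h in REQUIRED_HEADERS + OPTIONAL_HEADERS if h in lowered]
    return {
        # "signed" only means the headers are present, not that they are valid.
        "signed": all(h in lowered for h in REQUIRED_HEADERS),
        "present": present,
        "user_agent": lowered.get("user-agent", ""),
    }


def log_if_signed(headers: Mapping[str, str], remote_ip: str) -> bool:
    """Log a line for requests that carry signature headers; return True if so."""
    info = describe_signature_headers(headers)
    if info["signed"]:
        logger.info(
            "signed request ip=%s ua=%r headers=%s",
            remote_ip,
            info["user_agent"],
            info["present"],
        )
    return info["signed"]
```

Calling `log_if_signed(request_headers, client_ip)` from an existing request hook would give a rough count of signed traffic without changing any access decisions.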

Keep your existing bot verification methods in place. Google’s documentation explicitly says to continue using established methods like reverse DNS verification. Web Bot Auth is additive, not a replacement, during the experimental phase.
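For reference, the established reverse DNS check can be sketched as a forward-confirmed lookup: resolve the IP to a hostname, confirm the hostname belongs to a trusted domain, then resolve the hostname back and confirm it maps to the same IP. The suffix list below is an assumption based on hostnames commonly documented for Google crawlers, so check Google's current documentation before relying on it. The resolver functions are injectable so the logic can be exercised without network access.

```python
import ipaddress
import socket
from typing import Callable, Iterable, Tuple

# Assumption: hostname suffixes commonly documented for Google crawlers.
TRUSTED_SUFFIXES: Tuple[str, ...] = (
    ".googlebot.com",
    ".google.com",
    ".googleusercontent.com",
)


def _reverse_lookup(ip: str) -> str:
    """PTR lookup: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward_lookup(hostname: str) -> Iterable[str]:
    """Forward lookup: hostname to IPv4/IPv6 addresses."""
    return [info[4][0] for info in socket.getaddrinfo(hostname, None)]


def verify_by_reverse_dns(
    ip: str,
    reverse: Callable[[str], str] = _reverse_lookup,
    forward: Callable[[str], Iterable[str]] = _forward_lookup,
    trusted_suffixes: Tuple[str, ...] = TRUSTED_SUFFIXES,
) -> bool:
    """Return True only if the IP passes a forward-confirmed reverse DNS check."""
    try:
        client = ipaddress.ip_address(ip)
        hostname = reverse(ip).rstrip(".").lower()
        if not hostname.endswith(trusted_suffixes):
            return False
        # The hostname must resolve back to the same address.
        return client in {ipaddress.ip_address(a) for a in forward(hostname)}
    except (OSError, ValueError):
        # Lookup failures and malformed addresses count as unverified.
        return False
```

Production use would typically add caching and timeouts, since two DNS lookups per request are expensive.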

If you run a site that gates content or enforces differential access for AI crawlers versus search crawlers, watch how the protocol develops. The ability to verify bot identity cryptographically would make those access controls more reliable than header-based checks.

For teams building server-side middleware or WAF rules, RFC 9421 (HTTP Message Signatures) is the underlying standard worth reading. Any future implementation will involve validating signatures against that spec.
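To give a feel for what validating against RFC 9421 involves, the sketch below rebuilds the "signature base", the exact string a verifier reconstructs from the request and then checks against the signature with the signer's public key. It is deliberately simplified: it handles a single `Signature-Input` member, supports only a few derived components, ignores component parameters, and performs no cryptographic check. A real implementation should use a proper structured-fields parser and a vetted crypto library. The function names, the `signature-agent` component, and the `tag="web-bot-auth"` parameter in the usage example are assumptions for illustration.

```python
import re
from typing import List, Mapping, Tuple

# Matches one dictionary member such as: sig1=("@authority" "x");created=1
_MEMBER = re.compile(r'^([a-z*][a-z0-9_.*-]*)=(\(([^)]*)\).*)$')


def parse_signature_input(value: str) -> Tuple[str, List[str], str]:
    """Return (label, covered_components, raw_params) for one member."""
    match = _MEMBER.match(value.strip())
    if not match:
        raise ValueError("unsupported Signature-Input value")
    label, raw_params, inner = match.group(1), match.group(2), match.group(3)
    components = re.findall(r'"([^"]+)"', inner)
    return label, components, raw_params


def build_signature_base(
    method: str,
    authority: str,
    path: str,
    headers: Mapping[str, str],
    signature_input: str,
) -> str:
    """Rebuild the RFC 9421 signature base for a request (simplified)."""
    _label, components, raw_params = parse_signature_input(signature_input)
    lowered = {k.lower(): v.strip() for k, v in headers.items()}
    derived = {
        "@method": method.upper(),
        "@authority": authority.lower(),
        "@path": path or "/",
    }
    lines = []
    for name in components:
        source = derived if name.startswith("@") else lowered
        if name not in source:
            raise ValueError(f"covered component missing: {name}")
        lines.append(f'"{name}": {source[name]}')
    # The final line always carries the signature parameters themselves.
    lines.append(f'"@signature-params": {raw_params}')
    return "\n".join(lines)
```

For example, `build_signature_base("GET", "example.com", "/", {"Signature-Agent": '"https://agent.example"'}, 'sig1=("@authority" "signature-agent");created=1700000000;keyid="k1";tag="web-bot-auth"')` yields three lines: one per covered component, followed by the `"@signature-params"` line. The encoded bytes of that string are what the signature in the `Signature` header would be verified against.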

Watch out for

Do not drop existing verification. Google warns it is not signing every request, even from agents that support the protocol. If you switch to signature-only verification now, you will block legitimate Google traffic that arrives unsigned.
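The additive approach can be expressed as a small decision function: a valid signature is sufficient, but an absent one simply falls back to whatever check you already run. This is a sketch of one possible policy, not a recommendation from Google. The names, the result labels, and the choice to send invalid signatures to manual review are all assumptions.

```python
from enum import Enum


class SignatureStatus(Enum):
    ABSENT = "absent"    # no signature headers on the request
    VALID = "valid"      # signature verified against a trusted public key
    INVALID = "invalid"  # signature present but verification failed


def decide(signature: SignatureStatus, legacy_verified: bool) -> str:
    """Combine signature status with an existing check such as reverse DNS."""
    if signature is SignatureStatus.VALID:
        return "verified:signature"
    if signature is SignatureStatus.INVALID:
        # Assumption: a failed signature is flagged for review, not trusted
        # and not silently dropped, while the protocol is experimental.
        return "review"
    # Unsigned traffic is expected today, so fall back to the existing check.
    return "verified:legacy" if legacy_verified else "unverified"
```

With this shape, unsigned requests from legitimate Google agents continue to pass through the existing verification path.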

Protocol scope is narrow for now. Only some AI agents on Google infrastructure are participating. Googlebot for search indexing is not mentioned as a current participant. Do not assume all Google crawlers will send signed requests in the near term.