Managed WordPress hosts silently block AI crawlers

Summary

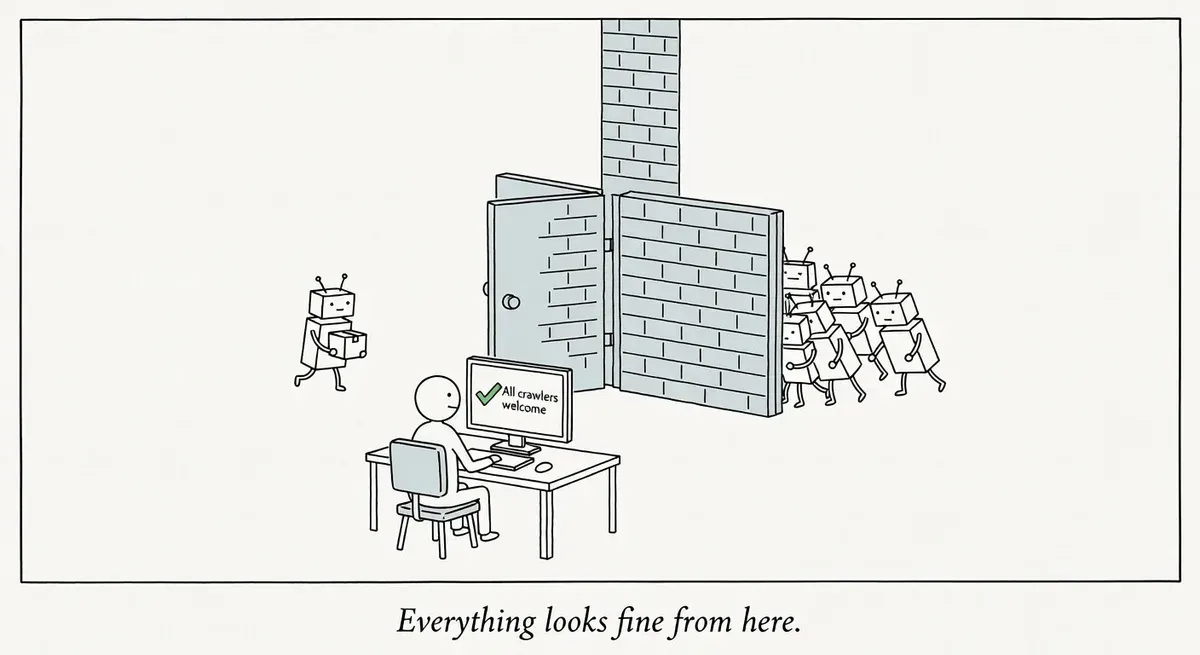

Managed WordPress hosts are silently blocking AI crawlers at the server level, often without site owners' knowledge or consent.

AI search products distinguish between training crawlers and search crawlers. Blocking search crawlers costs you visibility in ChatGPT search or Claude results, while blocking both types eliminates organic AI traffic entirely. Most publishers should allow search crawlers while blocking training bots.

Check your live robots.txt for disallow rules targeting GPTBot, OAI-SearchBot, ClaudeBot, Claude-User, Claude-SearchBot, and PerplexityBot; contact your host if blocks exist.

What happened

Several managed WordPress hosting platforms are blocking AI crawlers by default, according to a report from Search Engine Land. Site owners on these platforms may not realize their content is invisible to AI-powered search products like ChatGPT search, Perplexity, and Claude’s web features.

The blocks typically happen at the server or platform level through robots.txt rules or firewall configurations that the site owner never opted into. Because the blocking is silent, many publishers only discover it after noticing their content missing from AI search results.

Why it matters

AI search products now use distinct crawler user agents, each controlling a different type of access. Blocking them has real consequences that vary by bot.

OpenAI’s documentation lists four bots:

- GPTBot crawls content for training generative AI models. Blocking it prevents your content from entering training datasets.

- OAI-SearchBot surfaces websites in ChatGPT search results. Sites that block OAI-SearchBot won’t appear in ChatGPT search answers, though they can still show as navigational links.

- OAI-AdsBot crawls pages to assess ad placement quality. Relevant for sites running OpenAI-powered ad integrations.

- ChatGPT-User fetches pages in real time when a ChatGPT user asks the model to browse a specific URL. OpenAI notes that because these fetches are user-initiated, robots.txt rules may not apply. Blocking via robots.txt is not guaranteed to prevent access.

Anthropic documents three bots for Claude:

- ClaudeBot gathers content for model training.

- Claude-User retrieves content when a user asks Claude a question. Blocking it reduces your site’s visibility in user-directed web search.

- Claude-SearchBot indexes content to improve search result quality.

Perplexity similarly documents separate crawler user agents with independent robots.txt controls.

The distinction between training crawlers and search crawlers is the crux of the issue. A site owner might reasonably want to block training bots while keeping search bots enabled. Platform-level blocking removes that choice entirely.

For publishers who depend on organic traffic, blanket AI bot blocking could mean losing visibility in a growing channel. For e-commerce sites using product feeds or content marketing, the impact compounds as AI search usage grows.

What to do

Check whether your managed WordPress host is blocking AI crawlers. The fastest method is to inspect your live robots.txt file at yourdomain.com/robots.txt and look for disallow rules targeting these user agents:

- GPTBot

- OAI-SearchBot

- OAI-AdsBot

- ChatGPT-User

- ClaudeBot

- Claude-User

- Claude-SearchBot

- PerplexityBot

If your host manages robots.txt at the server level, you may not see these rules in your WordPress settings. Check the live file directly via browser or curl.
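The check above can also be scripted. The following is a minimal sketch, assuming Python 3.9+ and only the standard library. The helper names `blocked_agents` and `fetch_robots_txt` are hypothetical, and the parser in `urllib.robotparser` may interpret edge cases differently from each vendor's own crawler, so treat the result as a first pass rather than a verdict.

```python
from urllib import robotparser
import urllib.request

# User agents named in this article.
AI_AGENTS = [
    "GPTBot", "OAI-SearchBot", "OAI-AdsBot", "ChatGPT-User",
    "ClaudeBot", "Claude-User", "Claude-SearchBot", "PerplexityBot",
]


def blocked_agents(robots_txt: str, path: str = "/") -> list[str]:
    """Return the AI user agents that robots_txt disallows from fetching path."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, path)]


def fetch_robots_txt(url: str) -> str:
    """Download the live robots.txt (hypothetical helper; needs network access)."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")
```

To use it, pass the live file to the checker, for example `blocked_agents(fetch_robots_txt("https://yourdomain.com/robots.txt"))`. An empty list means robots.txt itself does not block any of the listed agents. It says nothing about blocks applied at the CDN or WAF level.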

Contact your host’s support team if you find unexpected blocks. Ask whether they apply AI crawler restrictions at the server, CDN, or WAF level. Some platforms use Cloudflare or AWS WAF rules that block bot traffic before it reaches your robots.txt.

Decide which bots you actually want to allow. A reasonable starting position for most publishers is to block training crawlers (GPTBot, ClaudeBot) while allowing search and browsing crawlers (OAI-SearchBot, Claude-SearchBot, Claude-User, PerplexityBot). Note that ChatGPT-User may not respect robots.txt because its fetches are user-initiated. OpenAI also notes that if a site allows both GPTBot and OAI-SearchBot, it may use results from a single crawl for both purposes. A user agent that robots.txt does not mention is allowed by default, so to keep content out of training data while staying visible in search, disallow GPTBot explicitly rather than leaving it unlisted.
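As an illustration of that starting position, here is a minimal sketch that generates the matching robots.txt groups and sanity-checks them with Python's standard-library parser. The function name `build_robots_txt` and the two lists are assumptions made for this example. A real site would merge these groups into its existing robots.txt rather than replace the file.

```python
from urllib import robotparser

# Policy from the paragraph above; adjust the lists to your own decision.
TRAINING_BOTS = ["GPTBot", "ClaudeBot"]  # blocked in this sketch
SEARCH_BOTS = ["OAI-SearchBot", "Claude-SearchBot", "Claude-User", "PerplexityBot"]  # allowed


def build_robots_txt(blocked: list[str], allowed: list[str]) -> str:
    """Return robots.txt text with one group per user agent."""
    groups = [f"User-agent: {agent}\nDisallow: /" for agent in blocked]
    groups += [f"User-agent: {agent}\nAllow: /" for agent in allowed]
    return "\n\n".join(groups) + "\n"


policy = build_robots_txt(TRAINING_BOTS, SEARCH_BOTS)
print(policy)

# Parse the generated text back to confirm it expresses the intended policy.
parser = robotparser.RobotFileParser()
parser.parse(policy.splitlines())
```

After parsing, `parser.can_fetch("GPTBot", "/")` should be false and `parser.can_fetch("OAI-SearchBot", "/")` should be true. This only confirms what the file says. Whether a crawler honors it, and whether a firewall intervenes first, has to be checked separately.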

Be aware that robots.txt changes can take up to 24 hours to propagate across both OpenAI’s and Perplexity’s systems.

Watch out for

WAF-level blocks hiding behind a clean robots.txt. Your robots.txt may look fine, but your host’s web application firewall could be dropping AI crawler requests before they reach your server. Test by checking server access logs for AI bot user agents. If robots.txt allows these crawlers but the logs show no requests from any of them over several weeks, a firewall or CDN rule is a likely cause.
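The log check can be sketched as follows, assuming each access log line includes the request's user agent string (as in the common combined log format). The function name `count_ai_hits` is hypothetical. Matching is a plain case-insensitive substring search, so it can overcount if a bot name happens to appear elsewhere in a line, such as in a URL.

```python
from collections import Counter
from typing import Iterable

# User agents named in this article.
AI_AGENTS = [
    "GPTBot", "OAI-SearchBot", "OAI-AdsBot", "ChatGPT-User",
    "ClaudeBot", "Claude-User", "Claude-SearchBot", "PerplexityBot",
]


def count_ai_hits(log_lines: Iterable[str]) -> Counter:
    """Count log lines that mention each AI user agent (case-insensitive)."""
    hits = Counter({agent: 0 for agent in AI_AGENTS})
    for line in log_lines:
        lowered = line.lower()
        for agent in AI_AGENTS:
            if agent.lower() in lowered:
                hits[agent] += 1
    return hits
```

To run it against a log file, open the file and pass the handle, for example `with open("access.log") as f: print(count_ai_hits(f))`, where `access.log` stands in for wherever your host exposes logs. All-zero counts are only suggestive. On some managed platforms the logs you can see are recorded behind the CDN or WAF, which is exactly where blocked requests would never show up.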

Platform updates re-applying blocks. Even if you override your host’s default settings, platform updates may reset them. Document your intended crawler access policy and audit it quarterly.