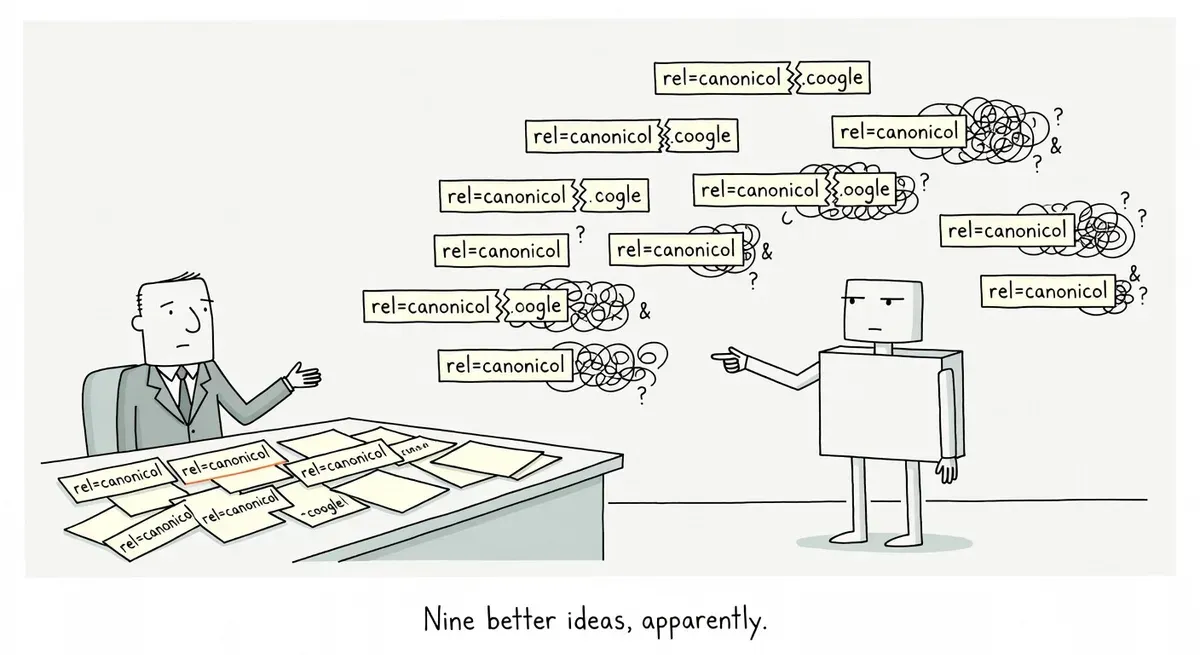

Mueller lists nine reasons Google overrides your rel=canonical

What happened

Google’s John Mueller shared nine distinct reasons why Google chooses one URL as canonical over another, even when site owners have set rel=canonical. The explanation came in a Reddit thread covered by Search Engine Journal, where a user asked Mueller to explain why Google sometimes picks the “wrong” canonical when two pages cover different topics.

Mueller prefaced the list by noting that no tool exists to tell you why something was considered duplicate. He said practitioners “often get a feel for it” over the years, but acknowledged the reasons aren’t always obvious.

The nine scenarios he listed:

- Exact duplicate content. The pages are fully identical, leaving no signal to distinguish one URL from another.

- Substantial duplication in main content. A large portion of the primary content overlaps, such as the same article appearing in multiple places.

- Too little unique content relative to template content. The page’s unique content is minimal, so repeated elements like navigation and menus dominate. The pages end up looking effectively the same.

- URL parameter patterns inferred as duplicates. When parameterized URLs like /page?tmp=1234 and /page?tmp=3458 return the same content, Google may generalize the pattern. Mueller noted this gets tricky with multiple parameters, asking rhetorically whether /page?tmp=1234&city=detroit would also be treated the same.

- Mobile version used for comparison. Google evaluates the mobile version, not the desktop version. People who manually check on desktop may see different content than what Google is comparing.

- Googlebot-visible version used for evaluation. Canonical decisions are based on what Googlebot actually receives, not what users see in a browser.

- Serving Googlebot alternate or non-content pages. Bot challenges, pseudo-error pages, or other generic responses shown to Googlebot may match previously seen content and trigger duplicate treatment.

- Failure to render JavaScript content. When Google can’t render the page, it falls back to the base HTML shell. If that shell is identical across pages, the pages look like duplicates.

- Ambiguity or misclassification in the system. A URL may be treated as duplicate because it appears “misplaced” or because of limitations in how Google’s system interprets similarity.
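Google hasn’t said how it measures similarity, so treat the following only as an illustration of the first three scenarios. It uses a common near-duplicate technique, word shingles compared with Jaccard similarity, which is an assumption and not Google’s documented method. The template and post text are made up.

```python
def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word windows ("shingles") in text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Hypothetical site template: the same navigation and footer text on every page.
TEMPLATE = " ".join(f"nav link {i} sidebar item {i} footer note {i}" for i in range(40))

# Two short posts on unrelated topics (illustrative text).
post_a = "Our review of the new trail running shoe."
post_b = "How to descale an espresso machine at home."

page_a = TEMPLATE + " " + post_a
page_b = TEMPLATE + " " + post_b

main_only = jaccard(shingles(post_a), shingles(post_b))
full_page = jaccard(shingles(page_a), shingles(page_b))
print(f"main content only: {main_only:.2f}, full page: {full_page:.2f}")
```

Compared on their main content alone, the two posts share no shingles and score 0. Wrapped in the same template, they score above 0.9, which is the “template dominates” situation Mueller describes.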

Why it matters

A rel=canonical link annotation is one of the strongest signals site owners can send Google about their preferred URL. But as Google’s own documentation makes clear, it’s still a hint, not a directive. The docs list redirects and rel=canonical as strong signals and sitemap inclusion as a weak one, and note that none of these methods is required and none guarantees which URL Google picks.

Mueller’s list explains the gap between what SEOs expect and what Google does. Several of the nine reasons point to problems that are invisible from a desktop browser. Mobile rendering differences, Googlebot-specific responses, and JavaScript rendering failures all produce a version of the page that the site owner never sees during manual review.

The URL parameter inference scenario is particularly relevant for e-commerce and large sites with faceted navigation. Google may correctly identify that most parameter variations are duplicates, then incorrectly apply that pattern to a parameter combination that actually produces unique content.
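Google hasn’t documented how it generalizes parameter patterns. The sketch below uses a deliberately simple, hypothetical rule to show how the failure can arise: a parameter is treated as irrelevant if, with other parameters held fixed, every sampled value returned the same content. Learned per parameter, the rule strips tmp but keeps city; a coarser rule that strips every parameter on the path (also hypothetical) collapses the Detroit page into the bare /page URL. The sample URLs and content hashes are made up.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def learn_irrelevant_params(samples: list[tuple[str, str]]) -> set[str]:
    """Hypothetical rule: a parameter is 'irrelevant' if it was seen with more
    than one value and, with all other parameters held fixed, every value
    returned the same content hash."""
    # param name -> (path, other params) -> {param value: content hash}
    seen = defaultdict(lambda: defaultdict(dict))
    for url, content_hash in samples:
        parts = urlsplit(url)
        params = dict(parse_qsl(parts.query))
        for name, value in params.items():
            others = tuple(sorted((k, v) for k, v in params.items() if k != name))
            seen[name][(parts.path, others)][value] = content_hash

    irrelevant = set()
    for name, contexts in seen.items():
        varied = any(len(values) > 1 for values in contexts.values())
        stable = all(len(set(values.values())) == 1 for values in contexts.values())
        if varied and stable:
            irrelevant.add(name)
    return irrelevant


def canonicalize(url: str, irrelevant: set[str]) -> str:
    """Drop parameters judged irrelevant; keep the rest in their original order."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k not in irrelevant]
    return urlunsplit(parts._replace(query=urlencode(kept)))


# Made-up crawl samples: (URL, hash of the returned main content).
SAMPLES = [
    ("https://example.com/page?tmp=1234", "h-generic"),
    ("https://example.com/page?tmp=3458", "h-generic"),
    ("https://example.com/page?city=detroit", "h-detroit"),
    ("https://example.com/page?city=chicago", "h-chicago"),
]

learned = learn_irrelevant_params(SAMPLES)  # {'tmp'}
url = "https://example.com/page?tmp=1234&city=detroit"
print(canonicalize(url, learned))           # https://example.com/page?city=detroit
print(canonicalize(url, {"tmp", "city"}))   # https://example.com/page (over-broad rule)
```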

The “too little unique content relative to template” scenario catches thin pages on sites with heavy global navigation. A short blog post surrounded by a large shared header, footer, and sidebar may look nearly identical to another short post when Google compares them.
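A rough way to estimate the content-to-template ratio, assuming you already have each page’s text split into blocks (paragraphs, navigation, footer), is to count any block that appears on more than half of the site’s pages as template and measure the share of words left over. The 50% cutoff, the block splitting, and the sample pages are illustrative assumptions, not anything Google has published.

```python
from collections import Counter


def unique_content_ratio(pages: dict[str, list[str]], template_share: float = 0.5) -> dict[str, float]:
    """For each page, return the share of its words that sit in blocks found on
    no more than `template_share` of the site's pages (i.e. not template)."""
    block_counts = Counter()
    for blocks in pages.values():
        block_counts.update(set(blocks))  # count each block at most once per page
    threshold = template_share * len(pages)

    ratios = {}
    for url, blocks in pages.items():
        total = sum(len(b.split()) for b in blocks)
        unique = sum(len(b.split()) for b in blocks if block_counts[b] <= threshold)
        ratios[url] = unique / total if total else 0.0
    return ratios


# Hypothetical pages already split into text blocks.
NAV = "Home Blog Shop About Contact Careers Press Support Login Cart"
FOOTER = "Copyright Example Inc All rights reserved Privacy Terms Cookies Sitemap"
pages = {
    "/trail-shoes": [NAV, "Short note about trail shoes.", FOOTER],
    "/espresso": [NAV, "Short note about espresso descaling.", FOOTER],
    "/guide": [NAV, "A longer guide that explains how to pick trail shoes by terrain, "
                    "fit, cushioning, weight, and grip, and when to replace them.", FOOTER],
}

for page, ratio in unique_content_ratio(pages).items():
    print(page, round(ratio, 2))
```

Here the two short posts come out at 0.2 (80% template by word count), while the longer guide keeps just over half its words as unique content.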

What to do

Check your canonical overrides in Google Search Console’s Page indexing report (Indexing > Pages). Select the reason “Duplicate, Google chose different canonical than user.” That list shows where Google is actively disagreeing with your rel=canonical.
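For more than a handful of URLs, the Search Console URL Inspection API reports both values per inspected URL, in the userCanonical and googleCanonical fields of inspectionResult.indexStatusResult. The sketch below assumes you have already collected those responses and only compares the two fields. The sample responses are made up and trimmed, and the client call in the comment is shown for orientation, not as a complete, authenticated script.

```python
def canonical_mismatches(results: dict[str, dict]) -> list[tuple[str, str, str]]:
    """Return (url, user_canonical, google_canonical) wherever the canonical
    Google selected differs from the one the page declared."""
    mismatches = []
    for url, response in results.items():
        status = response.get("inspectionResult", {}).get("indexStatusResult", {})
        user = status.get("userCanonical")
        google = status.get("googleCanonical")
        if user and google and user != google:
            mismatches.append((url, user, google))
    return mismatches


# Responses would normally come from the URL Inspection API, e.g. with
# google-api-python-client (auth setup omitted, assumed here):
#   service.urlInspection().index().inspect(
#       body={"inspectionUrl": url, "siteUrl": site}).execute()
# The two responses below are made-up examples trimmed to the fields used.
results = {
    "https://example.com/guide": {"inspectionResult": {"indexStatusResult": {
        "userCanonical": "https://example.com/guide",
        "googleCanonical": "https://example.com/guide"}}},
    "https://example.com/detroit": {"inspectionResult": {"indexStatusResult": {
        "userCanonical": "https://example.com/detroit",
        "googleCanonical": "https://example.com/chicago"}}},
}

for url, user, google in canonical_mismatches(results):
    print(f"{url}: you declared {user}, Google chose {google}")
```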

For each flagged URL, work through Mueller’s list as a diagnostic checklist:

- Inspect what Googlebot receives. Use the URL Inspection tool to see what Google actually fetched. Compare that to what you see in a browser. Look for bot-detection pages, interstitials, or empty content areas.

- Check the mobile version. Google uses mobile-first indexing. If your mobile page strips content that exists on desktop, Google may see two pages as more similar than they actually are.

- Test JavaScript rendering. Look at the raw HTML your server returns before any rendering (View Source, not the browser’s element inspector). If your unique content loads via client-side JavaScript and rendering fails, Google sees only the template shell. After a live test in the URL Inspection tool, “View tested page” shows the rendered HTML and a screenshot, which confirm whether the content appeared.

- Audit parameter URLs. If you use query parameters, check whether Google is collapsing parameter variations that should remain distinct. Look at indexed URLs in Search Console to see which parameter combinations Google has kept.

- Measure content-to-template ratio. Pages with very little unique text surrounded by large shared templates are at risk. Adding more substantive unique content is the direct fix.
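For the JavaScript check, a quick first pass is to count how much visible text the raw, pre-render HTML carries. This sketch uses Python’s standard html.parser to collect text outside script, style, and template elements. The 50-word cutoff is an arbitrary assumption, and a low count only suggests the content depends on rendering; the URL Inspection tool is still where you confirm what Google saw.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect words that are not inside <script>, <style>, or <template>."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.depth = 0   # greater than 0 while inside a skipped element
        self.words = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth:
            self.words.extend(data.split())


def raw_word_count(html: str) -> int:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return len(parser.words)


def looks_like_js_shell(html: str, min_words: int = 50) -> bool:
    """Hypothetical heuristic: flag raw HTML with very little visible text."""
    return raw_word_count(html) < min_words


# Illustrative inputs: a client-rendered shell and a server-rendered page.
SHELL = ('<html><head><title>Shop</title><script src="/app.js"></script></head>'
         '<body><div id="root"></div></body></html>')
RENDERED = "<html><body><p>" + "Detailed product description text. " * 15 + "</p></body></html>"

print(looks_like_js_shell(SHELL), looks_like_js_shell(RENDERED))
```

Running it over every flagged URL and sorting by word count puts the likeliest template-only shells at the top of the review list.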

Stacking multiple canonicalization signals helps. Google’s documentation notes that combining methods like redirects, rel=canonical, and sitemap inclusion increases the chance your preferred canonical is respected.
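Stacked signals help only when they agree. Assuming you have a page’s raw HTML and your sitemap’s URL list in hand, this sketch pulls the rel=canonical link out with html.parser and flags two conflicts: a declared canonical that the sitemap doesn’t list, and a non-canonical URL that the sitemap does list. Treating those as conflicts follows Google’s general advice to submit canonical URLs in sitemaps; the specific checks and example URLs are illustrative.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.href = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rels = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.href is None:
            self.href = attrs.get("href")


def check_signals(page_url: str, html: str, sitemap_urls: set[str]) -> list[str]:
    """Return human-readable conflicts between rel=canonical and the sitemap."""
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    if not finder.href:
        return ["no rel=canonical"]
    canonical = urljoin(page_url, finder.href)  # resolve relative hrefs
    problems = []
    if canonical not in sitemap_urls:
        problems.append(f"canonical {canonical} not in sitemap")
    if canonical != page_url and page_url in sitemap_urls:
        problems.append(f"non-canonical {page_url} listed in sitemap")
    return problems


# Hypothetical sitemap that accidentally lists a sorted variant.
SITEMAP = {"https://example.com/shoes", "https://example.com/shoes?sort=price"}
HTML = '<html><head><link rel="canonical" href="/shoes" /></head></html>'

print(check_signals("https://example.com/shoes?color=red", HTML, SITEMAP))
print(check_signals("https://example.com/shoes?sort=price", HTML, SITEMAP))
```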

Watch out for

Parameter inference spreading too far. Google may correctly learn that one type of parameter creates duplicates, then apply that pattern to a different parameter on the same domain. Sites with mixed parameter types (some cosmetic, some content-changing) are most exposed.

Bot-detection tools creating accidental duplicates. If your security or bot-management layer serves a challenge page or generic response to Googlebot, every affected URL looks identical. The result is mass duplicate classification that has nothing to do with your actual content.
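If you can log the response bodies your server or CDN actually sent to Googlebot (how you capture them depends on your stack and is assumed here), one way to spot this failure is to hash each body and look for one hash shared by many distinct URLs. The cutoff of three URLs and the sample responses are arbitrary.

```python
import hashlib
from collections import defaultdict


def identical_body_clusters(responses: dict[str, bytes], min_urls: int = 3) -> list[list[str]]:
    """Group URLs by the SHA-256 of their response body and return groups
    with at least `min_urls` members, largest first."""
    by_hash = defaultdict(list)
    for url, body in responses.items():
        by_hash[hashlib.sha256(body).hexdigest()].append(url)
    clusters = [sorted(urls) for urls in by_hash.values() if len(urls) >= min_urls]
    return sorted(clusters, key=len, reverse=True)


# Made-up logged responses: three URLs got the same challenge page.
CHALLENGE = b"<html><body>Checking your browser...</body></html>"
responses = {
    "https://example.com/a": CHALLENGE,
    "https://example.com/b": CHALLENGE,
    "https://example.com/c": CHALLENGE,
    "https://example.com/d": b"<html><body>Real product page</body></html>",
}

print(identical_body_clusters(responses))
```

Exact hashing misses challenge pages that embed a per-request token; stripping such tokens first, or using a similarity measure instead of an exact hash, catches those variants.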