AI search scores passages, not pages, killing pillar content

Summary

AI search engines score individual passages, not full pages, when selecting citations. This breaks traditional ranking logic: long pillar content suffers because each passage competes independently against AI-generated sub-queries you can't predict.

Audit content at the passage level and make sure each section stands alone as a complete answer. Put key claims at the start of each section so the chunks that cross-encoder models score contain your main point.

What happened

AI search engines score individual passages rather than full pages when deciding what to cite, according to a Sitebulb webinar featuring Dan Petrovic (founder of DEJAN agency) and Jes Scholz (growth marketing consultant). The session broke down the multi-step pipeline that runs between a user’s prompt and the citations that appear in an AI-generated response.

Petrovic described a four-stage process:

- Query reformulation. The system takes the user’s prompt and generates multiple synthetic search queries. These aren’t what the user typed. They’re what the system decides it needs to answer the question. A single prompt might produce two to six separate queries, each returning its own result set.

- Shortlisting. From several hundred results across those queries, the system filters down to a handful through re-ranking.

- Passage-level relevance scoring. For each shortlisted page, the system pulls the cached version and scores individual passages against the query using a cross-encoder model. Petrovic described cross-encoders as models that embed both the query and a target text chunk together, then score the pair for relevance.

- Grounding snippet creation. The most relevant passages get extracted into “grounding snippets” that feed into the language model’s context window alongside the reformulated queries.
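
The four stages can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how any particular engine is built: `reformulate` returns two hard-coded sub-queries where a real system would use a language model, retrieval is a simple word match standing in for traditional search, and `pair_score` (the share of query words found in the text) stands in for a trained cross-encoder. All function names, URLs and page text are invented for illustration, and the sketch stops at grounding snippets rather than modeling the language model itself.

```python
import re

# Toy model of the four-stage pipeline. Every component is a hypothetical
# stand-in chosen so the example runs without models or a search index.


def tokens(text):
    """Lowercased word set; a crude stand-in for real tokenization."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def reformulate(prompt):
    """Stage 1 (hypothetical): expand one prompt into synthetic sub-queries."""
    return [f"{prompt} pricing", f"{prompt} integrations"]


def pair_score(query, text):
    """Stand-in for a cross-encoder: score one (query, text) pair.

    Returns the share of query words that also appear in the text.
    """
    q = tokens(query)
    return len(q & tokens(text)) / len(q) if q else 0.0


def run_pipeline(prompt, index, shortlist_size=2, snippets_per_query=1):
    """index maps URL -> list of passages from the cached page."""
    grounding = []
    for query in reformulate(prompt):
        # Retrieval: pages sharing any word with the query, standing in
        # for the traditional search results the pipeline starts from.
        retrieved = [url for url, passages in index.items()
                     if tokens(query) & tokens(" ".join(passages))]
        # Stage 2: shortlist by re-ranking the retrieved pages as a whole.
        shortlist = sorted(
            retrieved,
            key=lambda url: pair_score(query, " ".join(index[url])),
            reverse=True,
        )[:shortlist_size]
        # Stage 3: score every passage on every shortlisted page on its own.
        scored = [(pair_score(query, p), url, p)
                  for url in shortlist for p in index[url]]
        scored.sort(key=lambda item: item[0], reverse=True)
        # Stage 4: the top passages become grounding snippets with sources.
        for _, url, passage in scored[:snippets_per_query]:
            grounding.append({"query": query, "url": url, "snippet": passage})
    return grounding


index = {
    "https://example.com/crm-guide": [
        "Our CRM guide covers everything about customer relationships.",
        "Pricing starts at 12 dollars per user per month for small teams.",
    ],
    "https://example.com/integrations": [
        "The CRM integrations list includes email and accounting tools.",
    ],
    "https://example.com/recipes": ["How to bake sourdough bread at home."],
}

for snippet in run_pipeline("small business crm", index):
    print(snippet["query"], "->", snippet["url"])
# small business crm pricing -> https://example.com/crm-guide
# small business crm integrations -> https://example.com/integrations
```

In this toy run the recipe page never reaches the shortlist because it fails retrieval, and each sub-query is answered by one passage rather than by a whole page.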

The language model then synthesizes its response from those snippets and attaches citations back to the grounding sources. Petrovic noted that the model also carries biases from pre-training and fine-tuning, meaning the grounding snippets compete with whatever the model already “knows.”

If a user continues the conversation, the entire pipeline repeats for each follow-up.

Why it matters

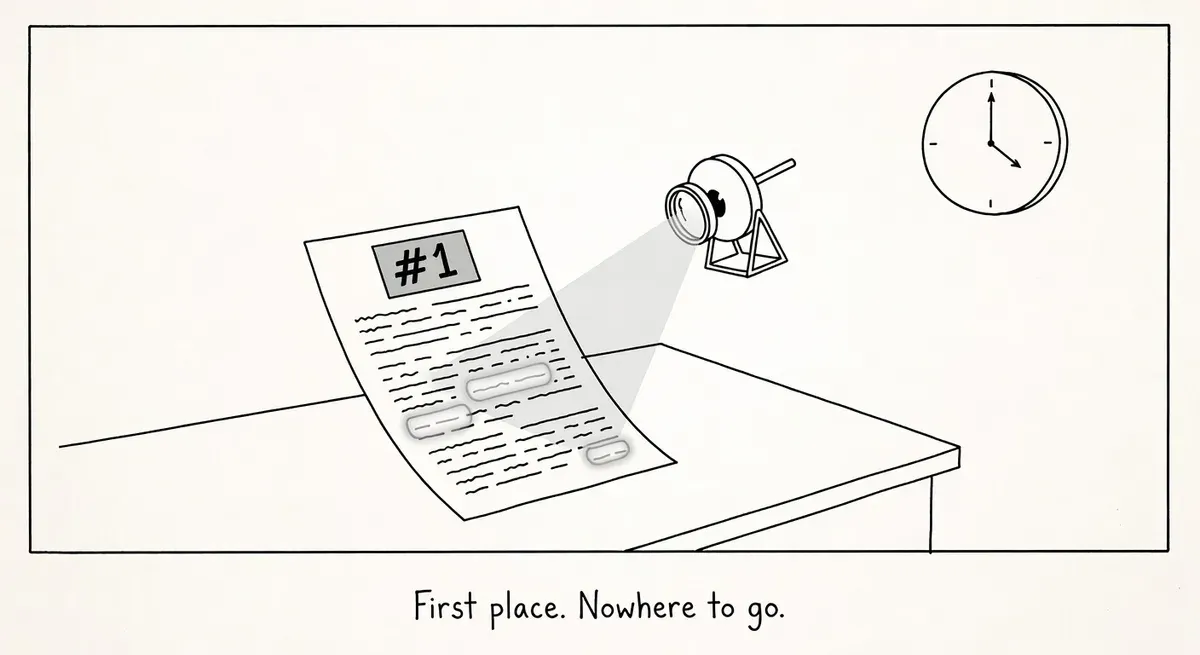

The passage-level scoring step changes what “ranking” means in AI search. A page can rank well in traditional search and still get zero citations if no single passage on that page scores highly enough against the reformulated queries.

Long-form pillar content is particularly exposed. A 3,000-word guide covering ten subtopics might rank for dozens of keywords in classic search. But in the AI pipeline, each passage competes independently. If no individual section is the best answer for the specific sub-query the system generated, the page gets passed over entirely.
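
A toy comparison shows how a page can win as a whole yet lose passage by passage. It assumes a made-up word-overlap `score` function in place of a real cross-encoder and two invented pages: a "pillar" page with one brief line per subtopic, and a page focused on pricing alone.

```python
import re


def score(query, text):
    """Hypothetical stand-in for a cross-encoder: share of query words in text."""
    q = set(re.findall(r"[a-z0-9]+", query.lower()))
    t = set(re.findall(r"[a-z0-9]+", text.lower()))
    return len(q & t) / len(q) if q else 0.0


# Invented pages: a pillar page with one brief line per subtopic, and a page
# that covers only pricing.
pages = {
    "pillar": ["CRM pricing varies.", "CRM integrations matter.",
               "CRM support is important."],
    "focused-pricing": ["CRM pricing for small teams starts at 12 dollars "
                        "per user per month, billed annually."],
}


def page_level_winner(query):
    """Classic-search view: score each page as one document."""
    return max(pages, key=lambda url: score(query, " ".join(pages[url])))


def passage_level_winner(query):
    """Passage view: every passage competes on its own; return its page."""
    return max((score(query, p), url)
               for url, passages in pages.items() for p in passages)[1]


print(page_level_winner("crm pricing integrations support"))  # pillar
print(passage_level_winner("crm pricing per user month"))     # focused-pricing
```

Scored as one document, the pillar page matches the broad query best; split into passages, its one-line pricing section loses the pricing sub-query to the focused page.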

Cross-encoder scoring works differently from the bi-encoder models used in standard dense retrieval (the kind documented in toolkits like Pyserini). Bi-encoders embed queries and documents separately, then compare the resulting vectors. Cross-encoders process the query and passage together in a single pass, producing more accurate but more computationally expensive relevance scores. Because a cross-encoder sees only the query and the passage text, the practical effect is that passage quality matters more than page-level authority signals at this stage.
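
The cost difference between the two architectures can be shown by counting model calls. In this sketch a bag-of-words `Counter` stands in for a dense embedding and a word-overlap function stands in for a cross-encoder; both are hypothetical, and only the call pattern is the point. A bi-encoder encodes each passage once and reuses that vector for every query, while a cross-encoder needs a separate pass for every (query, passage) pair.

```python
import math
import re
from collections import Counter

calls = Counter()  # how many model passes each approach performs


def words(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def bi_encode(text):
    """Hypothetical bi-encoder: turns ONE text into a vector on its own.
    A bag-of-words Counter stands in for a dense embedding."""
    calls["bi"] += 1
    return Counter(words(text))


def cosine(a, b):
    """Compare two precomputed vectors; no model pass needed."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def cross_encode(query, passage):
    """Hypothetical cross-encoder: sees the query and passage TOGETHER and
    returns one score. Word overlap stands in for a transformer pass."""
    calls["cross"] += 1
    q, p = set(words(query)), set(words(passage))
    return len(q & p) / len(q) if q else 0.0


passages = [
    "CRM pricing starts with a free tier.",
    "CRM integrations cover email and accounting.",
    "Support is available by chat and phone.",
    "Most tools offer a mobile app.",
]
queries = ["crm pricing", "crm integrations", "crm support"]

# Bi-encoder: encode each passage once, then reuse it for every query.
passage_vecs = [bi_encode(p) for p in passages]
bi_scores = []
for q in queries:
    query_vec = bi_encode(q)
    bi_scores.append([cosine(query_vec, v) for v in passage_vecs])

# Cross-encoder: one pass per (query, passage) pair.
cross_scores = [[cross_encode(q, p) for p in passages] for q in queries]

print(calls["bi"], calls["cross"])  # 7 12
```

With N passages and M queries, the bi-encoder needs N + M encodings (and in production the passage vectors are usually computed ahead of time at indexing), while the cross-encoder needs N × M passes. That is why cross-encoders are typically applied only to a shortlist, as in the re-ranking stages Petrovic described.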

The query reformulation step adds another wrinkle. You don’t control which synthetic queries the system generates from a user’s prompt. Your content needs to match sub-queries you can’t predict or see in Search Console.

What to do

Audit your content at the passage level. Read each section of your key pages as if it were a standalone answer. Does it make a clear, complete claim that directly responds to a question? Passages that meander or require surrounding context to make sense will score poorly against a focused sub-query.
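
A rough first pass at this audit can be scripted. The sketch below is a simple heuristic, not a reproduction of how any cross-encoder judges text. It assumes pages are available as Markdown with `## ` section headings, flags sections that open with words that usually point back to earlier text (the `CONTEXT_DEPENDENT_OPENERS` list is an assumption), and flags sections shorter than an arbitrary `MIN_WORDS` cut-off. The sample page is invented.

```python
import re

# Hypothetical heuristics: openers that usually lean on earlier text, and an
# arbitrary minimum length. Both are assumptions to tune for your content.
CONTEXT_DEPENDENT_OPENERS = ("this ", "that ", "these ", "those ", "it ",
                             "they ", "as mentioned", "as noted", "as we saw")
MIN_WORDS = 25


def split_sections(markdown):
    """Split a page on '## ' headings into (heading, body) pairs.

    Any text before the first heading is ignored.
    """
    sections = []
    for block in re.split(r"^## ", markdown, flags=re.MULTILINE)[1:]:
        heading, _, body = block.partition("\n")
        sections.append((heading.strip(), body.strip()))
    return sections


def audit(markdown):
    """Return (heading, problems) for sections that may not stand alone."""
    report = []
    for heading, body in split_sections(markdown):
        problems = []
        if body.lower().startswith(CONTEXT_DEPENDENT_OPENERS):
            problems.append("opens by referring to earlier context")
        if len(body.split()) < MIN_WORDS:
            problems.append(f"fewer than {MIN_WORDS} words")
        if problems:
            report.append((heading, problems))
    return report


page = """## Pricing
ExampleCRM costs 12 dollars per user per month on the Starter plan and 29
dollars on the Pro plan, with a 14-day free trial and no setup fee for teams
under ten users.
## Integrations
This is why it matters.
"""

for heading, problems in audit(page):
    print(heading, problems)
# Integrations ['opens by referring to earlier context', 'fewer than 25 words']
```

Flagged sections are candidates for a manual read rather than automatic failures; the real test is still whether a section answers a focused question on its own.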

Tighten long-form content. If you have pillar pages covering many subtopics, check whether each section could stand alone as a strong answer. Sections that serve only as transitions or brief overviews are unlikely to score well in passage-level evaluation. Consider whether breaking them into focused pages would produce better individual passages.

Front-load key claims within sections. Cross-encoder models score chunks of text. If your main point appears in paragraph four of a section after three paragraphs of setup, the chunk containing the setup may be what gets evaluated. Put the answer first, then the explanation.
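
The effect can be illustrated with a fixed-size chunker. The article does not say how engines split pages into chunks, so the 30-word window (`CHUNK_WORDS`) and the word-overlap `score` function are assumptions standing in for a real chunker and cross-encoder. The same setup and answer text is arranged two ways, and the first chunk of each version is scored.

```python
import re

CHUNK_WORDS = 30  # assumption: real chunk sizes are not disclosed


def chunks(text, size=CHUNK_WORDS):
    """Split text into consecutive windows of `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def score(query, passage):
    """Hypothetical stand-in for a cross-encoder: share of query words present."""
    q = set(re.findall(r"[a-z0-9]+", query.lower()))
    p = set(re.findall(r"[a-z0-9]+", passage.lower()))
    return len(q & p) / len(q) if q else 0.0


setup = ("Choosing software is a big decision for any growing team, and there "
         "are many factors to weigh before you commit, including budgets, "
         "workflows, training needs, and long term plans for the business.")
answer = ("The best CRM for small businesses on a tight budget is one with a "
          "free tier and per user pricing.")
query = "best crm small businesses budget"

buried = setup + " " + answer        # answer after the setup
front_loaded = answer + " " + setup  # answer first

print(score(query, chunks(buried)[0]))        # 0.0
print(score(query, chunks(front_loaded)[0]))  # 1.0
```

In the buried version the answer still exists, but it sits in a later chunk; if the setup chunk is the one evaluated, the section contributes nothing for that sub-query.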

Write for sub-queries you can’t see. Think about how a system might decompose a broad question into specific sub-queries. If someone asks “best CRM for small businesses,” the system might generate sub-queries about pricing, integrations, ease of use, and support. Each of those needs a passage-level answer somewhere in your content.
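
A coverage check for the CRM example might look like this. The four sub-queries are guesses modeled on the ones listed above (real reformulations are not visible), the 0.5 `THRESHOLD` is arbitrary, and the word-overlap `score` again stands in for a cross-encoder. The hypothetical `coverage_gaps` function lists sub-queries that no passage answers well.

```python
import re

# Hypothetical sub-queries a system *might* generate for
# "best CRM for small businesses"; real reformulations are not visible.
SUB_QUERIES = [
    "crm pricing for small businesses",
    "crm integrations with email and accounting",
    "crm ease of use for beginners",
    "crm customer support options",
]
THRESHOLD = 0.5  # assumption: arbitrary cut-off for this toy scorer


def score(query, passage):
    """Stand-in for a cross-encoder: share of query words in the passage."""
    q = set(re.findall(r"[a-z0-9]+", query.lower()))
    p = set(re.findall(r"[a-z0-9]+", passage.lower()))
    return len(q & p) / len(q) if q else 0.0


def coverage_gaps(passages, sub_queries=SUB_QUERIES, threshold=THRESHOLD):
    """Return sub-queries whose best-matching passage scores below threshold."""
    return [q for q in sub_queries
            if max((score(q, p) for p in passages), default=0.0) < threshold]


passages = [
    "CRM pricing for small businesses usually starts with a free tier.",
    "Our CRM integrations cover email, calendar, and accounting tools.",
]
for gap in coverage_gaps(passages):
    print("no strong passage for:", gap)
# no strong passage for: crm ease of use for beginners
# no strong passage for: crm customer support options
```

Each gap points to a sub-topic that needs its own self-contained passage somewhere in your content.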

Don’t abandon traditional SEO. Both Scholz and Petrovic emphasized that the AI search pipeline still starts with traditional search results. Pages that don’t rank in the initial retrieval step never make it to the shortlist. Technical fundamentals like crawlability, indexation, and relevance signals remain the entry ticket.

Watch out for

Grounding snippet ≠ featured snippet. A featured snippet is drawn from a single top-ranking page for one specific query. Grounding snippets are extracted from multiple pages across multiple reformulated queries. Writing content to win featured snippets is a different task from writing passages that score well under cross-encoder evaluation.

Model bias can override your passages. Even if your passage makes it into the grounding context, the language model’s pre-training biases may steer the synthesized answer toward a different framing. Petrovic noted that you’re “hoping for the best” that the grounding pipeline sends the right signals. Brand mentions across the model’s training data still matter.