Pages ranking in Google can be invisible to AI search

Summary

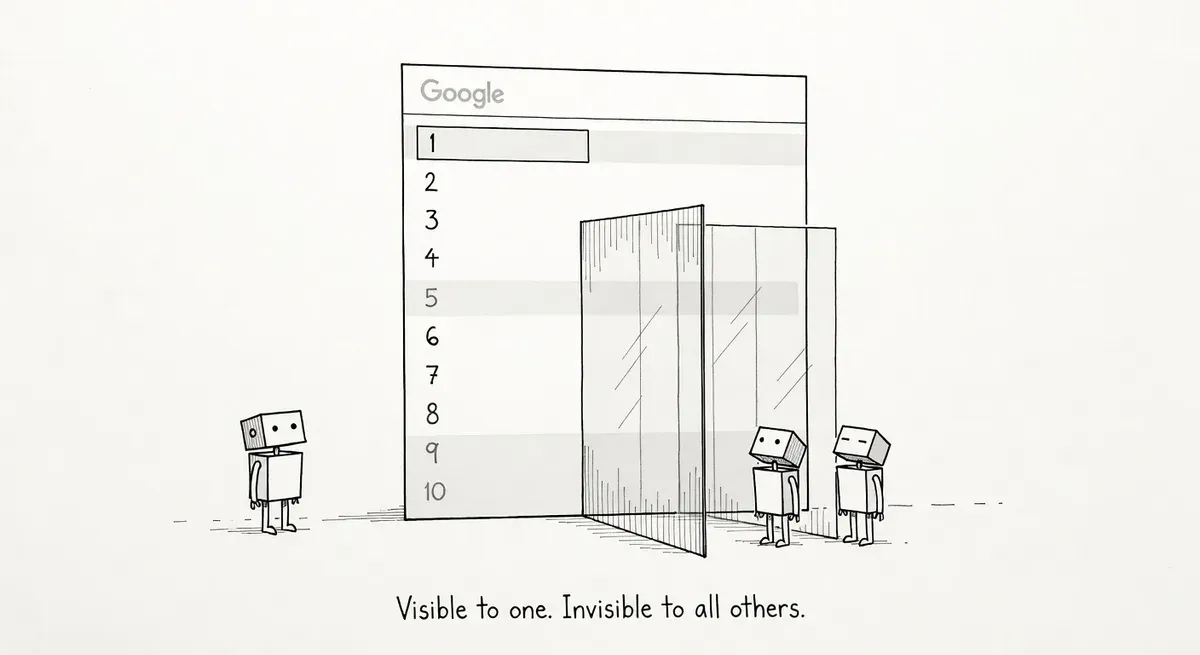

Pages ranking well in Google Search can be invisible to AI search engines like ChatGPT and Perplexity because the ranking signals differ fundamentally between them.

AI crawlers often don't execute JavaScript, may not be covered by your existing robots.txt rules, and may weight structured data differently than Google does. Sites with client-side rendering or outdated crawl directives face the biggest risk of AI search invisibility.

Audit your robots.txt for AI bots, test how pages render without JavaScript, and verify your content appears in ChatGPT Search and Perplexity results directly.

What happened

iPullRank published an AI search audit framework arguing that pages performing well in traditional Google Search can be completely invisible to AI-powered search engines. The framework addresses a growing gap between conventional SEO visibility and discoverability across AI search surfaces like ChatGPT, Perplexity, and Google’s AI Overviews.

The core premise is straightforward: the signals that help a page rank in Google’s organic results are not the same signals that AI search systems use to retrieve, parse, and cite content. A page can sit comfortably on page one of Google while being effectively absent from AI-generated answers.

Why it matters

Traditional Google Search follows a well-documented crawl-index-serve pipeline. Googlebot crawls pages, renders JavaScript, indexes the content, and ranks it against queries. AI search engines operate differently. Many rely on their own crawlers or third-party data pipelines that may not render JavaScript at all, may identify themselves with user-agent tokens your robots.txt never mentions, or may extract content in ways that miss key information.

The practical gap shows up in three areas:

- Rendering: Pages built with client-side JavaScript frameworks may render fine for Googlebot, which has a dedicated rendering pipeline. AI search crawlers often do not execute JavaScript. Content that only appears after client-side rendering (CSR) may never reach these systems.

- Crawl access: AI crawlers use different user agents than Googlebot. A robots.txt file that allows Googlebot but blocks crawlers like GPTBot, PerplexityBot, or Anthropic’s ClaudeBot, whether by name or through a restrictive wildcard (User-agent: *) rule written before those crawlers existed, will keep compliant AI crawlers from accessing the content.

- Structured data: Schema.org markup helps Google generate rich results, but AI search engines may weight structured data differently or use it as a primary extraction method rather than a supplementary signal. Pages without clear structured data may be harder for AI systems to parse and cite accurately.

Sites that invested heavily in JavaScript-rendered content or that haven’t updated their robots.txt since AI crawlers emerged are most at risk. E-commerce product pages, SaaS documentation, and content-heavy publishers with complex front-end architectures should pay particular attention.

What to do

Audit your robots.txt for AI crawlers. Check whether your robots.txt explicitly allows or blocks known AI user agents. The main tokens to account for include OAI-SearchBot (ChatGPT Search), GPTBot (OpenAI training), Google-Extended (a control token for Gemini training data rather than a separate crawler), PerplexityBot, Amazonbot, ClaudeBot, and Bytespider. If you want AI search visibility, the retrieval crawlers (such as OAI-SearchBot and PerplexityBot) need access to your content. Training-related tokens like GPTBot and Google-Extended affect whether your content shapes model knowledge but don’t directly control whether you appear in AI search results. Vendors document and occasionally change these names (Anthropic, for example, lists Claude-SearchBot and Claude-User alongside ClaudeBot), so confirm the current list against each vendor’s crawler documentation.
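A minimal sketch of this check, using Python's standard `urllib.robotparser`. The sample robots.txt, the `example.com` URL, and the `audit_robots` helper are hypothetical; the agent list simply mirrors the tokens named above and will need updating as vendors change them. Note that `robotparser` implements its own matching rules, which may differ in edge cases from how a given crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this article; treat the list as an assumption
# to be verified against each vendor's current documentation.
AI_USER_AGENTS = [
    "OAI-SearchBot", "GPTBot", "Google-Extended", "PerplexityBot",
    "Amazonbot", "ClaudeBot", "Bytespider",
]


def audit_robots(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> dict:
    """Return {user_agent: allowed} for one URL, given robots.txt content."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt: Googlebot allowed, GPTBot blocked by name,
# everyone else limited only by the wildcard rule.
SAMPLE_ROBOTS = """\
User-agent: Googlebot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

if __name__ == "__main__":
    results = audit_robots(SAMPLE_ROBOTS, "https://example.com/products/widget")
    for agent, allowed in results.items():
        print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

To audit a live site, you could pass the fetched contents of your own `/robots.txt` in place of `SAMPLE_ROBOTS` and loop over a list of high-value URLs.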

Test rendering without JavaScript. Disable JavaScript in your browser and check whether your key content is visible. If critical text, product details, or article bodies disappear, AI crawlers that don’t execute JS will see an empty or partial page. Server-side rendering or static rendering solves this problem at the architecture level.
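The browser check can also be approximated in a script: fetch the raw HTML (no JavaScript is executed) and confirm that key phrases appear in the static text. The sketch below uses only the Python standard library; the helper names, the sample pages, and the `render-audit-sketch/0.1` user-agent string are all hypothetical, and real AI crawlers may extract text differently.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class VisibleTextParser(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def static_text(html: str) -> str:
    """Text a client that does not run JavaScript could read from the HTML."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def missing_phrases(html: str, phrases) -> list:
    """Return the phrases that do not appear in the static text."""
    text = static_text(html).lower()
    return [p for p in phrases if p.lower() not in text]


def fetch_raw_html(url: str, user_agent: str = "render-audit-sketch/0.1") -> str:
    """Download the server response as-is; no scripts are executed."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Hypothetical pages: one client-side rendered, one server-side rendered.
CSR_PAGE = '<html><body><div id="root"></div><script>render("Acme Widget, $19")</script></body></html>'
SSR_PAGE = "<html><body><h1>Acme Widget</h1><p>Price: $19</p></body></html>"

if __name__ == "__main__":
    print("CSR missing:", missing_phrases(CSR_PAGE, ["Acme Widget", "$19"]))
    print("SSR missing:", missing_phrases(SSR_PAGE, ["Acme Widget", "$19"]))
```

For a live page, something like `missing_phrases(fetch_raw_html(url), ["your product name"])` would flag content that only exists after client-side rendering.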

Review your structured data coverage. Ensure pages have accurate, complete Schema.org markup that describes the content type, author, dates, and key entities. AI systems that extract structured data as a primary signal may miss or misread pages that rely solely on unstructured HTML.
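One way to spot-check coverage is to pull the JSON-LD blocks out of a page and list which expected properties are absent. This is a rough sketch under stated assumptions: it only looks at JSON-LD (not Microdata or RDFa), the `REQUIRED_FIELDS` tuple is an illustrative choice for article-style pages rather than any search engine's requirement, and the sample HTML and helper names are hypothetical.

```python
import json
from html.parser import HTMLParser

# Illustrative property list for an Article; adjust per content type.
REQUIRED_FIELDS = ("@type", "headline", "author", "datePublished", "dateModified")


class JsonLdParser(HTMLParser):
    """Collects the raw contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def extract_jsonld(html: str) -> list:
    """Return every JSON-LD object found in the page, flattening @graph."""
    parser = JsonLdParser()
    parser.feed(html)
    parser.close()
    items = []
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed block; a fuller audit would report it
        for candidate in data if isinstance(data, list) else [data]:
            if isinstance(candidate, dict):
                nodes = candidate.get("@graph", [candidate])
                items.extend(n for n in nodes if isinstance(n, dict))
    return items


def missing_fields(item: dict, required=REQUIRED_FIELDS) -> list:
    """List required properties that are absent or empty on one object."""
    return [field for field in required if not item.get(field)]


# Hypothetical page with an Article block that lacks dateModified.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article",
 "headline": "Example headline",
 "author": {"@type": "Person", "name": "Jane Doe"},
 "datePublished": "2025-01-15"}
</script>
</head><body><p>Body text</p></body></html>
"""

if __name__ == "__main__":
    for item in extract_jsonld(SAMPLE_HTML):
        print(item.get("@type"), "missing:", missing_fields(item))
```

A page that returns an empty list from `extract_jsonld` has no JSON-LD at all, which is usually the first thing worth flagging.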

Check AI search surfaces directly. Query your target keywords in ChatGPT Search, Perplexity, and Google AI Overviews. Note which competitors appear and whether your content is cited. There is no equivalent of Google Search Console for most AI search engines yet, so manual checks remain necessary.

Prioritize by page value. Start with your highest-traffic and highest-converting pages. If those pages are invisible to AI search, the revenue impact grows as user behavior shifts toward AI-assisted search.

Watch out for

Blocking AI crawlers unintentionally. Some CDNs and bot management tools classify AI crawlers as scrapers and block them at the edge, before they ever reach your server. Check your CDN and WAF logs to confirm AI user agents are getting 200 responses, not 403s.
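As a starting point for that log check, the sketch below tallies HTTP status codes per AI user agent. It assumes access logs in the common "combined" format; CDN and WAF exports often use different layouts (JSON, CSV), so the regular expression would need adapting. The sample lines, IP addresses, and shortened user-agent strings are made up for illustration, and because user-agent headers can be spoofed, the counts are indicative rather than proof of crawler identity.

```python
import re
from collections import Counter, defaultdict

# Tokens to look for inside the User-Agent header (taken from this article).
AI_AGENT_TOKENS = (
    "OAI-SearchBot", "GPTBot", "PerplexityBot",
    "ClaudeBot", "Amazonbot", "Bytespider",
)

# Combined log format tail: "REQUEST" STATUS BYTES "REFERER" "USER-AGENT"
LOG_PATTERN = re.compile(
    r'"[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def status_by_ai_agent(log_lines) -> dict:
    """Return {agent_token: Counter({status_code: hits})} for AI crawlers."""
    counts = defaultdict(Counter)
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # line is not in the assumed format
        agent = match.group("agent").lower()
        for token in AI_AGENT_TOKENS:
            if token.lower() in agent:
                counts[token][int(match.group("status"))] += 1
                break
    return counts


def blocked_agents(counts) -> list:
    """Agents that received at least one 403 response."""
    return sorted(agent for agent, codes in counts.items() if codes[403] > 0)


# Hypothetical log lines (documentation-range IPs, simplified user agents).
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2025:10:00:05 +0000] "GET /docs HTTP/1.1" 403 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2025:10:00:09 +0000] "GET /blog HTTP/1.1" 403 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2025:10:00:12 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

if __name__ == "__main__":
    counts = status_by_ai_agent(SAMPLE_LOG)
    for agent, codes in counts.items():
        print(agent, dict(codes))
    print("Agents seeing 403s:", blocked_agents(counts))
```

If an agent you want indexed shows up in the 403 list, the block is probably happening at the CDN or WAF layer rather than in robots.txt.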

Assuming Google visibility equals AI visibility. A page ranking #1 in Google may not appear in any AI search result. These are separate systems with separate retrieval methods. Treat AI search audits as a distinct workstream from traditional SEO audits.