ChatGPT free tier triggers web search in only 10.8% of queries

Summary

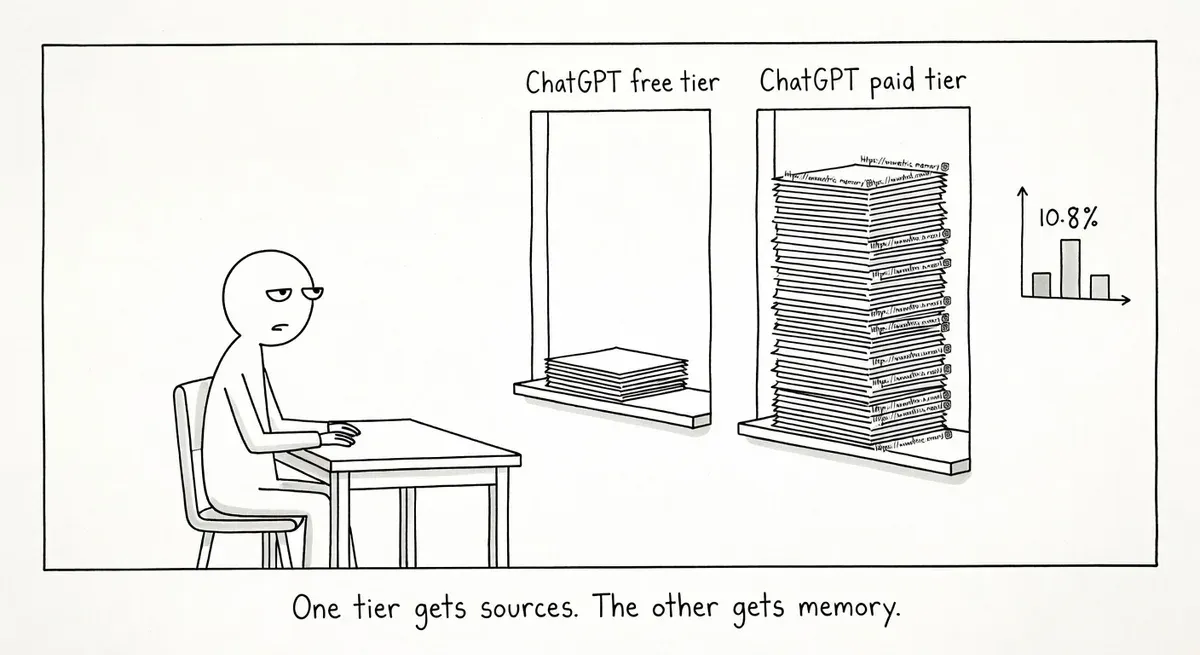

Free-tier ChatGPT triggered web search in only 10.8% of queries versus 47.4% for paid tiers, producing fewer citations and lower trustworthiness scores.

Free-tier users get answers with thinner evidence trails that rely more on training data, which leaves those answers more exposed to stale or fabricated information circulating in AI content loops.

Test how free-tier ChatGPT answers your brand questions, then strengthen your parametric footprint by maintaining consistent, accurate information across authoritative sources.

What happened

Free-tier ChatGPT models triggered a live web search in just 10.8% of queries, compared to 47.4% for paid-tier models. That finding comes from a WordLift analysis, published on May 4, 2026, of 56 ChatGPT enterprise SSE (server-sent events) traces.

The researchers classified traces by the model slug exposed in the SSE stream. Traces using gpt-5-3-mini were treated as a free-tier proxy, while gpt-5-3 and gpt-5-5-thinking served as paid-tier proxies. Only 56 of 131 total enterprise traces had usable model-slug metadata. WordLift’s Andrea Volpini describes the sample as “small” and frames the results as “a directional signal, not a final verdict.”
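The classification step can be sketched roughly as follows. This is a minimal illustration, not WordLift's code: the three slugs come from the article, but the `model_slug` and `used_web_search` keys are hypothetical names for fields in an already-parsed trace.

```python
# Slugs named in the article; treating them as tier proxies follows the study.
FREE_SLUGS = {"gpt-5-3-mini"}
PAID_SLUGS = {"gpt-5-3", "gpt-5-5-thinking"}


def classify_tier(trace: dict) -> str:
    """Return 'free', 'paid', or 'unknown' for one parsed trace.

    Assumption: the slug sits under a hypothetical 'model_slug' key.
    """
    slug = trace.get("model_slug")
    if slug in FREE_SLUGS:
        return "free"
    if slug in PAID_SLUGS:
        return "paid"
    return "unknown"  # no usable slug metadata, so the trace is excluded


def search_rate(traces: list, tier: str):
    """Share of traces in a tier that triggered web search, or None if empty.

    Assumption: a hypothetical boolean 'used_web_search' key marks the trace.
    """
    group = [t for t in traces if classify_tier(t) == tier]
    if not group:
        return None
    return sum(1 for t in group if t.get("used_web_search")) / len(group)


# Made-up traces for illustration only; these are not the study's data.
sample = [
    {"model_slug": "gpt-5-3-mini", "used_web_search": False},
    {"model_slug": "gpt-5-3-mini", "used_web_search": True},
    {"model_slug": "gpt-5-5-thinking", "used_web_search": True},
    {"used_web_search": True},  # missing slug, classified as 'unknown'
]
print(search_rate(sample, "free"), search_rate(sample, "paid"))
```

Traces that fall into `unknown` correspond to the ones the researchers could not use, which is how 131 traces shrink to 56.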

The grounding gap extended beyond search frequency. Free-tier traces produced 0.93 URLs per 1,000 characters versus 3.38 for paid-tier. Citation density was 0.14 per 1,000 characters for free versus 0.78 for paid. A composite “trustworthiness proxy” score came in at 49.2 for free-tier traces and 76.8 for paid.
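For readers who want to reproduce a "per 1,000 characters" figure on their own responses, here is a minimal sketch. The URL regex is a simplification, and how WordLift counted URLs or citations is not described in this article, so the citation count is left as an input.

```python
import re

# Simplified URL matcher (an assumption, not the study's method).
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def per_thousand_chars(count: int, text: str) -> float:
    """Normalize a raw count to a rate per 1,000 characters of text."""
    if not text:
        return 0.0
    return count * 1000 / len(text)


def url_density(text: str) -> float:
    """URLs per 1,000 characters, using the simplified matcher above."""
    return per_thousand_chars(len(URL_PATTERN.findall(text)), text)


# Illustrative response: two URLs, padded to exactly 2,000 characters.
response = "See https://example.com/a and https://example.com/b ".ljust(2000, ".")
print(url_density(response))
```

Normalizing by length keeps a long answer with many links from looking better grounded than a short answer with proportionally just as many.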

One finding the team did not expect: schema-related vocabulary appeared at similar rates across both groups (35.1% free, 31.6% paid). Both model tiers could discuss structured data and machine-readability fluently. The difference was whether the model actually verified claims against live pages.

Why it matters

The practical gap here is evidence density, not fluency. A free-tier response can sound equally authoritative while citing fewer sources and relying more heavily on parametric memory. For brands, that means free-tier users may receive answers built on stale or incorrect information and have no easy way to tell.

WordLift’s analysis found that 32.4% of free-tier traces were purely parametric, meaning no web search or retrieval happened at all. Only 5.3% of paid-tier traces behaved this way. When a model skips live retrieval, it falls back on whatever its training data contains. Old product descriptions, outdated positioning, or outright fabrications all become more likely to surface.

Lily Ray described the underlying feedback loop in her Substack piece on the AI Slop Loop. She documented how AI-generated misinformation enters training data and gets repeated until “repetition is treated as consensus.” Ray found Perplexity citing fabricated SEO news from AI-generated agency blog posts, including a nonexistent “September 2025 Perspective Core Algorithm Update.” When free-tier models search less, they become more vulnerable to exactly this kind of recycled misinformation.

The SSE stream schemas reinforce the behavioral data. Paid-tier traces exposed a richer orchestration layer with reasoning status fields, reasoning start/end times, and deliberation stages before answer assembly. Free-tier schemas were leaner, following a simpler prompt-to-answer flow with optional search.

For sites that depend on accurate brand representation in AI answers, the split matters. Most casual users are on free tiers. Those users get answers with thinner evidence trails and fewer citations back to primary sources.

What to do

The WordLift analysis suggests the gap is not about schema vocabulary but about whether models verify claims against live sources. Your structured data still matters, but it is not sufficient on its own.

Audit your brand’s parametric footprint. Ask free-tier ChatGPT questions about your brand, products, and key claims. Compare the answers against what paid-tier models return. Document where the free tier surfaces stale or incorrect information.
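One lightweight way to document the audit is a small script that checks pasted answers against a list of facts you expect to see. This is only a sketch under simple assumptions: answers are copied in by hand, matching is a case-insensitive substring test, and all names and sample values are hypothetical.

```python
def audit_answer(answer: str, expected_facts: dict) -> dict:
    """Map each fact label to True if its phrase appears in the answer."""
    lowered = answer.lower()
    return {label: phrase.lower() in lowered
            for label, phrase in expected_facts.items()}


def free_tier_gaps(free_answer: str, paid_answer: str, expected_facts: dict) -> list:
    """Labels of facts the paid answer contains but the free answer lacks."""
    free = audit_answer(free_answer, expected_facts)
    paid = audit_answer(paid_answer, expected_facts)
    return [label for label in expected_facts if paid[label] and not free[label]]


# Hypothetical brand facts and hand-pasted answers, for illustration only.
facts = {"founding_year": "founded in 2015", "headquarters": "Rome"}
free_text = "Example Brand is a software company based in Rome."
paid_text = "Example Brand, founded in 2015, is headquartered in Rome."
print(free_tier_gaps(free_text, paid_text, facts))
```

Substring matching will miss paraphrases, so treat the output as a prompt for manual review rather than a verdict.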

Strengthen signals that survive without live retrieval. When a model relies on training data rather than live search, the information it absorbed during training determines the answer. Consistent, accurate information across authoritative sources (your site, Wikipedia, industry publications) reduces the chance of hallucinated claims.

Keep structured data current on your pages. Both tiers showed similar schema awareness in vocabulary. The paid tier was more likely to check live pages. When it does check, accurate structured data on the page gives the model a machine-readable source of truth. Google’s structured data policies remain the baseline for markup quality.
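As an illustration of what a machine-readable source of truth can look like, the sketch below generates schema.org `Organization` markup as JSON-LD. The brand name, URLs, and description are placeholder values, and which properties matter for your site is a judgment call this example does not settle.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list) -> str:
    """Serialize a schema.org Organization as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs links the entity to its profiles on other authoritative sites.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Placeholder values; replace with your own verified brand details.
markup = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    description="Example Brand makes project-planning software.",
    same_as=["https://en.wikipedia.org/wiki/Example"],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from a single source of record makes it easier to keep the page, the markup, and third-party profiles in agreement.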

Watch for the slop loop. Monitor whether AI-generated content about your brand is entering the broader web. If fabricated claims get repeated across enough low-quality sites, they can become the parametric “consensus” that free-tier models fall back on.

Watch out for

Overreading the sample. The analysis covers 56 usable traces from enterprise accounts; it is not a direct comparison of free and paid consumer subscriptions. The model-slug classification is a proxy for tier, not a confirmed account type. Treat the specific percentages as directional rather than definitive.
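To see how much uncertainty a sample this small carries, you can put a confidence interval around each rate. The sketch below uses the standard Wilson score interval. The counts are hypothetical: 4 of 37 and 9 of 19 were chosen only because they reproduce the reported 10.8% and 47.4% and sum to 56, and the article does not state the actual group sizes.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96):
    """Wilson score interval for a proportion (z = 1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    z2 = z * z
    denom = 1 + z2 / total
    center = (p + z2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z2 / (4 * total * total)) / denom
    return center - half, center + half


# Hypothetical counts consistent with the reported percentages (see above).
free_low, free_high = wilson_interval(4, 37)
paid_low, paid_high = wilson_interval(9, 19)
print(f"free: {free_low:.1%} to {free_high:.1%}")
print(f"paid: {paid_low:.1%} to {paid_high:.1%}")
```

Wide intervals are the numeric version of "directional, not definitive": the direction of the gap may hold while the exact percentages shift with more data.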

Assuming schema markup alone closes the gap. The study found both tiers similarly fluent in schema vocabulary (35.1% free, 31.6% paid). The problem is that free-tier models often skip the step where they would actually visit your page and read your markup. Schema helps when the model checks. It does not force the model to check.